Agentic Server-Side Tool Calling

A new Agentic framework is emerging, one where AI Agents are lightweight and orchestrated in an Agentic Workflow.

The AI Agents form an Agentic Workflow or mesh, each with one or more specific tasks, plus one or more triage or classification agents.

The orchestration of multiple AI Agents also provides the opportunity to leverage specific and specialised language models for particular tasks along the way.

So the approach is that you have a grid or workflow which acts as an underlying guidance mechanism, and the Agentic nodes (AI Agents) act as the nodes of autonomy.

The balance this brings is valuable: the workflow supplies guidance, while each AI Agent retains autonomy within its own node.
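To make the idea of a guided mesh concrete, here is a minimal sketch of a triage step routing a query to a specialised agent. Everything in it is hypothetical: the agent names, the model labels and the keyword rules are placeholders of mine, and in a real workflow the `triage` function would itself be a language model call rather than string matching.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Agent:
    name: str
    model: str  # hypothetical label for the specialised model behind this agent
    handle: Callable[[str], str]


def triage(query: str) -> str:
    """Toy classifier: keyword rules stand in for a triage agent's model call."""
    text = query.lower()
    if "invoice" in text or "refund" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "support"
    return "general"


# The workflow (the "grid") is fixed; each node decides how to answer.
AGENTS: Dict[str, Agent] = {
    "billing": Agent("billing", "small-finance-model", lambda q: f"[billing] {q}"),
    "support": Agent("support", "code-tuned-model", lambda q: f"[support] {q}"),
    "general": Agent("general", "general-model", lambda q: f"[general] {q}"),
}


def run_workflow(query: str) -> str:
    """Route the query through triage, then hand it to the selected agent."""
    agent = AGENTS[triage(query)]
    return agent.handle(query)


print(run_workflow("I need a refund for last month"))
```

The routing table is the guidance; whatever happens inside each `handle` call is where the autonomy lives.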

There are, however, two options available to builders.

The first is to run your Agentic Workflow in a framework like Kore.ai, LangChain, etc.

This gives you a very granular approach in terms of managing your flow, and hard-coding certain aspects should you want to.

There are examples where a language model is fine-tuned to optimise tool selection, so both the Agentic Flow and the tool-selection logic can be built out explicitly within the flow.

The second option is to have a more compact approach, making full or partial use of an SDK provided by one of the LLM supplier companies.

Part of the SDK offering is that the model selects from a number of built-in tools within the SDK's execution routine.

There are advantages to taking this avenue and going for a more confined approach. You can also make use of this option to create an intelligent API with a host of functionality.

In this article, I’ll look at the xAI approach to performing server-side tool calling.

Using xAI as an example…

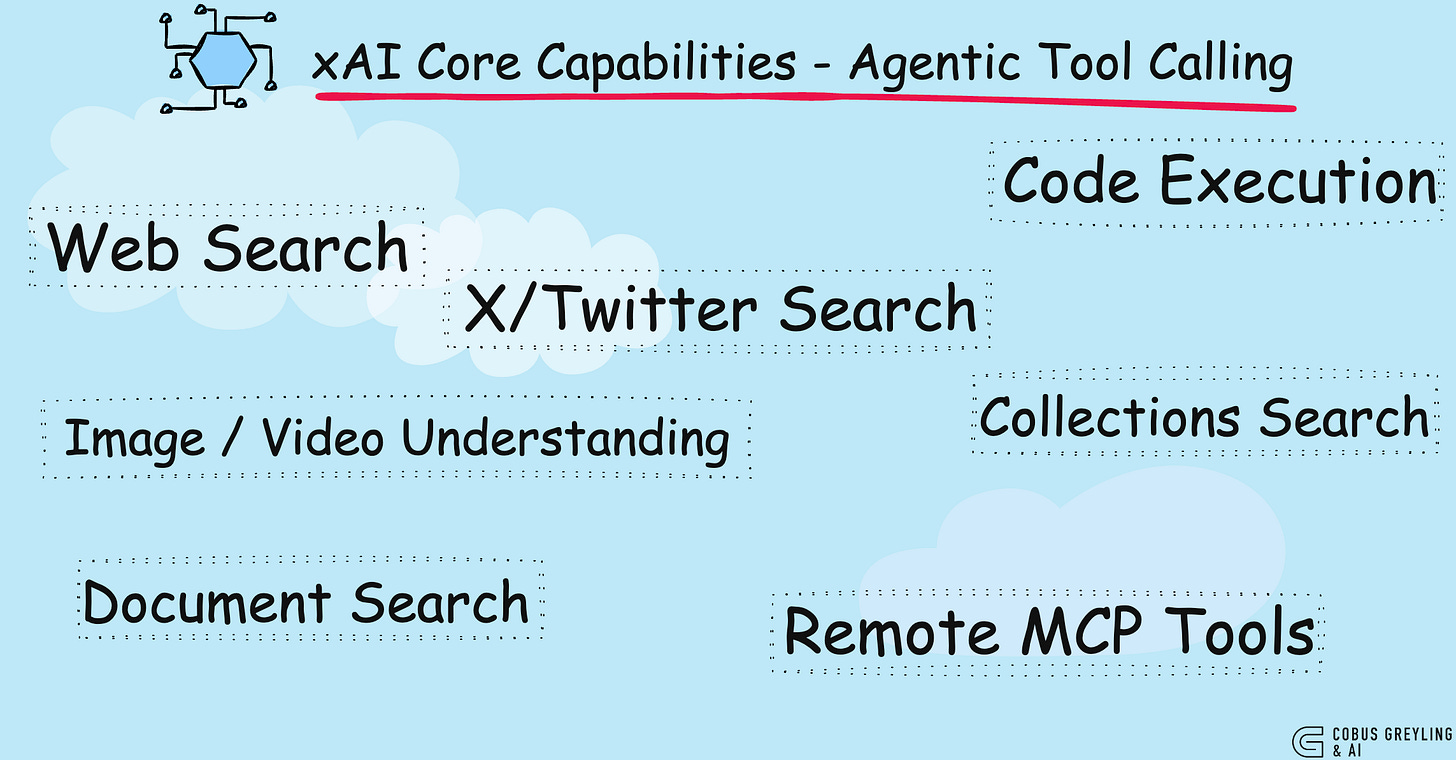

The xAI API supports agentic server-side tool calling, which enables the model to autonomously explore, search and execute code to solve complex queries.

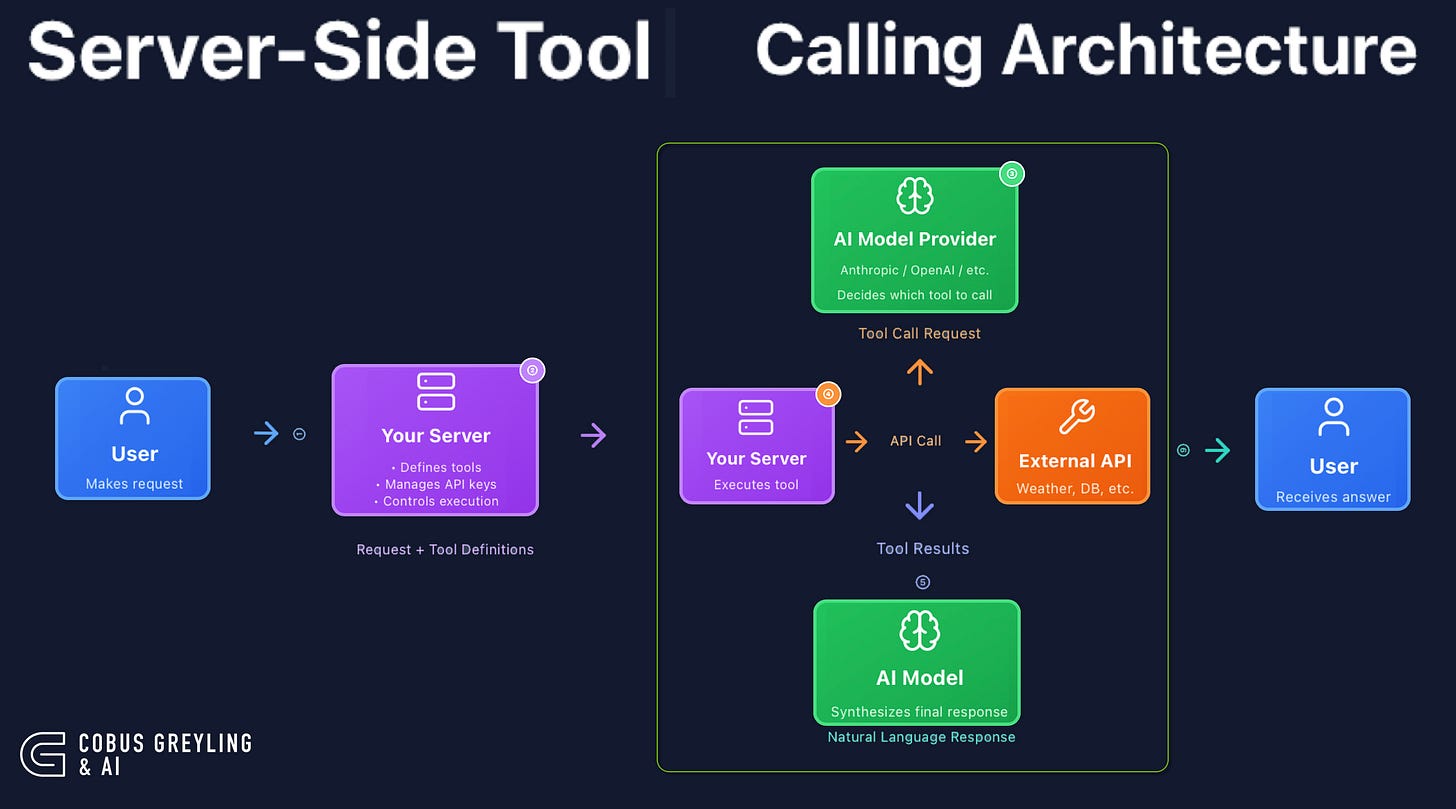

Unlike traditional tool-calling where clients must handle each tool invocation themselves, xAI’s agentic API manages the entire reasoning and tool-execution loop on the server side.
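For contrast, this is roughly what the client has to do in the traditional approach. It is a sketch only: `get_weather`, the `LOCAL_TOOLS` registry and the shape of the tool-call and tool-result dictionaries are illustrative assumptions of mine, not any specific vendor's schema.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical local tool that the client owns and executes."""
    return f"Sunny in {city}"


# Registry mapping tool names (as the model would emit them) to local functions.
LOCAL_TOOLS = {"get_weather": get_weather}


def handle_tool_call(tool_call: dict) -> dict:
    """Execute one model-requested tool call on the client side.

    The returned message would be appended to the conversation and sent
    back to the model in a follow-up request.
    """
    func = LOCAL_TOOLS[tool_call["name"]]
    args = json.loads(tool_call["arguments"])
    result = func(**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": result}


message = handle_tool_call(
    {"id": "call_1", "name": "get_weather", "arguments": '{"city": "Paris"}'}
)
print(message)
```

With server-side tool calling, this dispatch code and the extra round trip per tool call disappear from the client.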

Agentic requests are priced based on two components: token usage and tool invocations.

Since the agent autonomously decides how many tools to call, costs scale with query complexity.
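A rough way to reason about the two pricing components is sketched below. The token prices are the ones quoted in the sample response further down in this article ($0.2 per 1M input tokens, $0.5 per 1M output tokens) and may not be current. The per-tool-call price is a placeholder I made up, and billing reasoning tokens at the output rate is also my assumption, so check the xAI pricing page before relying on any of it. Cached-token discounts are ignored.

```python
def estimate_cost(
    prompt_tokens: int,
    completion_tokens: int,
    reasoning_tokens: int,
    tool_calls: int,
    input_price_per_m: float = 0.20,    # USD per 1M input tokens (quoted later in this article)
    output_price_per_m: float = 0.50,   # USD per 1M output tokens (quoted later in this article)
    price_per_tool_call: float = 0.005, # placeholder value, NOT an official price
) -> float:
    """Estimate the cost of one agentic request in USD."""
    # Assumption: reasoning tokens are billed like completion tokens.
    output_tokens = completion_tokens + reasoning_tokens
    token_cost = (
        prompt_tokens * input_price_per_m + output_tokens * output_price_per_m
    ) / 1_000_000
    tool_cost = tool_calls * price_per_tool_call
    return token_cost + tool_cost


# Token and tool-call counts taken from the sample run shown later in this article.
cost = estimate_cost(
    prompt_tokens=15353, completion_tokens=864, reasoning_tokens=1403, tool_calls=6
)
print(f"Estimated cost: ${cost:.4f}")
```

Even with placeholder numbers, the shape is instructive: the tool-invocation term grows with every extra search the model decides to run, which is exactly the part the caller does not control.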

When you provide server-side tools for a request, the xAI server handles everything autonomously through an intelligent reasoning loop.

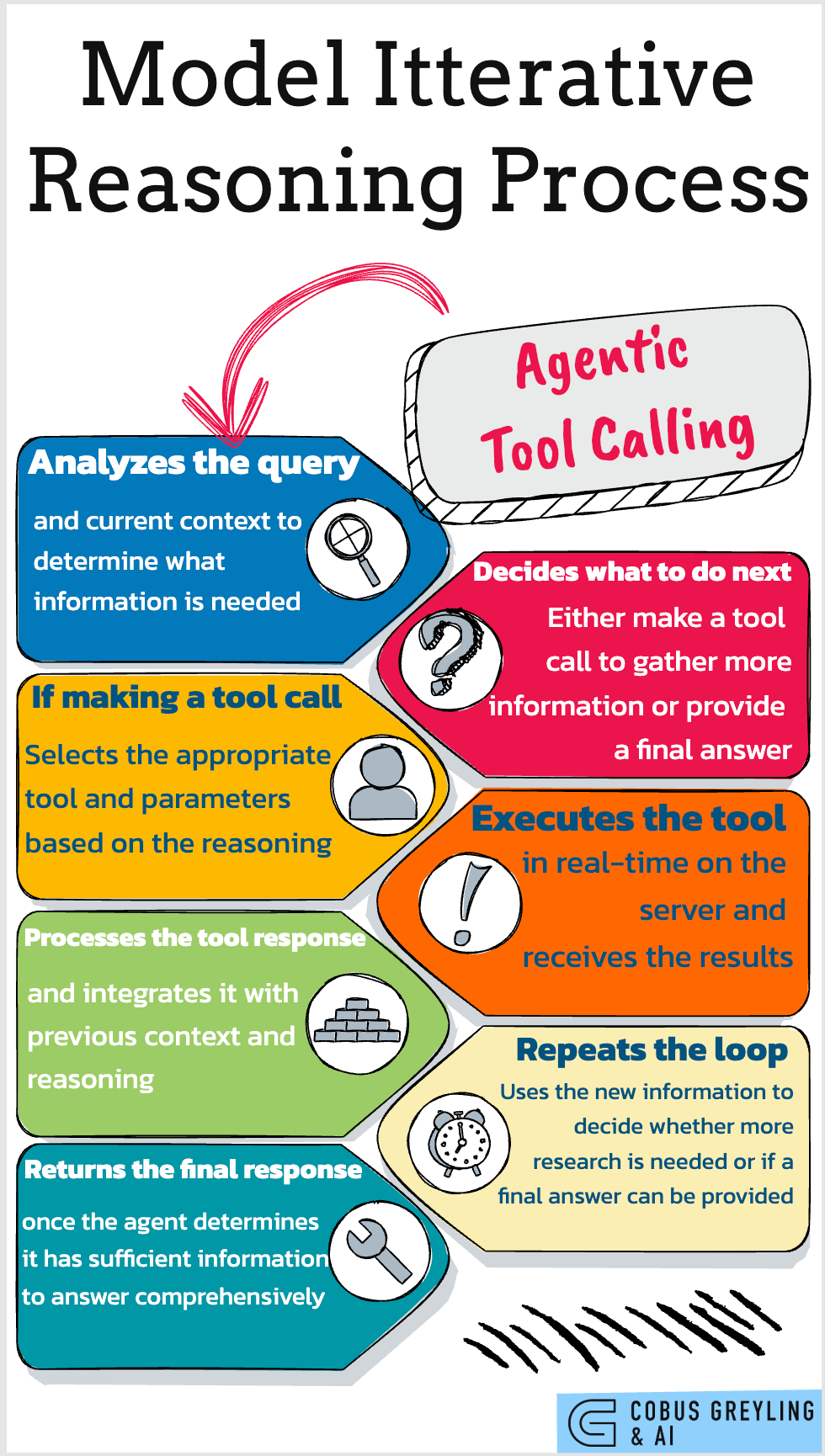

The model acts as an agent that independently researches, analyses, and crafts a response.

It starts by assessing the query and context to identify needed information, then decides whether to call a tool or deliver a final answer.

If a tool is required, it selects the right one with parameters, executes it in real time, integrates the results, and loops back, repeating as needed until it has enough data for a comprehensive reply.

This setup powers seamless multi-step investigations behind the scenes, with the client receiving just the polished final output, plus optional streaming updates like tool notifications for transparency.
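The loop described above can be sketched in a few lines. This is not xAI's implementation, which is not public; it is a toy version in which `scripted_model` stands in for the model's decision step and `fake_search` stands in for a real tool. All names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple


def fake_search(query: str) -> str:
    """Stand-in for a real server-side tool."""
    return f"results for {query!r}"


TOOLS: Dict[str, Callable[[str], str]] = {"search": fake_search}


def scripted_model(context: List[str]) -> Tuple[str, str, str]:
    """Stand-in for the model: request one search, then give a final answer.

    Returns (action, tool_name, payload).
    """
    if not any(line.startswith("tool:") for line in context):
        return ("call_tool", "search", context[0])
    return ("final", "", f"Answer based on {len(context) - 1} tool result(s)")


def agent_loop(
    query: str,
    decide: Callable[[List[str]], Tuple[str, str, str]] = scripted_model,
    max_steps: int = 5,
) -> str:
    """Assess, optionally call a tool, integrate the result, and repeat."""
    context = [query]
    for _ in range(max_steps):
        action, tool_name, payload = decide(context)
        if action == "final":
            return payload
        result = TOOLS[tool_name](payload)           # execute the chosen tool
        context.append(f"tool:{tool_name}:{result}")  # integrate the result
    return "Stopped: step limit reached"


print(agent_loop("latest xAI news"))
```

The `max_steps` guard is my own addition; some upper bound of this kind is a sensible precaution whenever the number of iterations is decided by the model.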

Below is a Python notebook that illustrates agentic server-side tool execution…

    # Cell 1: Install the xAI SDK if not already installed
    !pip install xai-sdk

Below you can see the three tools defined…web search, X (Twitter) search and code execution. Analogous to OpenAI’s free form functions…

    # Cell 2: Import necessary modules
    import os
    from google.colab import userdata
    from xai_sdk import Client
    from xai_sdk.chat import user
    from xai_sdk.tools import web_search, x_search, code_execution

The client is created, with the three tools visible here…

    # Cell 3: Create the client and chat session
    client = Client(api_key="Your xAI API Key")

    chat = client.chat.create(
        model="grok-4-1-fast",  # reasoning model
        # All server-side tools active
        tools=[
            web_search(),
            x_search(),
            code_execution(),
        ],
    )

    # Cell 4: Append user message and stream the response
    chat.append(user("What are the latest updates from xAI?"))

    is_thinking = True
    for response, chunk in chat.stream():
        # View the server-side tool calls as they are being made in real-time
        for tool_call in chunk.tool_calls:
            print(f"\nCalling tool: {tool_call.function.name} with arguments: {tool_call.function.arguments}")
        if response.usage.reasoning_tokens and is_thinking:
            print(f"\rThinking... ({response.usage.reasoning_tokens} tokens)", end="", flush=True)
        if chunk.content and is_thinking:
            print("\n\nFinal Response:")
            is_thinking = False
        if chunk.content and not is_thinking:
            print(chunk.content, end="", flush=True)

    print("\n\nCitations:")
    print(response.citations)
    print("\n\nUsage:")
    print(response.usage)
    print(response.server_side_tool_usage)
    print("\n\nServer Side Tool Calls:")
    print(response.tool_calls)

And below the response…

    Calling tool: x_keyword_search with arguments: {"query":"from:xai","mode":"Latest"}
    Thinking... (583 tokens)
    Calling tool: x_keyword_search with arguments: {"query":"from:xai","mode":"Top"}
    Thinking... (583 tokens)
    Calling tool: x_semantic_search with arguments: {"query":"xAI updates announcements releases","usernames":["xai"],"min_score_threshold":0.2}
    Thinking... (583 tokens)
    Calling tool: x_user_search with arguments: {"query":"xAI","count":5}
    Thinking... (583 tokens)
    Calling tool: browse_page with arguments: {"url": "https://x.ai/"}
    Thinking... (583 tokens)
    Calling tool: web_search with arguments: {"query":"xAI latest news updates 2025 site:x.ai OR @xai","num_results":15}
    Thinking... (1403 tokens)

Final Response:

**Latest updates from xAI (as of December 5, 2025):**

### November 19, 2025: Grok 4.1 Fast & xAI Agent Tools API

- xAI launched **Grok 4.1 Fast**, their top tool-calling model with a 2M-token context window. Optimized for enterprise tasks like customer support and deep research via long-horizon RL training and simulated environments.

- Tops agentic search benchmarks with native X integration, code execution, and web browsing.

- **Agent Tools API** released: Enables Grok to autonomously browse the web, search X posts, execute code, retrieve documents, etc., with minimal code.

- Free for 2 weeks (Grok 4.1 Fast on OpenRouter; all Agent Tools). API pricing: $0.2/1M input tokens, $0.5/1M output.

- [Details](https://x.ai/news/grok-4-1-fast) | [X Thread](https://x.com/xai/status/1991284813727474073)

### November 19, 2025: Partnership with Saudi Arabia (KSA) & HUMAIN

- Landmark deal: xAI partners with @HUMAINAI to deploy Grok nationwide—the first national-scale adoption.

- xAI to build next-gen hyperscale GPU data centers in the Kingdom.

- [Details](https://x.ai/news/grok-goes-global/) | [X Post](https://x.com/xai/status/1991224218642485613)

### November 17, 2025: Grok 4.1 Release

- **Grok 4.1** rolled out to all users on grok.com, X, iOS/Android apps (Auto mode or selectable).

- Excels in conversational intelligence, emotional understanding (EQ-Bench: 1586), empathy; users preferred it 65% over prior models in blind tests.

- [Details](https://x.ai/news/grok-4-1) | [X Post](https://x.com/xai/status/1990530499752980638)

### Recent Highlights (Homepage & Ongoing)

- **SuperGrok Heavy**: New subscription tier on Grok.com unlocks Grok Heavy model + higher rate limits.

- xAI API enhancements (e.g., Live Search, image gen) continue; hackathon announced Nov 10 for devs with early Grok/X API access.

- No major announcements since Nov 19 per @xai posts/news. Recent buzz includes internal work culture discussions (e.g., celebrated 36-hour shifts) and denied $15B funding rumors.

Follow [@xai](https://x.com/xai) or [x.ai/news](https://x.ai/news) for real-time updates.

Citations:

    ['https://www.aol.com/articles/xai-employees-celebrate-colleague-said-161643316.html', 'https://x.com/i/status/1991284822820720732', 'https://www.livemint.com/news/trends/indianorigin-xai-leader-defends-36-hour-shift-claim-at-elon-musk-s-ai-startup-let-us-cook-11764467188193.html', 'https://x.com/i/status/1990530503129391571', 'https://x.com/i/status/1987696575314104339', 'https://x.com/i/user/1661523610111193088', 'https://x.com/i/status/1991284832220188851', 'https://x.com/i/status/1991284828667527322', 'https://www.businessinsider.com/xai-employees-work-life-balance-celebrate-36-hour-shift-2025-12', 'https://x.com/i/user/150543432', 'https://x.com/i/user/1603826710016819209', 'https://x.com/i/status/1990530505209754056', 'https://x.com/i/status/1877536836924424445', 'https://x.com/i/status/1981865619176788279', 'https://x.com/i/status/1827151960300007434', 'https://x.com/i/status/1858945880076046656', 'https://x.com/i/status/1990530499752980638', 'https://timesofindia.indiatimes.com/technology/tech-news/elon-musks-xai-top-exec-ayush-jaiswal-reacts-to-colleagues-post-on-working-36-hours-with-no-sleep-says-dont-be-angry-with-/articleshow/125684351.cms', 'https://x.com/i/status/1991284833402908722', 'https://x.com/i/status/1991284824594870293', 'https://x.com/i/status/1991284818928366015', 'https://x.com/i/status/1981917247095685441', 'https://x.com/i/user/1019237602585645057', 'https://x.ai/news', 'https://x.com/i/status/1858942919685865501', 'https://x.ai/', 'https://x.com/i/status/1925244461875175616', 'https://x.com/i/status/1991284830420758687', 'https://x.com/i/status/1903098565536207256', 'https://www.hindustantimes.com/trending/us/indianorigin-exec-at-elon-musk-s-xai-reacts-after-techie-works-36-hours-straight-with-no-sleep-101764461313348.html', 'https://x.com/i/status/1868045132760842734', 'https://stocktwits.com/news-articles/markets/equity/elon-musk-xai-15-billion-fund-gpu-purchases/cLP9ISdRE3y', 'https://x.com/i/status/1991284813727474073', 'https://x.com/i/status/1991224224313487696', 'https://x.com/Boss7502/status/1995478720618856718', 'https://x.com/i/user/1875560944044273665', 'https://x.com/i/status/1975608017396867282', 'https://x.com/i/status/1853505214181232828', 'https://x.com/xai', 'https://x.com/i/status/1991224218642485613']

Usage:

    completion_tokens: 864
    prompt_tokens: 15353
    total_tokens: 17620
    prompt_text_tokens: 15353
    reasoning_tokens: 1403
    cached_prompt_text_tokens: 4167
    server_side_tools_used: SERVER_SIDE_TOOL_X_SEARCH
    server_side_tools_used: SERVER_SIDE_TOOL_X_SEARCH
    server_side_tools_used: SERVER_SIDE_TOOL_X_SEARCH
    server_side_tools_used: SERVER_SIDE_TOOL_X_SEARCH
    server_side_tools_used: SERVER_SIDE_TOOL_WEB_SEARCH
    server_side_tools_used: SERVER_SIDE_TOOL_WEB_SEARCH

    {'SERVER_SIDE_TOOL_X_SEARCH': 4, 'SERVER_SIDE_TOOL_WEB_SEARCH': 2}

Server Side Tool Calls:

    [id: "call_34623844"
    type: TOOL_CALL_TYPE_X_SEARCH_TOOL
    function {
      name: "x_keyword_search"
      arguments: "{\"query\":\"from:xai\",\"mode\":\"Latest\"}"
    }
    , id: "call_90839583"
    type: TOOL_CALL_TYPE_X_SEARCH_TOOL
    function {
      name: "x_keyword_search"
      arguments: "{\"query\":\"from:xai\",\"mode\":\"Top\"}"
    }
    , id: "call_46768945"
    type: TOOL_CALL_TYPE_X_SEARCH_TOOL
    function {
      name: "x_semantic_search"
      arguments: "{\"query\":\"xAI updates announcements releases\",\"usernames\":[\"xai\"],\"min_score_threshold\":0.2}"
    }
    , id: "call_71694125"
    type: TOOL_CALL_TYPE_X_SEARCH_TOOL
    function {
      name: "x_user_search"
      arguments: "{\"query\":\"xAI\",\"count\":5}"
    }
    , id: "call_66312245"
    type: TOOL_CALL_TYPE_WEB_SEARCH_TOOL
    function {
      name: "browse_page"
      arguments: "{\"url\": \"https://x.ai/\"}"
    }
    , id: "call_67095239"
    type: TOOL_CALL_TYPE_WEB_SEARCH_TOOL
    function {
      name: "web_search"
      arguments: "{\"query\":\"xAI latest news updates 2025 site:x.ai OR @xai\",\"num_results\":15}"
    }
    ]

Agentic server-side tool calling opens up a new world of defining levers and more intelligence behind the API…but a level of control is ceded to the model, which decides which tool to use and when.
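Since the model decides which tools run, one way to keep some oversight is to audit the reported tool calls after the fact. Here is a minimal sketch under stated assumptions: plain dictionaries stand in for the SDK's tool-call objects, the names and arguments are copied from the sample run above, and the `EXPECTED_TOOLS` policy is a hypothetical example of mine.

```python
import json
from collections import Counter

# Plain dicts standing in for the SDK's tool-call objects; names and
# arguments are copied from the sample run above.
SAMPLE_TOOL_CALLS = [
    {"name": "x_keyword_search", "arguments": '{"query":"from:xai","mode":"Latest"}'},
    {"name": "x_keyword_search", "arguments": '{"query":"from:xai","mode":"Top"}'},
    {"name": "x_semantic_search", "arguments": '{"query":"xAI updates announcements releases","usernames":["xai"],"min_score_threshold":0.2}'},
    {"name": "x_user_search", "arguments": '{"query":"xAI","count":5}'},
    {"name": "browse_page", "arguments": '{"url": "https://x.ai/"}'},
    {"name": "web_search", "arguments": '{"query":"xAI latest news updates 2025 site:x.ai OR @xai","num_results":15}'},
]

# Hypothetical policy: every tool except page browsing is expected.
EXPECTED_TOOLS = {"x_keyword_search", "x_semantic_search", "x_user_search", "web_search"}


def audit_tool_calls(tool_calls, expected):
    """Count calls per tool and list any call to a tool outside the expected set."""
    counts = Counter(call["name"] for call in tool_calls)
    unexpected = [
        (call["name"], json.loads(call["arguments"]))  # arguments arrive as a JSON string
        for call in tool_calls
        if call["name"] not in expected
    ]
    return counts, unexpected


counts, unexpected = audit_tool_calls(SAMPLE_TOOL_CALLS, EXPECTED_TOOLS)
print(dict(counts))
print(unexpected)
```

An audit like this does not restore control, but it does make the model's choices visible, which is a reasonable first step when deciding how much autonomy to hand over.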

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.

COBUS GREYLING

Where AI Meets Language | Language Models, AI Agents, Agentic Applications, Development Frameworks & Data-Centric… (www.cobusgreyling.com)