AI Agent Architectures

Efficiency Gains Meet Scaling Limits

In Short…

A short while ago I wrote an article on the three emerging AI Agent architectures.

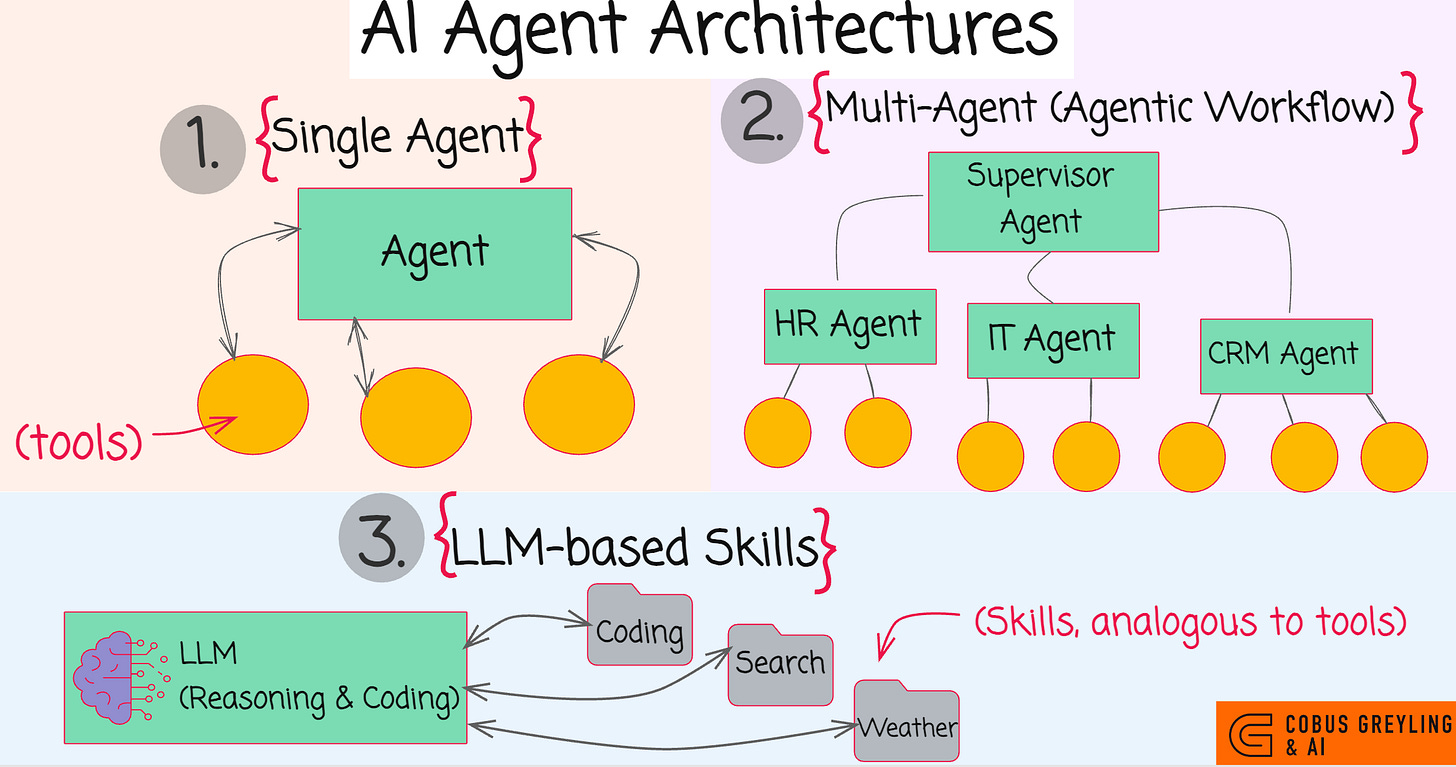

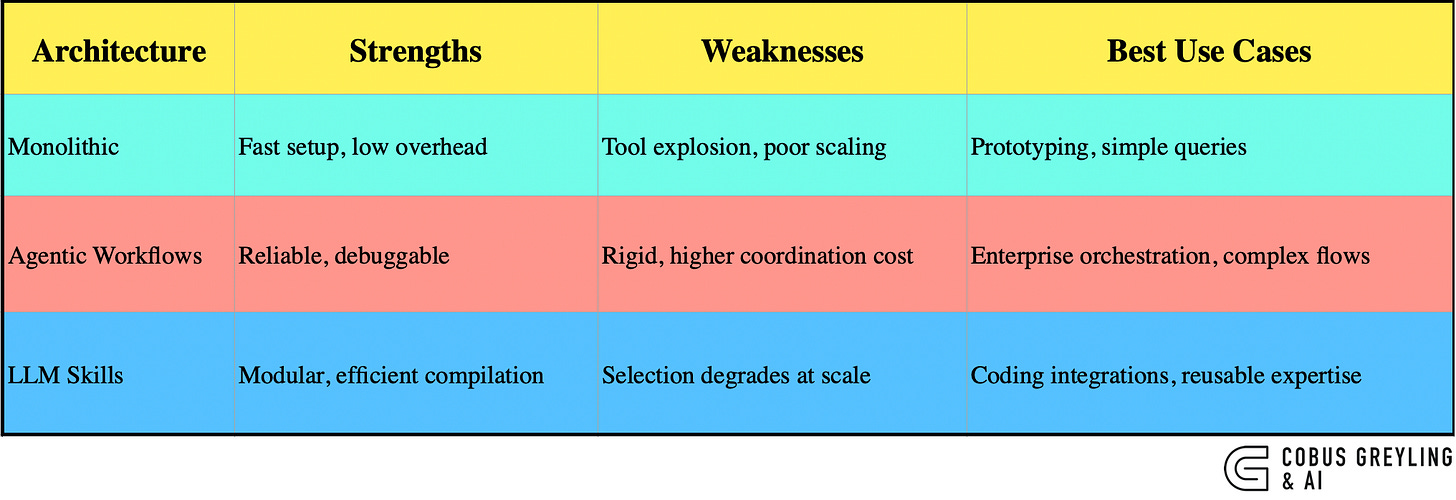

The three AI Agent architectures are (1) Monolithic Single Agent with tools, (2) Agentic Workflows and (3) LLM Skills.

Single-agent systems with tools deliver substantial efficiency gains over multi-agent setups for sequential tasks, yet face sharp scaling drops beyond capacity thresholds.

Hybrids combining workflows and modular skills emerge as the practical path forward in 2026.

LLM-based skills introduce a new dynamic, especially for coding and universal AI Agents.

Single AI Agents are faster and cheaper.

But, for complex tasks, skill selection accuracy plummets.

Hierarchical routing (Agentic Workflows) mitigates this by organising skills into categories, restoring reliability.

Supporting Analysis

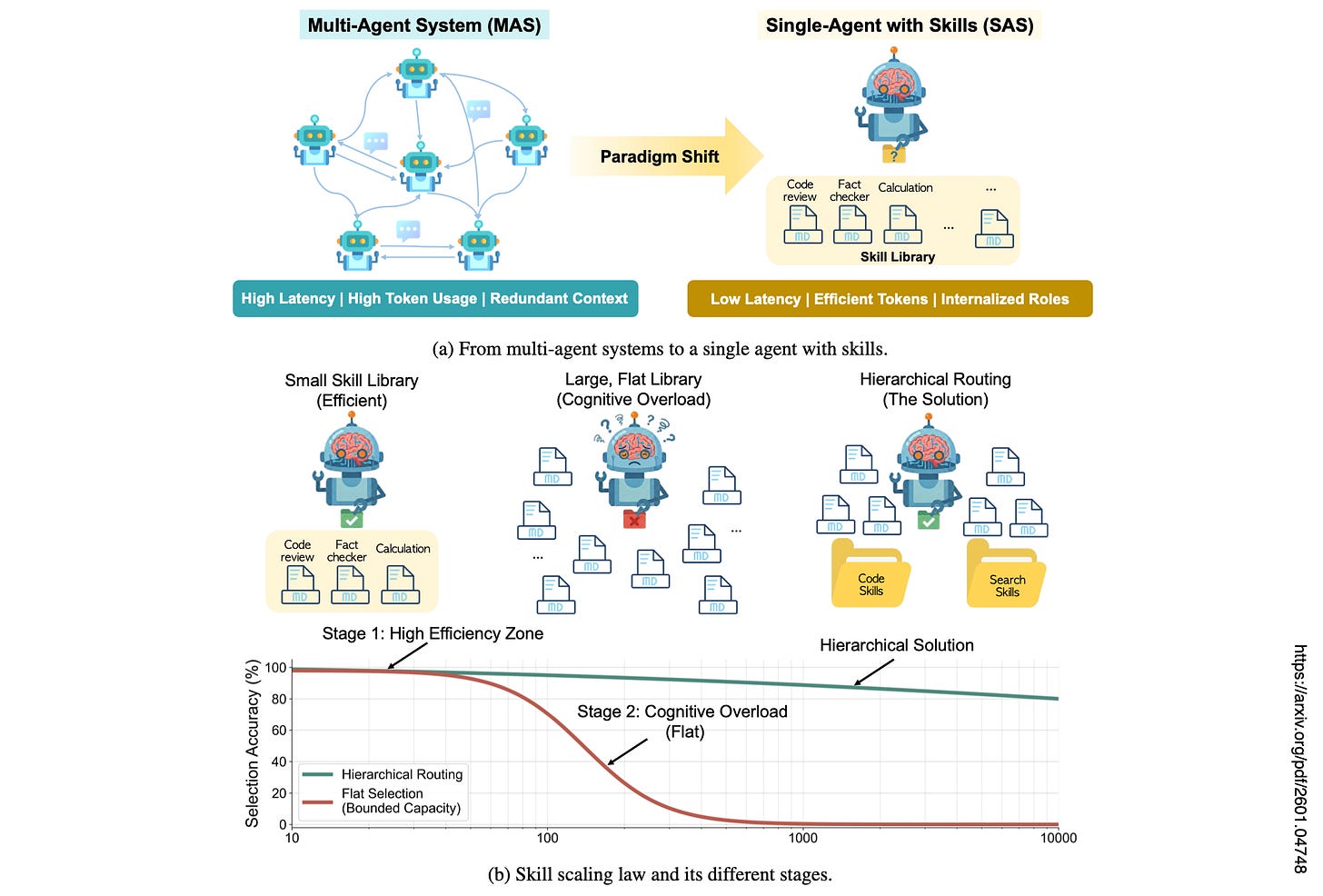

Recent research demonstrates that compiling multi-agent systems (MAS / Agentic Workflows) into a single agent with skills (SAS) reduces token usage by 54% and latency by 50% while matching accuracy on popular benchmarks.

This compilation internalises agent behaviours as selectable skills, eliminating communication overhead.

However, skill selection accuracy plummets non-linearly as libraries exceed 50–100 entries due to semantic confusability, mirroring human cognitive bounds.

Hierarchical routing mitigates this by organising skills into categories, restoring reliability.

From my Medium post, three architectures stand out: monolithic single agents with tools for quick prototyping, agentic workflows for structured enterprise reliability, and skill-based extensions like Anthropic skills for composable expertise.

Hybrids (Agentic Workflows) dominate production, blending orchestration with dynamic skills to balance flexibility and control.

The Three Core Architectures

My analysis highlights how AI Agents evolved beyond simple chatbots into goal-oriented systems, with no universal winner — choice depends on task complexity, cost, and scalability needs.

1. Monolithic Single Agent with Tools

A powerful LLM serves as the central brain, augmented by external functions like web search or code execution. It reasons step-by-step, invokes tools via structured calls, and iterates based on observations.

Best for rapid prototyping in simple scenarios with 10–20 tools maximum; beyond that, context overload reduces reliability.
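The reason, act, observe loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: `fake_llm`, `web_search`, `run_code` and `run_agent` are hypothetical names, and the model is replaced by a hard-coded stub so the example runs without any API.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools; real ones would call a search API or a code sandbox.
def web_search(query: str) -> str:
    return f"results for '{query}'"

def run_code(source: str) -> str:
    return "42"

TOOLS: Dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "run_code": run_code,
}

def fake_llm(goal: str, observations: List[str]) -> Tuple[str, str]:
    """Stand-in for the model: returns (action, argument).

    A real agent would send the goal, the tool schemas and the observations
    to an LLM and parse a structured tool call from its response.
    """
    if not observations:
        return "web_search", goal
    if len(observations) == 1:
        return "run_code", "print(6 * 7)"
    return "finish", f"answer based on {len(observations)} observations"

def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: List[str] = []
    for _ in range(max_steps):
        action, argument = fake_llm(goal, observations)
        if action == "finish":
            return argument
        # Invoke the chosen tool and feed its result back as an observation.
        observations.append(TOOLS[action](argument))
    return "stopped: step budget exhausted"
```

The step budget matters in practice: without it, a model that never emits a finish action would loop indefinitely.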

2. Agentic Workflows (Hybrids)

Multiple lightweight, specialised agents form a directed graph, each handling subtasks such as planning or critique.

Frameworks like LangGraph or OpenAI’s AgentKit enable visual composition, conditional flows, and debugging.

Suited to enterprise production for predictability and parallelism, using smaller models per node to control costs.
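To make the directed-graph idea concrete, here is a minimal sketch in plain Python rather than in LangGraph or AgentKit, whose APIs I am not reproducing here. The node names (`plan`, `draft`, `critique`), the approval rule and `run_workflow` are all hypothetical; each node would normally call a small model instead of returning a fixed string.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]

def plan(state: State) -> State:
    return {**state, "plan": f"outline for: {state['task']}"}

def draft(state: State) -> State:
    attempts = int(state.get("attempts", 0)) + 1
    return {**state, "draft": f"draft v{attempts}", "attempts": attempts}

def critique(state: State) -> State:
    # Hypothetical rule: the critic approves from the second draft onwards.
    return {**state, "approved": int(state["attempts"]) >= 2}

NODES: Dict[str, Node] = {"plan": plan, "draft": draft, "critique": critique}

def next_node(current: str, state: State) -> Optional[str]:
    """Edges of the graph, including one conditional edge after critique."""
    if current == "plan":
        return "draft"
    if current == "draft":
        return "critique"
    if current == "critique":
        return None if state["approved"] else "draft"
    return None

def run_workflow(task: str, max_hops: int = 10) -> State:
    state: State = {"task": task}
    current: Optional[str] = "plan"
    hops = 0
    while current is not None and hops < max_hops:
        state = NODES[current](state)
        current = next_node(current, state)
        hops += 1
    return state
```

Because the edges are explicit, the path a request takes is predictable and easy to log, which is the property that makes this style attractive for production.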

3. LLM Skills

A strong core LLM gains modular capabilities through reusable packages of instructions, scripts, and templates, loaded dynamically (Skills).

Anthropic’s approach exemplifies this, with skills as schema-bound operations for reasoning subtasks, blending tool-like execution with agent-like behaviours.
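A rough sketch of dynamic loading follows. It is not Anthropic's implementation; the `Skill` dataclass, `make_library` and `build_context` are hypothetical names, and I assume that short descriptors stay visible to the model while full instruction bodies are fetched only when a skill is activated.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Skill:
    name: str
    description: str               # short descriptor, always visible
    load_body: Callable[[], str]   # full instructions, fetched only on use

def make_library() -> Dict[str, Skill]:
    # Hypothetical skills; real bodies would be read from files on disk.
    return {
        "pdf-report": Skill(
            "pdf-report",
            "Build a PDF report from tabular data",
            lambda: "Step 1: load the table. Step 2: render pages.",
        ),
        "sql-review": Skill(
            "sql-review",
            "Review SQL queries for correctness",
            lambda: "Check joins, filters, and index usage.",
        ),
    }

def build_context(library: Dict[str, Skill], active: List[str]) -> str:
    """Descriptors for every skill, full bodies only for the active ones."""
    lines = [f"- {s.name}: {s.description}" for s in library.values()]
    for name in active:
        lines.append(f"[{name}]\n{library[name].load_body()}")
    return "\n".join(lines)
```

Calling `build_context(make_library(), [])` yields only the two descriptor lines, while passing `["sql-review"]` appends that skill's full instructions, so context cost grows with what is in use rather than with library size.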

Insights from Recent Research

Compiling multi-agent systems (MAS) into a single agent with skills (SAS) delivers efficiency gains, but hits scaling limits in skill selection; hierarchical routing resolves these capacity issues.

Compilation Results (SAS)

Faithful Performance

Architectures such as pipelines compile reliably.

Accuracy matches or improves slightly (+0.7% average), showing a 4% gain from unified context enabling better information flow.

Efficiency Gains

Reduces API calls from 3–4 to 1.

Token consumption drops by 53.7% average; latency falls by 49.5% average.
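To see what these averages mean for a single request, the arithmetic can be worked through as below. The baseline figures (10,000 tokens and 8 seconds for the multi-agent run) are hypothetical and chosen only for illustration; the reduction percentages are the averages quoted above.

```python
def after_reduction(baseline: float, reduction_pct: float) -> float:
    """Value that remains after cutting `baseline` by `reduction_pct` percent."""
    return baseline * (1 - reduction_pct / 100)

# Hypothetical multi-agent baseline for one request.
mas_tokens = 10_000
mas_latency_s = 8.0

# Reported average reductions: 53.7% tokens, 49.5% latency.
sas_tokens = after_reduction(mas_tokens, 53.7)
sas_latency_s = after_reduction(mas_latency_s, 49.5)

print(f"tokens:  {mas_tokens} -> {sas_tokens:.0f}")
print(f"latency: {mas_latency_s:.2f}s -> {sas_latency_s:.2f}s")
```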

Key Properties

Ensures behavioural fidelity by preserving output distributions.

Achieves cost efficiency by replacing communication overhead with selection costs.

Compilation Process

Decomposes AI Agent roles into discrete capabilities.

Assigns execution backends (internal prompts or external tools).

Internalises communication topology through output constraints to maintain functional equivalence.
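The three steps above can be sketched as a small transformation from agent roles to skills. This is my own simplified reading, not the paper's code: `AgentRole`, `CompiledSkill`, `compile_roles` and the wording of the output constraints are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class AgentRole:
    name: str
    instructions: str
    uses_tool: bool
    sends_to: List[str]    # downstream agents in the original topology

@dataclass
class CompiledSkill:
    name: str
    descriptor: str
    backend: str            # "internal_prompt" or "external_tool"
    output_constraint: str  # stands in for the message that used to be sent

def compile_roles(roles: List[AgentRole]) -> Dict[str, CompiledSkill]:
    skills: Dict[str, CompiledSkill] = {}
    for role in roles:
        # Step 1: each role becomes one discrete capability.
        # Step 2: assign an execution backend.
        backend = "external_tool" if role.uses_tool else "internal_prompt"
        # Step 3: turn outgoing edges into a constraint on the skill's output.
        if role.sends_to:
            constraint = "output must be usable as input to: " + ", ".join(role.sends_to)
        else:
            constraint = "output is the final answer"
        skills[role.name] = CompiledSkill(role.name, role.instructions, backend, constraint)
    return skills

pipeline = [
    AgentRole("planner", "Break the task into steps", False, ["solver"]),
    AgentRole("solver", "Execute each step with a calculator", True, ["critic"]),
    AgentRole("critic", "Check the result and finalise", False, []),
]
skills = compile_roles(pipeline)
```

The inter-agent messages disappear; what remains is a single context in which each skill's output constraint keeps the original hand-off order intact.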

Scaling Limits

The skill scaling hypothesis draws from cognitive science: LLM skill selection parallels the capacity limits of human decision-making.

Experiments on libraries of 5–200 skills reveal stable accuracy below a threshold followed by a sharp phase transition drop (see the image below).

Degradation stems from semantic overlap among skills rather than library size alone.
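One way to watch for this kind of overlap is to score every pair of skill descriptors for similarity and flag the close ones. The sketch below uses word-level Jaccard similarity as a cheap stand-in; a real system would more likely compare embeddings. The function names, the example library and the 0.5 threshold are assumptions for illustration.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple

def tokens(text: str) -> Set[str]:
    return set(text.lower().split())

def jaccard(a: str, b: str) -> float:
    """Shared words divided by total distinct words across both descriptors."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0

def confusable_pairs(
    skills: Dict[str, str], threshold: float = 0.5
) -> List[Tuple[str, str, float]]:
    pairs = []
    for (n1, d1), (n2, d2) in combinations(skills.items(), 2):
        score = jaccard(d1, d2)
        if score >= threshold:
            pairs.append((n1, n2, round(score, 2)))
    return pairs

# Hypothetical library: two near-duplicate skills and one distinct skill.
library = {
    "summarise_report": "summarise a long report into key points",
    "summarise_article": "summarise a long article into key points",
    "solve_equation": "solve an algebraic equation step by step",
}
flagged = confusable_pairs(library)
```

Here only the two summarisation skills are flagged, which is the point: a library of three skills can already contain a confusable pair, while a much larger library of well-separated skills may not.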

Mitigation Approach (MAS-to-SAS compilation process)

Hierarchical routing organises skills into coarse categories (e.g. math or retrieval) before fine selection.

Boosts accuracy by 37–40% in large libraries.

Aligns with human chunking methods for handling complex choices.
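A two-stage router along these lines might look as follows. This is a toy sketch: keyword overlap stands in for the LLM's selection step, and `LIBRARY`, `CATEGORY_HINTS`, `overlap` and `route` are hypothetical names, with category labels borrowed from the math and retrieval examples above.

```python
from typing import Dict

def overlap(query: str, description: str) -> int:
    """Number of words the query shares with a description."""
    return len(set(query.lower().split()) & set(description.lower().split()))

# Hypothetical two-level library: category -> {skill name: descriptor}.
LIBRARY: Dict[str, Dict[str, str]] = {
    "math": {
        "solve_equation": "solve an algebraic equation",
        "compute_integral": "compute a definite integral",
    },
    "retrieval": {
        "search_docs": "search internal documents for a topic",
        "fetch_page": "fetch a web page by url",
    },
}
CATEGORY_HINTS: Dict[str, str] = {
    "math": "solve compute equation integral algebraic number",
    "retrieval": "search fetch find documents page url topic",
}

def route(query: str) -> str:
    # Stage 1: coarse choice among a handful of categories.
    category = max(CATEGORY_HINTS, key=lambda c: overlap(query, CATEGORY_HINTS[c]))
    # Stage 2: fine choice among only that category's skills.
    candidates = LIBRARY[category]
    skill = max(candidates, key=lambda s: overlap(query, candidates[s]))
    return f"{category}/{skill}"
```

Each decision is made over a short list, so the selector never has to compare all skills against each other at once.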

Anthropic’s Skills

Anthropic’s approach leverages large LLMs like Claude for superior reasoning that smaller models cannot match.

A single huge LLM provides introspective control and handles complex integrations via coding, internalising behaviours without multi-agent costs.

Skills act as a middle ground: semantic descriptors guide selection, execution policies define reasoning, and backends enable internal or external operations.

This universal agent excels in coding-heavy tasks, consuming zero context when idle.

Broader Implications for AI Agents

Large models work well in open-ended scenarios where flexibility matters, while small language models (SLMs) provide speed for narrow roles.

In multi-agent setups, large models boost breadth-first queries by 90%, but SLMs rival them in specialised tasks at lower cost.

Practitioners should measure task decomposability and baseline difficulty to select architectures.

For production, focus on verification loops, domain constraints, and graceful human handoffs to avoid loops or variance in long-horizon tasks.

Actionable Guidelines

Prototype with monoliths for speed.

Deploy workflows for reliability in structured processes.

Extend with skills for modular expertise, using hierarchy to scale.

Monitor semantic overlap in libraries; group by domain to prevent cliffs.

Test compilation viability early — serialisable tasks yield the biggest efficiency wins.

This evolution positions AI Agents for real-world impact, blending efficiency with robust scaling.

As I noted in a recent post, the field favours hybrids in 2025–2026, prioritising orchestration plus specialisation over pure forms.

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.

When Single-Agent with Skills Replace Multi-Agent Systems and When They Fail

Multi-agent AI systems have proven effective for complex reasoning. These systems are compounded by specialized agents… (arxiv.org)

COBUS GREYLING

Where AI Meets Language | Language Models, AI Agents, Agentic Applications, Development Frameworks & Data-Centric… (www.cobusgreyling.com)

Three AI Agent Architectures Have Emerged

AI Agents evolved from simple chatbots to autonomous systems capable of complex, goal-oriented tasks & several distinct… (cobusgreyling.medium.com)
