AI Agent Memory

A new study introduces ClawVM, a virtual memory layer for LLM agents

In Short

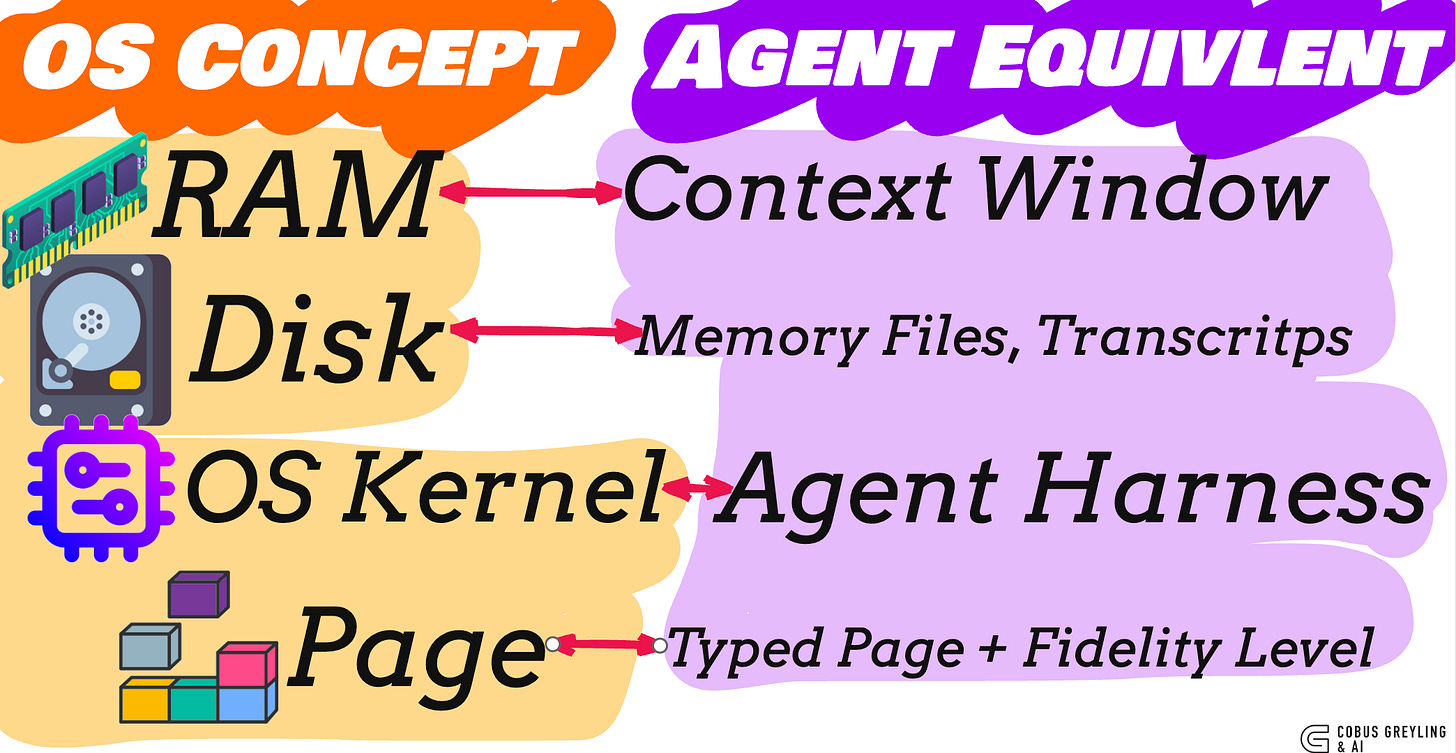

Context window is RAM.

Durable storage is disk.

The agent harness is an OS kernel that doesn’t know it’s an OS kernel.

ClawVM makes it act like one.

Zero policy-controllable faults. Less than 50 microseconds overhead per turn.

ClawVM: Harness-Managed Virtual Memory for Stateful Tool-Using LLM Agents

What's happening

We are rapidly introducing unbounded complexity into LLM agents:

Long-running sessions, multi-step plans, tool calls, preferences and real-world consequences.

Yet our memory systems remain primitive.

A fragile context window that forgets, and scattered transcripts that offer no real traceability.

ClawVM wants to change that.

It gives agents what operating systems gave computers in the 1970s:

Context Window = RAM (fast, volatile)

Memory Files & Transcripts = Disk (durable, structured)

Agent Harness = OS Kernel (the invisible enforcer of rules, guarantees, and lifecycle)

The result is zero policy-controllable faults, deterministic degradation under pressure, and full provenance — all with <50 microseconds of overhead per turn.

In the age of autonomous agents, owning your harness is no longer optional.

It is the difference between fragile prototypes and trustworthy systems.

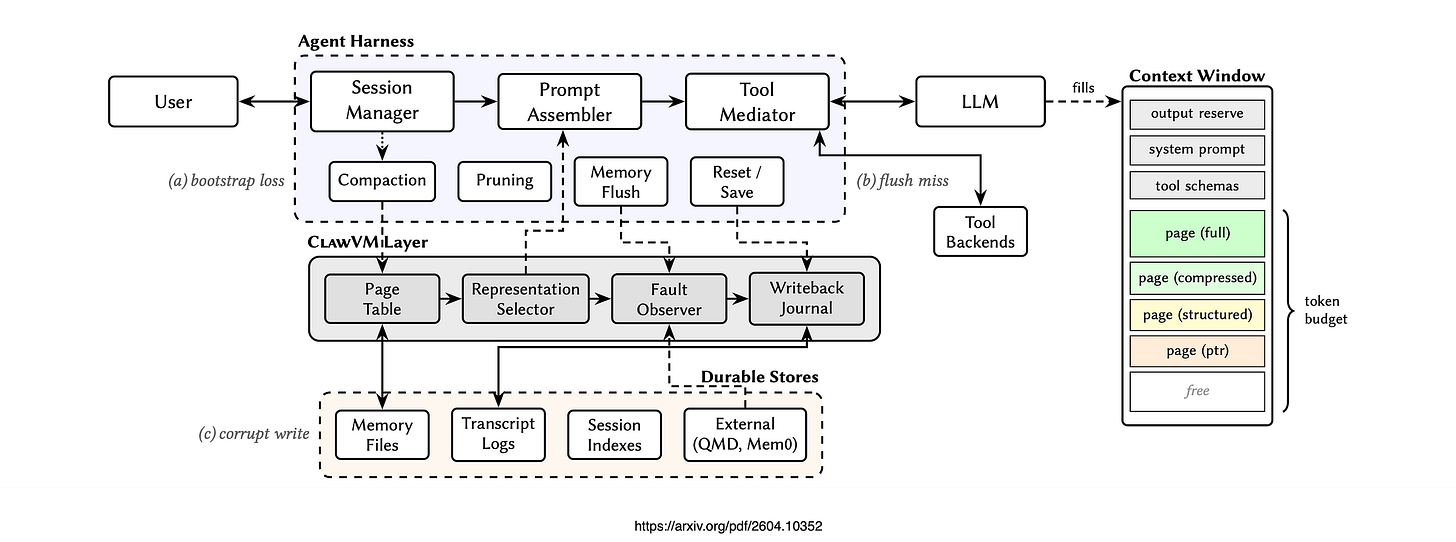

The image above shows the architecture of a stateful tool-using agent with the ClawVM layer (shaded). The agent harness manages sessions, assembles prompts, mediates tools, and emits lifecycle events (compaction, pruning, flush, reset).

ClawVM interposes at the harness level: the page table and representation selector feed prompt assembly, the fault observer instruments paging and lifecycle behaviour, and the write-back journal enforces validated, non-destructive persistence at all lifecycle boundaries.

The Problem

You’re 40 turns into a session.

The agent has your preferences loaded, a multi-step plan in progress, results from a dozen tool calls.

Then compaction runs.

The summary keeps the high-level goal but drops the plan’s current step, your routing rules, and the evidence that conflicts were already resolved.

The Fix

Operating systems solved this in the 1970s.

The harness already assembles prompts, mediates tools, and observes lifecycle events. It is the enforcement point. ClawVM makes it enforce.

How It Works

Typed pages: all agent state becomes pages, each with an identity, a scope (session or project), a provenance (which tool call produced it), and a minimum-fidelity invariant.

Six types:

Bootstrap (system instructions),

Constraint (hard rules),

Plan (goal + step),

Preference (user settings),

Evidence (tool outputs),

Conversation (transcript spans).

Four fidelity levels: full, compressed, structured, pointer.

Under budget pressure, pages degrade along this chain instead of being summarised or dropped.

Constraints never drop below structured.

Evidence pointers must remain resolvable.

All representations are pre-computed at page creation — not generated under pressure.
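To make the page model concrete, here is a minimal sketch in Python. It is not the paper's implementation: the `Page` and `Fidelity` names, the field names, and the `degrade` method are assumptions made for illustration. It shows a page that carries all four pre-computed representations and refuses to degrade below its minimum fidelity.

```python
from dataclasses import dataclass
from enum import IntEnum


class Fidelity(IntEnum):
    # Ordered from cheapest to richest, mirroring the chain described above.
    POINTER = 0
    STRUCTURED = 1
    COMPRESSED = 2
    FULL = 3


@dataclass
class Page:
    # All names here are hypothetical, chosen for this sketch.
    page_id: str
    page_type: str          # "bootstrap", "constraint", "plan", ...
    scope: str              # "session" or "project"
    provenance: str         # e.g. the id of the tool call that produced it
    representations: dict   # Fidelity -> text, all built at creation time
    min_fidelity: Fidelity = Fidelity.POINTER
    current: Fidelity = Fidelity.FULL

    def degrade(self) -> bool:
        """Step one level down the chain; refuse to cross the floor."""
        if self.current <= self.min_fidelity:
            return False
        self.current = Fidelity(self.current - 1)
        return True

    def render(self) -> str:
        # A lookup, not an LLM call: the text was prepared up front.
        return self.representations[self.current]


rule = Page(
    page_id="c1",
    page_type="constraint",
    scope="project",
    provenance="user:turn-3",
    representations={
        Fidelity.FULL: "Never email customers without approval from the owner.",
        Fidelity.COMPRESSED: "No customer email without owner approval.",
        Fidelity.STRUCTURED: "{rule: no_customer_email, unless: owner_approval}",
        Fidelity.POINTER: "constraint://c1",
    },
    min_fidelity=Fidelity.STRUCTURED,  # constraints never drop below structured
)
```

Calling `rule.degrade()` twice takes the page from full to compressed to structured. A third call returns `False` and leaves the page at structured, which is the invariant doing its job.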

Validated writeback: at every lifecycle boundary (compaction, reset, session end), the harness commits dirty state through a three-phase transaction:

stage typed updates,

validate against schema and provenance,

commit via merge rules.

Destructive overwrites are rejected with reason codes.
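The three phases can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `Update` type, the reason-code strings, the append-only merge rule, and the choice to commit valid updates individually are mine, not the paper's.

```python
from dataclasses import dataclass


@dataclass
class Update:
    key: str
    value: str
    provenance: str  # which tool call or turn produced this update


def write_back(store: dict, dirty: list) -> list:
    """Stage, validate, commit. Returns (key, reason_code) for each rejection."""
    staged = list(dirty)                      # phase 1: stage typed updates
    accepted, rejected = [], []
    for u in staged:                          # phase 2: validate
        if not u.provenance:
            rejected.append((u.key, "MISSING_PROVENANCE"))
        elif not u.value:
            # An empty value would wipe whatever is already stored.
            reason = "DESTRUCTIVE_OVERWRITE" if u.key in store else "EMPTY_VALUE"
            rejected.append((u.key, reason))
        else:
            accepted.append(u)
    for u in accepted:                        # phase 3: commit via a merge rule
        # Hypothetical merge rule: append to the key's history, never replace.
        store.setdefault(u.key, []).append(u.value)
    return rejected


store = {"plan.step": ["3"]}
rejected = write_back(store, [
    Update("plan.step", "4", "tool:42"),
    Update("plan.step", "", "tool:43"),
    Update("pref.tz", "UTC", ""),
])
```

After this call the store holds both plan steps, and `rejected` names the two updates that were refused along with why. A real harness would likely validate against a per-type schema rather than a simple empty-value check.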

Prompt assembly is a knapsack problem.

Phase 1 installs all hard-pinned pages.

Phase 2 upgrades remaining pages (pointer to structured to compressed to full) by marginal utility per token until the budget is spent.
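Here is a rough Python sketch of the two phases as a greedy pass. It rests on several assumptions that do not come from the paper: pinned pages are installed at full fidelity, every other page starts as a pointer, and each page carries hypothetical `tokens` and `utility` lists indexed by fidelity level.

```python
LEVELS = ["pointer", "structured", "compressed", "full"]
FULL = len(LEVELS) - 1


def assemble(pages, budget):
    """Return {page_id: level_name} for a selection that fits in `budget` tokens."""
    level = {}
    used = 0
    # Phase 1: pinned pages go in at full fidelity (an assumption of this
    # sketch); every other page starts out as a pointer.
    for p in pages:
        level[p["id"]] = FULL if p["pinned"] else 0
        used += p["tokens"][level[p["id"]]]
    if used > budget:
        raise ValueError("pinned pages and pointers exceed the budget")

    # Phase 2: repeatedly take the one-step upgrade with the best
    # marginal utility per extra token that still fits.
    while True:
        best = None
        for p in pages:
            cur = level[p["id"]]
            if cur == FULL:
                continue
            extra = p["tokens"][cur + 1] - p["tokens"][cur]
            if used + extra > budget:
                continue
            gain = p["utility"][cur + 1] - p["utility"][cur]
            ratio = gain / max(extra, 1)
            if best is None or ratio > best[0]:
                best = (ratio, p, extra)
        if best is None:
            break
        _, p, extra = best
        level[p["id"]] += 1
        used += extra
    return {pid: LEVELS[i] for pid, i in level.items()}


pages = [
    {"id": "rules", "pinned": True, "tokens": [5, 20, 40, 80], "utility": [0, 0, 0, 0]},
    {"id": "plan", "pinned": False, "tokens": [5, 20, 40, 80], "utility": [1, 8, 10, 11]},
    {"id": "log", "pinned": False, "tokens": [5, 30, 60, 200], "utility": [1, 3, 4, 5]},
]
result = assemble(pages, budget=150)
```

With a budget of 150 tokens, `rules` stays at full, `plan` climbs to compressed, and `log` reaches structured. The greedy pass is a heuristic for the knapsack, not an optimal solver, but it is deterministic and cheap, which is the property that matters here.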

Putting it into practice

If you’re building or extending an agent harness…

Your context window is a memory tier.

Every prompt assembly decision (what to include, at what fidelity, what to drop) is a page replacement decision.

Design eviction policies, not summarisation heuristics.

Pre-compute representations at ingest time.

When budget pressure hits, the decision should be a table lookup, not an LLM call.

Generate full, compressed, structured and pointer variants when a page is first created.

Degrade deterministically.

Commit dirty state at every lifecycle boundary.

Compaction, reset, session end: if the runtime is about to destroy the only copy of state, write it back first.

This is the single gap that no amount of retrieval quality can close.

Make state loss observable.

Emit reason codes.

If you can’t tell why state is missing, you can’t fix the policy that lost it.
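A minimal sketch of what observable state loss could look like follows. The `FaultObserver` class, its fields, and the reason-code strings are hypothetical names for illustration, not an API from the paper.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class FaultObserver:
    # Each event records which page was affected, at which lifecycle
    # boundary, and a machine-readable reason code.
    events: list = field(default_factory=list)

    def record(self, page_id: str, boundary: str, reason: str) -> None:
        self.events.append({"page": page_id, "boundary": boundary, "reason": reason})

    def by_reason(self) -> Counter:
        """Tally events per reason code so a lossy policy stands out."""
        return Counter(e["reason"] for e in self.events)


observer = FaultObserver()
observer.record("plan-1", "compaction", "DROPPED_BY_SUMMARY")
observer.record("pref-7", "compaction", "DROPPED_BY_SUMMARY")
observer.record("evidence-3", "reset", "POINTER_UNRESOLVABLE")
summary = observer.by_reason()
```

Here `summary["DROPPED_BY_SUMMARY"]` is 2, which points straight at the compaction policy as the thing to fix.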

The context window is RAM.

The harness is the kernel.

Chief AI Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. From Language Models, AI Agents to Agentic Applications, Development Frameworks & Data-Centric Productivity Tools, I share insights and ideas on how these technologies are shaping the future.

ClawVM: Harness-Managed Virtual Memory for Stateful Tool-Using LLM Agents

Stateful tool-using LLM agents treat the context window as working memory, yet today’s agent harnesses manage residency… (arxiv.org)
