Context Engineering 2.0

How can machines better understand our situations and purposes?

All of a sudden, it seems like everyone is talking about context in general.

And context engineering in particular.

And it’s being hailed as some kind of breakthrough in AI development...

Yet this emphasis on context isn’t new — it’s a refinement of principles that have shaped computing for decades.

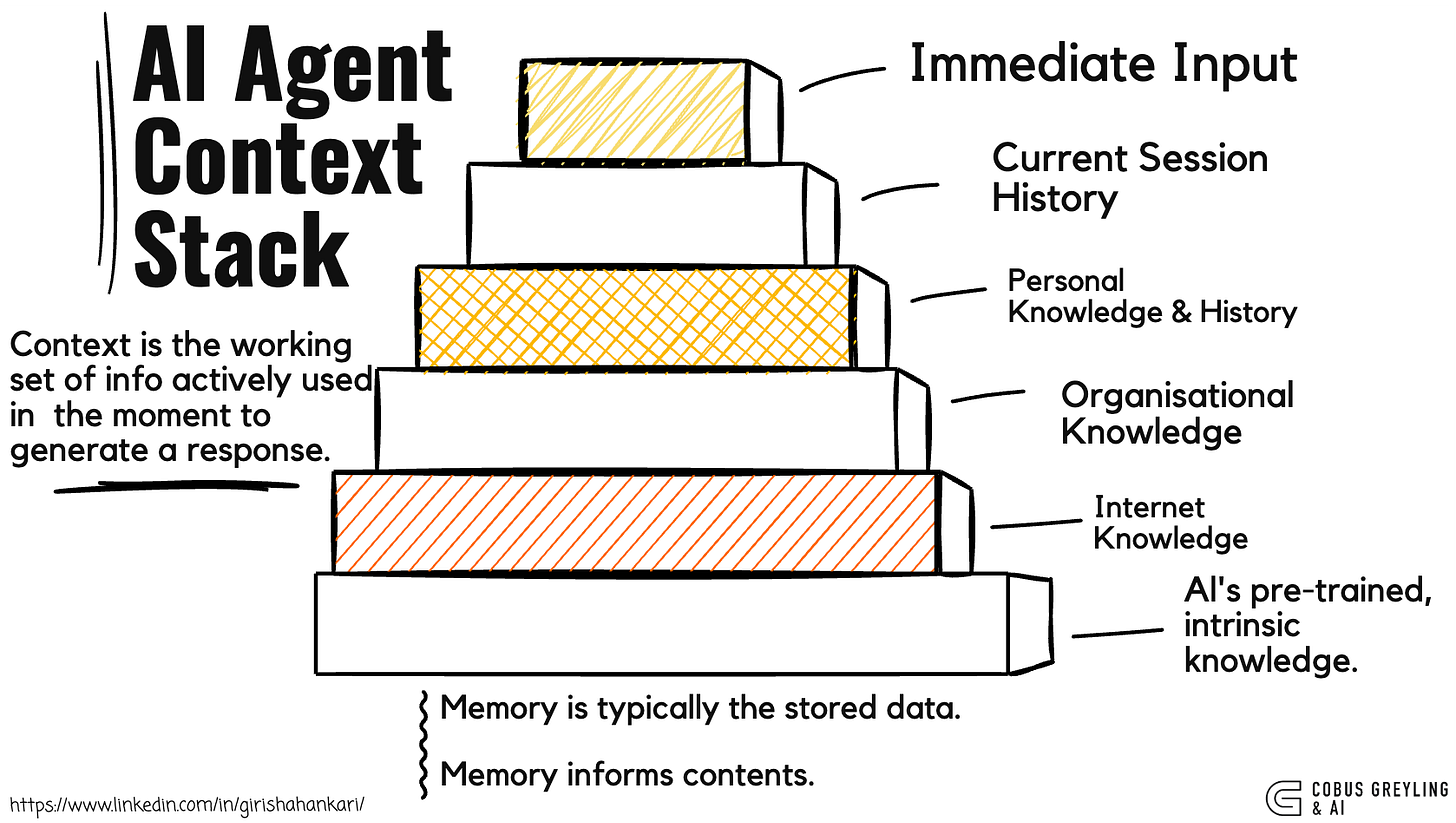

At its core, context emerges from memory: data that is stored and then selectively retrieved to frame understanding.

The challenge lies in pulling and piecing together the precise fragments of memory that sharpen the contextual picture.


Those fragments, in turn, directly fuel intelligence or, from a human perspective, perceived intelligence.

By the way, we as humans excel at this intuitively.

Without context, systems falter in the face of ambiguity.

In conversation, we compress information by leaning on shared history, tone and surroundings to control entropy — the uncertainty in a message.

A glance or a pause conveys volumes (something some refer to as face speed), allowing listeners to infer the unsaid.
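To make “controlling entropy” concrete, here is a minimal Python sketch based on Shannon entropy, H(X) = −Σ p(x) log₂ p(x), measured in bits. The scenario, the four candidate places and every probability are invented purely for illustration; the only point is that shared context concentrates probability on fewer interpretations, which lowers the uncertainty a listener has to resolve.

```python
import math


def entropy(dist):
    """Shannon entropy H(X) = -sum_x p(x) * log2 p(x), in bits."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


# Hypothetical: a listener hears "Let's grab the usual" and must work out
# which of four places the speaker means. All probabilities are made up.
no_context = {"cafe": 0.25, "pub": 0.25, "diner": 0.25, "bakery": 0.25}

# Same message, but the listener knows it is morning and the two usually
# meet at the cafe, so shared history concentrates the probability mass.
with_context = {"cafe": 0.85, "pub": 0.05, "diner": 0.05, "bakery": 0.05}

h_without = entropy(no_context)
h_with = entropy(with_context)
print(f"without context: {h_without:.2f} bits")  # 2.00 bits
print(f"with context:    {h_with:.2f} bits")     # 0.85 bits
```

Dropping from 2 bits to roughly 0.85 bits means far less is left to guess. In information-theoretic terms, conditioning on context cannot increase expected uncertainty: H(X | C) ≤ H(X).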

Machines, by contrast, demand explicit inputs.

They process words literally, blind to subtext unless fed the right scaffolding.

This gap underscores why context matters as a discipline: it bridges raw data and meaning, turning mechanical responses into something closer to comprehension.

Though often tied to chatbots’ prompt histories or agent tools, context spans far wider.

It’s been engineered for over two decades, accelerating with mobile tech in the early 2000s.

With the advent of mobile, all data was suddenly underpinned by location.

That alone added a contextual dimension to data.

Social layers added to this: followers, networks and timelines, each contributing more contextual density.

These contextual signals allowed mobile devices to anticipate user needs, from route suggestions to news feeds and more.

A recent study on memory retrieval underscored this link, spotlighting how context and recall intertwine.

It catalogs triggers like location, identity, activity, time, environment and device state.

These are everyday anchors that humans use without thinking.
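Machines only benefit from these anchors once someone captures them and states them explicitly. The sketch below assumes a hypothetical `ContextSnapshot` type whose fields simply mirror the six triggers named in the study, plus a hypothetical `to_prompt_prefix` method that renders whatever readings are available as plain text that could be prepended to a model prompt. Neither comes from the study or from any particular library.

```python
from dataclasses import asdict, dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ContextSnapshot:
    """Hypothetical record of the six context triggers; any field may be unknown."""
    location: Optional[str] = None
    identity: Optional[str] = None
    activity: Optional[str] = None
    time: Optional[datetime] = None
    environment: Optional[str] = None
    device_state: Optional[str] = None

    def to_prompt_prefix(self) -> str:
        """Spell out, as explicit text, context a human would infer without thinking."""
        lines = []
        for name, value in asdict(self).items():
            if value is None:
                continue  # no reading for this trigger, so say nothing about it
            if isinstance(value, datetime):
                value = value.strftime("%Y-%m-%d %H:%M")
            lines.append(f"{name.replace('_', ' ')}: {value}")
        return "Context:\n" + "\n".join(lines)


snapshot = ContextSnapshot(
    location="train station",
    activity="commuting",
    time=datetime(2025, 3, 14, 8, 5),
    device_state="low battery",
)
prefix = snapshot.to_prompt_prefix()
print(prefix)
```

Skipping unknown fields is a deliberate choice here: stating only what is actually known avoids feeding the model invented context.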

In machines, memory serves as the vault for this data, not just archiving but reactivating it to decode unstructured inputs.

Stored as structured records, it lets systems parse intent in unstructured settings.
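A toy version of this vault might look like the following. It is a minimal sketch under simple assumptions: the `MemoryRecord` and `MemoryStore` names are hypothetical, context is a flat dictionary of string fields, and relevance is just the number of context fields that match the current situation. Production systems often use embedding similarity, recency and other signals instead.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    """Hypothetical structured memory entry: what happened, plus the context around it."""
    text: str
    context: dict[str, str] = field(default_factory=dict)


class MemoryStore:
    """Toy vault that archives records and reactivates those matching the current context."""

    def __init__(self) -> None:
        self.records: list[MemoryRecord] = []

    def add(self, text: str, **context: str) -> None:
        self.records.append(MemoryRecord(text, dict(context)))

    @staticmethod
    def _score(record: MemoryRecord, current: dict[str, str]) -> int:
        # Count the context fields whose stored value equals the current value.
        return sum(1 for key, value in current.items() if record.context.get(key) == value)

    def retrieve(self, current: dict[str, str], k: int = 3) -> list[MemoryRecord]:
        """Return up to k records with at least one matching field, best match first."""
        scored = [(self._score(rec, current), rec) for rec in self.records]
        scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep insertion order
        return [rec for score, rec in scored[:k] if score > 0]


memory = MemoryStore()
memory.add("Ordered a flat white", location="office", time_of_day="morning")
memory.add("Ordered a pint of stout", location="pub", time_of_day="evening")
memory.add("Asked for the quarterly sales deck", location="office", activity="meeting")

# The unstructured request "the usual, please" is ambiguous on its own;
# the current context decides which memory gets reactivated.
hits = memory.retrieve({"location": "office", "time_of_day": "morning"}, k=1)
print([hit.text for hit in hits])
```

Given only the vague request “the usual, please”, the office-and-morning context reactivates the matching record, and that record is what lets the system resolve what “the usual” means.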

The timing feels urgent because machine cognition is accelerating.

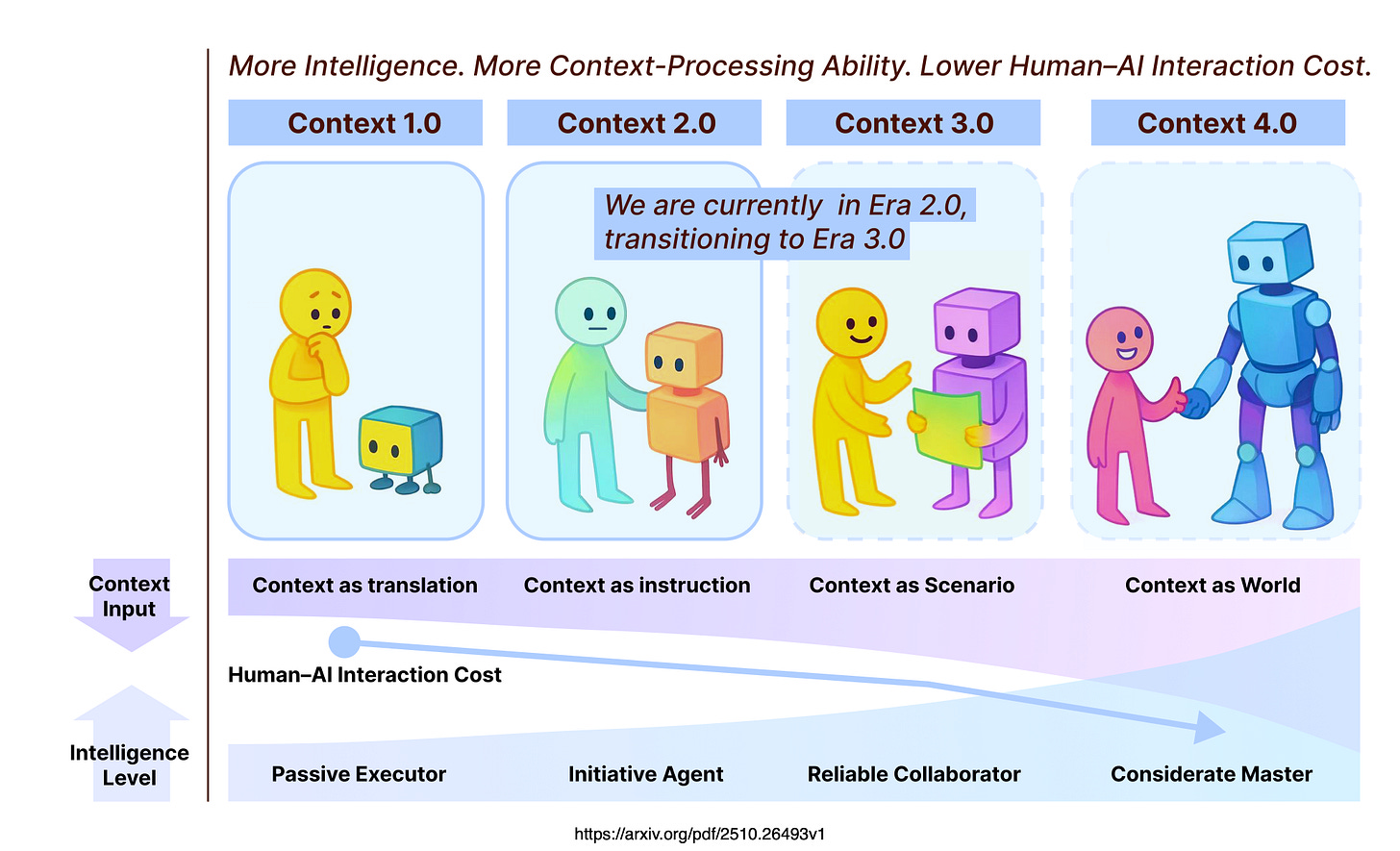

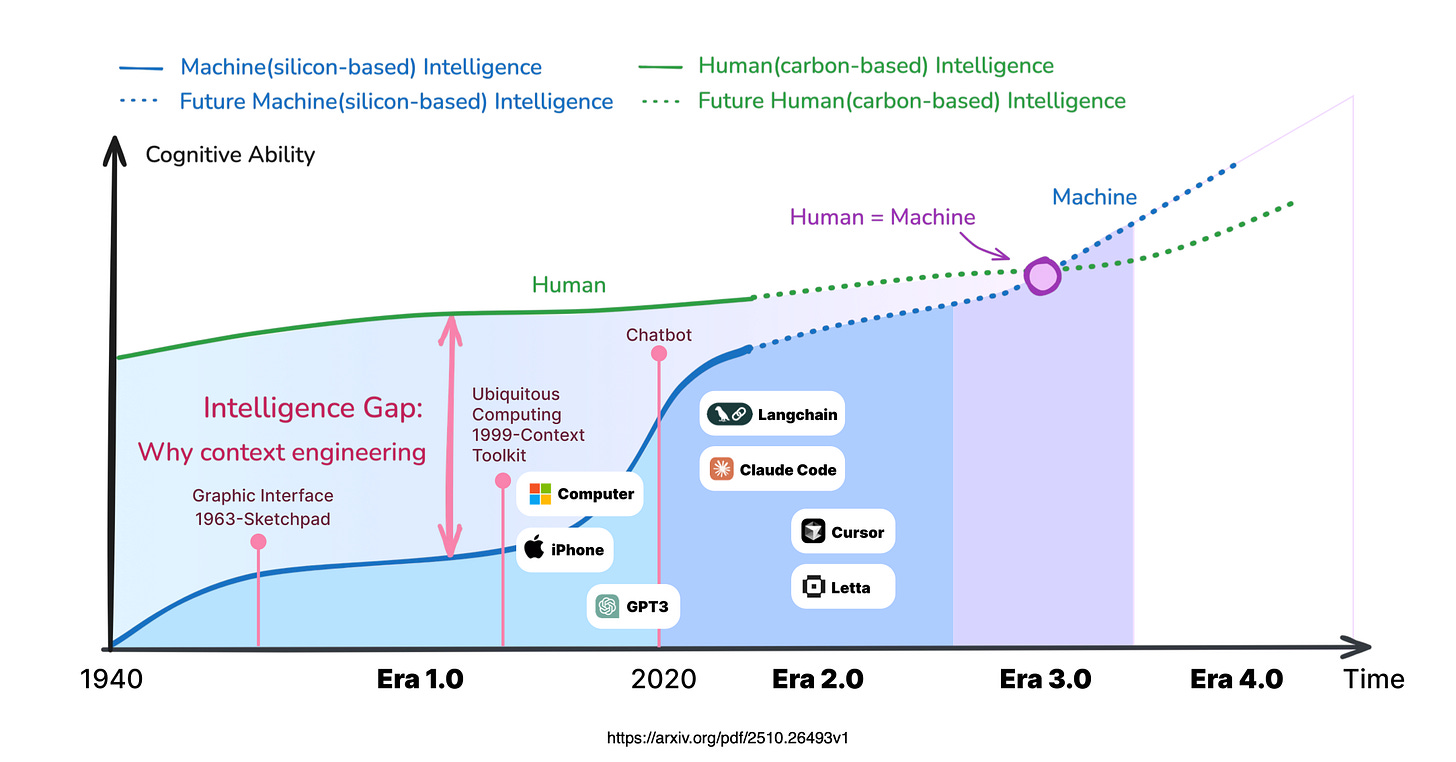

A framework from the study maps this progression across four eras, charting how context unlocks deeper capabilities.

In Era 1.0, Primitive Computation (the 1990s to 2020), machines managed narrow, predefined inputs. Think dropdown menus or basic sensors.

Interactions hinged on exact contexts that humans had already translated into machine-readable form, with no room for interpretation. Intelligence stayed surface-level, confined to scripted paths.

Era 2.0, Agent-Centric Intelligence (unfolding since 2020), marks the shift away from scripted paths toward systems that can interpret natural language and infer intent.

Looking ahead, Era 3.0, Human-Level Intelligence, promises parity with the way humans perceive and use context.

Era 4.0, Superhuman Intelligence, remains speculative but transformative.

This trajectory hinges on mastering memory’s retrieval — the art of surfacing the right context at the right moment.

As systems climb the eras, intelligence doesn’t just grow; it compounds, turning isolated data points into foresight.

The excitement around context engineering reflects this momentum: it’s not hype, but the lever pulling AI forward.

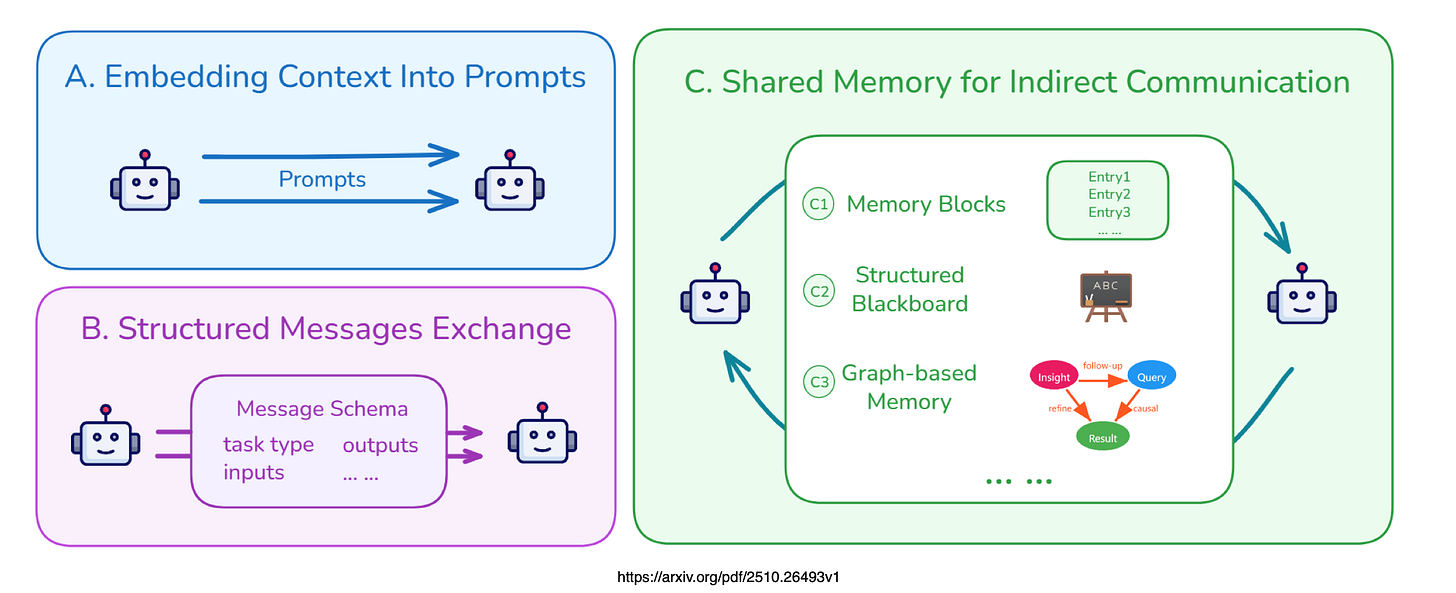

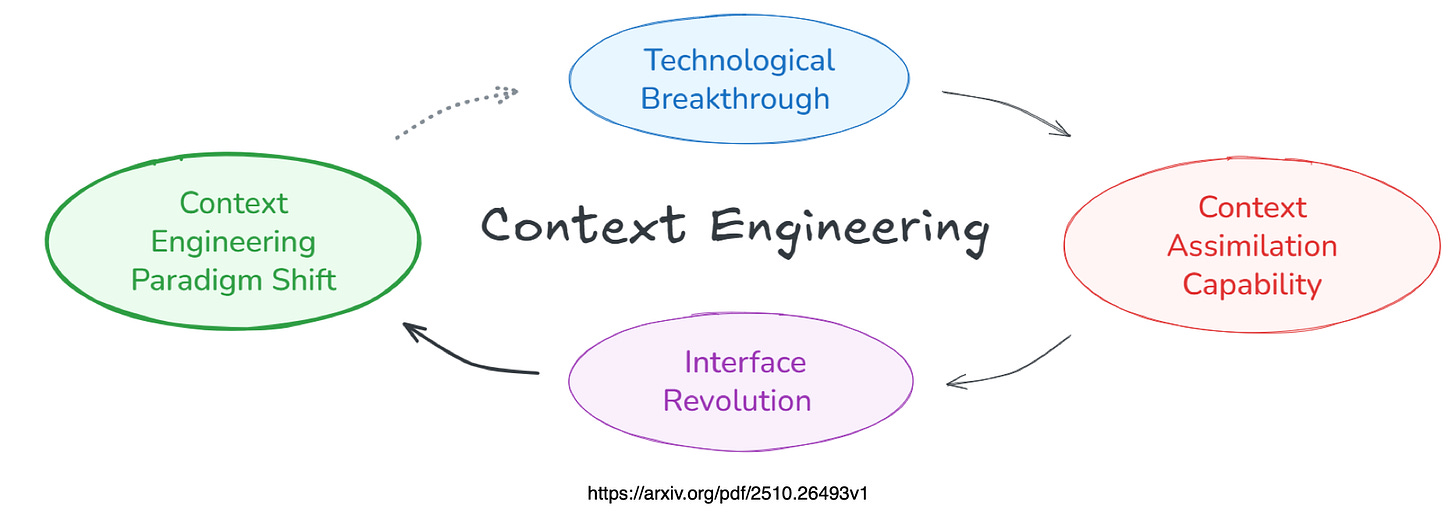

Lastly, the final image illustrates a cycle: technological breakthroughs increase the capacity to assimilate context.

Interfaces evolve in response, and this in turn brings about paradigm shifts in context engineering.

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.