Developer Pain Points In Building AI Agents

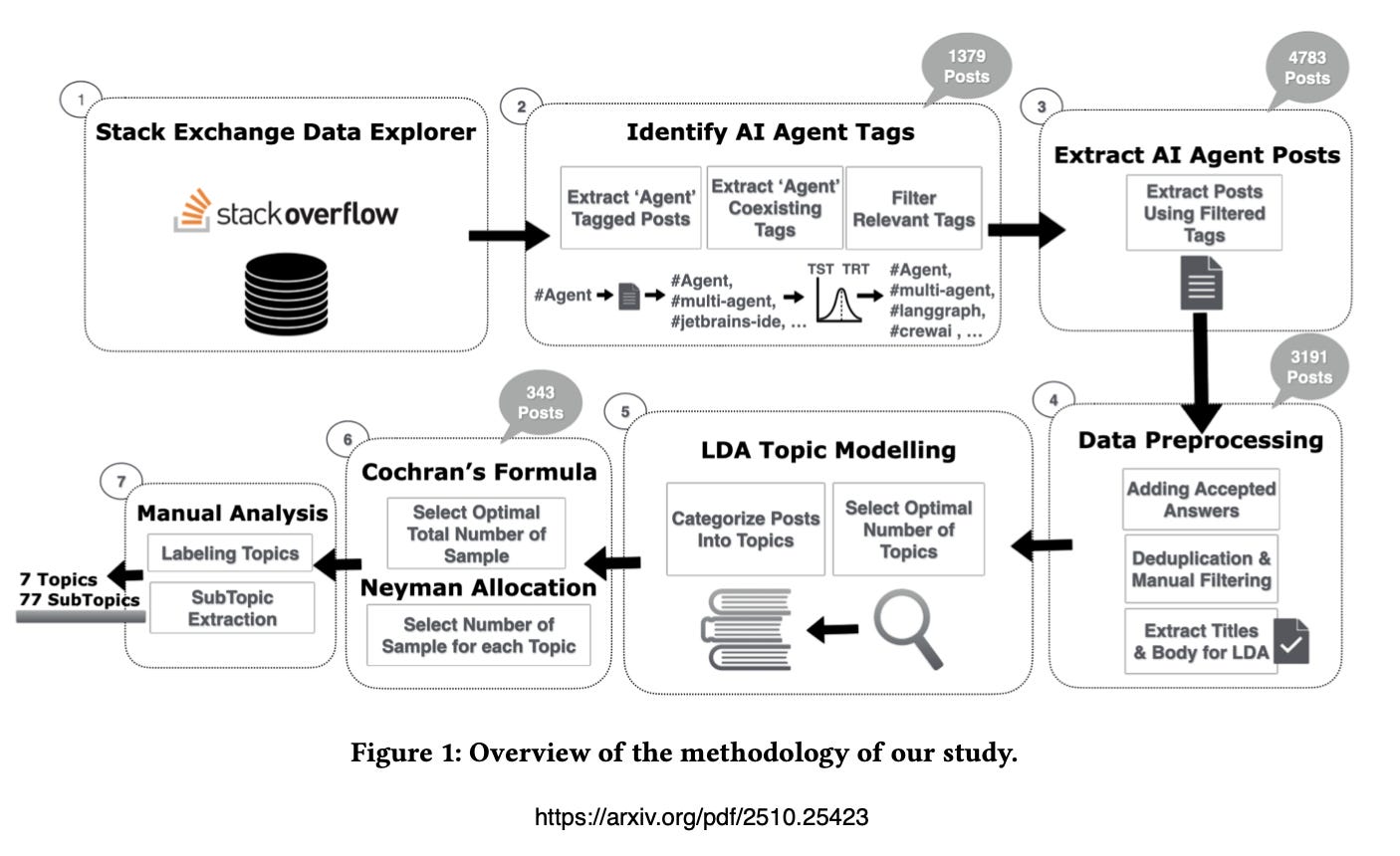

A study drew on 3,191 unique Stack Overflow questions & accepted answers about building AI Agents to understand the realities of developing and implementing them.

OK, I loved reading this study, because it really paints a picture of the current situation we find ourselves in…

Developers face persistent & often under-explored challenges when building, deploying & maintaining these emerging systems.

Two things are really fuelling the AI Agent / Agentic AI hype cycle…

The first is marketing and AI influencers, all while Sales is trying to figure out how to package and communicate all of this to prospects.

And then there is the research, most of which is currently being performed not by academic institutions but by commercial entities. This in itself is not a problem, as companies like Salesforce have made significant contributions to the open-source community. But it is a factor to keep in mind, as a form of influence on the market and on general perception.

In a recent interview, Andrej Karpathy spoke about how easy it is to create very impressive demos in a short period of time… but the leap to production is significant.

Then you have the implementation part, which can be described as the dark underbelly of the AI Agent world, one that is not receiving the attention it should.

Things like orchestration, AI Agent control planes and the “everything just works” sales pitch end up in the lap of those who need to make it work.

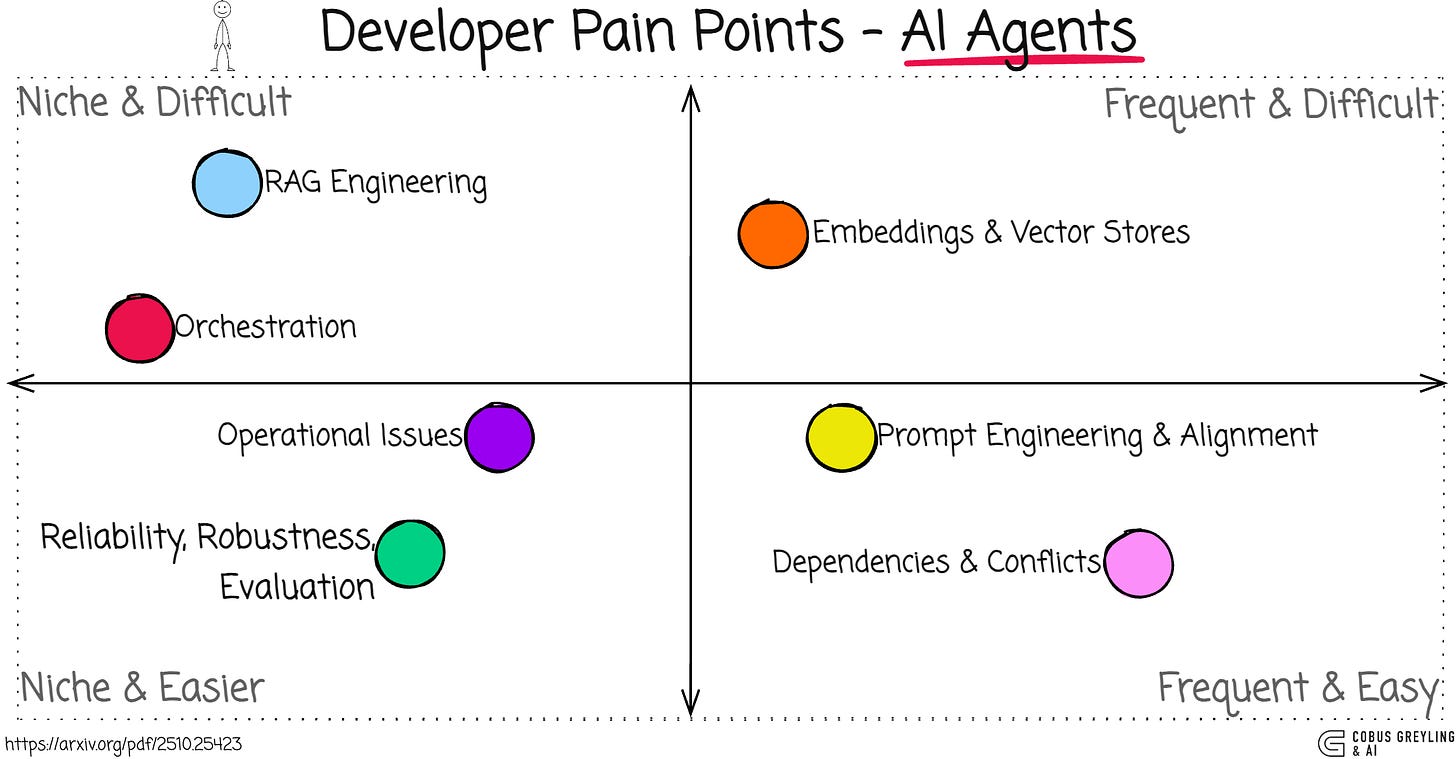

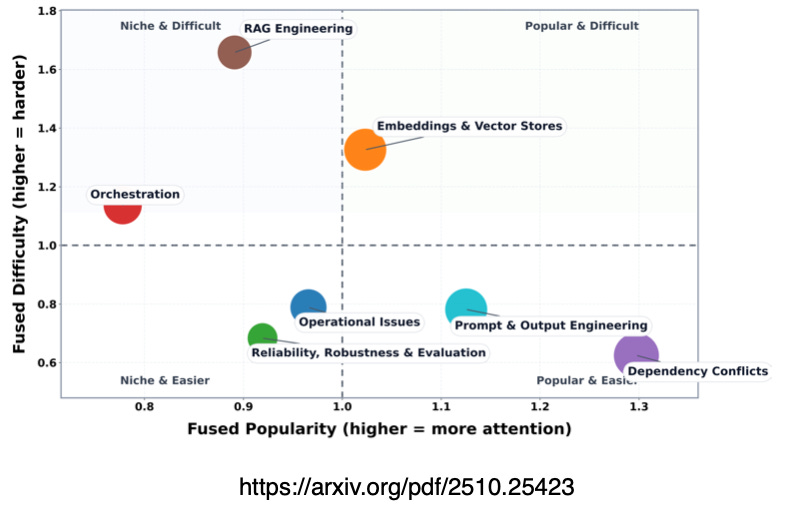

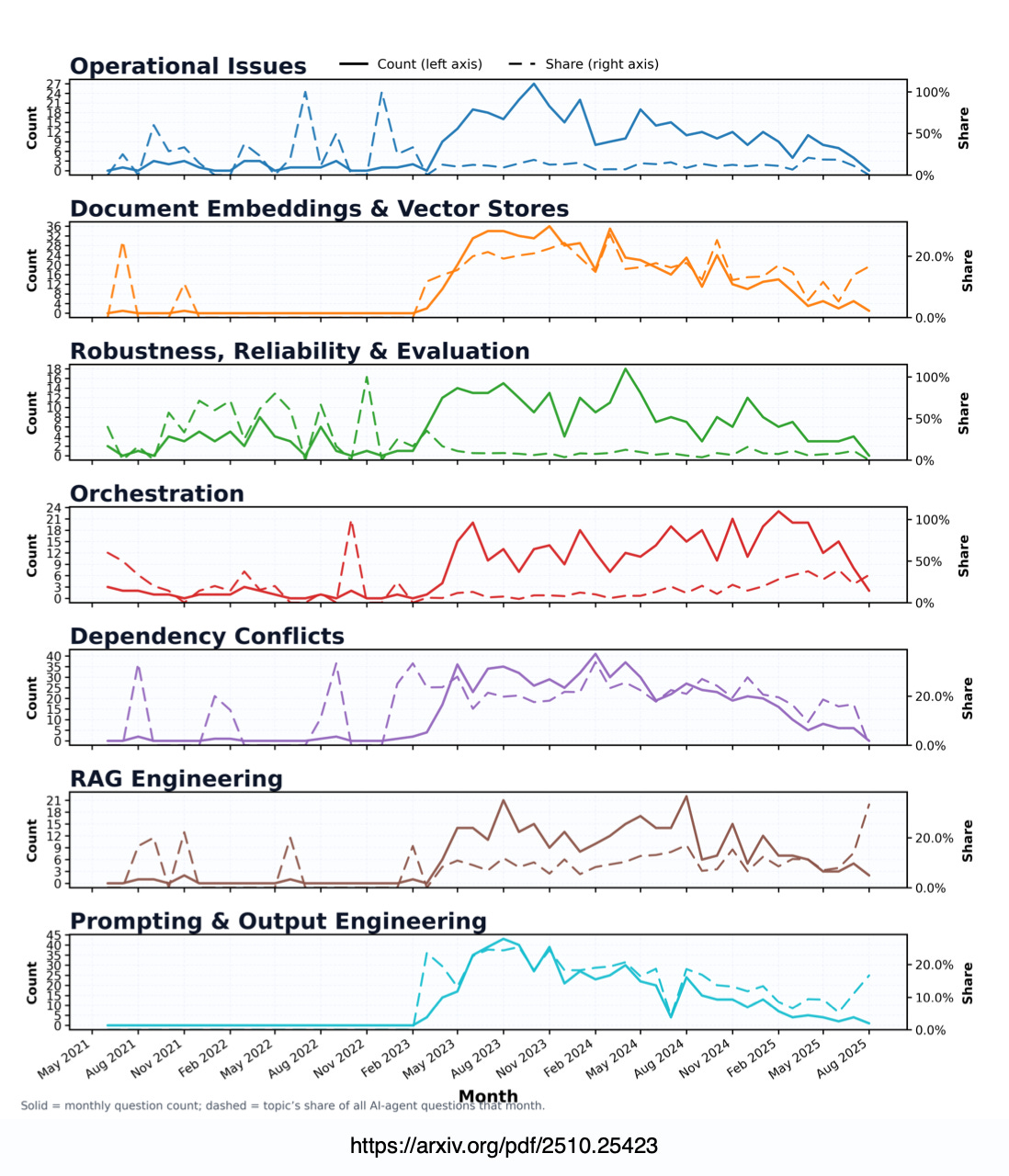

Looking again at the quadrants, it is clear that the most frequently used technologies are embeddings and vector stores.

This has become a common and widely used approach to context engineering, and it falls under the common and hard problems.
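To make the embeddings-plus-vector-store pattern concrete, here is a minimal sketch. It assumes embeddings have already been computed; the toy 3-dimensional vectors and the `InMemoryVectorStore` class are hypothetical stand-ins for a real embedding model and vector database.

```python
import math
from dataclasses import dataclass, field


@dataclass
class InMemoryVectorStore:
    """Hypothetical toy store: keeps (id, vector, text) tuples and ranks them by cosine similarity."""
    items: list = field(default_factory=list)

    def add(self, doc_id: str, vector: list[float], text: str) -> None:
        self.items.append((doc_id, vector, text))

    @staticmethod
    def cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def search(self, query: list[float], k: int = 2) -> list[tuple[str, float, str]]:
        # Linear scan: score every stored vector against the query, keep the top k.
        scored = [(doc_id, self.cosine(query, vec), text) for doc_id, vec, text in self.items]
        return sorted(scored, key=lambda hit: hit[1], reverse=True)[:k]


# Toy 3-d vectors stand in for real embedding-model output.
store = InMemoryVectorStore()
store.add("refunds", [0.9, 0.1, 0.0], "Refunds are processed within 5 days.")
store.add("shipping", [0.1, 0.9, 0.0], "We ship worldwide.")
store.add("returns", [0.8, 0.2, 0.1], "Items can be returned within 30 days.")

hits = store.search([1.0, 0.0, 0.0], k=2)
# The retrieved texts are what would be injected into the prompt as context.
context = "\n".join(text for _, _, text in hits)
print([doc_id for doc_id, _, _ in hits])  # ['refunds', 'returns']
```

A production vector store would typically use an approximate nearest-neighbour index rather than this linear scan, which is part of why the area counts as hard once data volumes grow.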

The common and easier problems are prompt engineering and alignment, an area where there has already been work on evaluation flywheel architectures.
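Loosely, an evaluation flywheel is a loop: run the agent against a test set, score the outputs, and feed the failures back into the next iteration. A minimal sketch, assuming a hypothetical `fake_agent` in place of a model call and a simple exact-match scorer in place of whatever rubric a real team would use:

```python
from typing import Callable


def run_eval(agent: Callable[[str], str], cases: list[tuple[str, str]]) -> tuple[float, list[tuple[str, str, str]]]:
    """Score an agent on (input, expected) pairs; return accuracy and the failing cases."""
    failures = []
    for prompt, expected in cases:
        output = agent(prompt)
        # Exact match is a placeholder; real evaluations often use rubrics or LLM judges.
        if output.strip().lower() != expected.strip().lower():
            failures.append((prompt, expected, output))
    accuracy = 1 - len(failures) / len(cases) if cases else 0.0
    return accuracy, failures


def fake_agent(prompt: str) -> str:
    # Hypothetical stand-in for a model call.
    answers = {"capital of France?": "Paris", "2 + 2?": "4"}
    return answers.get(prompt, "I don't know")


cases = [("capital of France?", "Paris"), ("2 + 2?", "4"), ("capital of Peru?", "Lima")]
accuracy, failures = run_eval(fake_agent, cases)
print(f"accuracy={accuracy:.2f}")  # accuracy=0.67
for prompt, expected, got in failures:
    # Each failure feeds the next turn of the flywheel:
    # a prompt fix, a new tool, or a permanent regression test.
    print(f"FAIL {prompt!r}: expected {expected!r}, got {got!r}")
```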

Dependencies and version conflicts are a foundational challenge: simply installing frameworks and software.
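One low-tech defence against this kind of churn is to check installed versions against the versions a project was tested with and fail fast at startup. A sketch using the standard library's `importlib.metadata`; the package names and versions in `PINS` are hypothetical, exact string equality is deliberately crude, and real projects usually lean on a lock file and their package manager's resolver instead.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Callable


def check_pins(pins: dict[str, str], get_version: Callable[[str], str] = version) -> list[str]:
    """Compare installed versions with exact pins; return human-readable problems."""
    problems = []
    for package, wanted in pins.items():
        try:
            installed = get_version(package)
        except PackageNotFoundError:
            problems.append(f"{package}: not installed (want {wanted})")
            continue
        if installed != wanted:
            problems.append(f"{package}: installed {installed}, want {wanted}")
    return problems


# Hypothetical pins for an agent project; replace with your own tested versions.
PINS = {"example-agent-framework": "0.2.1", "example-vector-client": "1.4.0"}

if __name__ == "__main__":
    for problem in check_pins(PINS):
        print("dependency problem:", problem)
```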

Among operational challenges, the top one is Tool-Use Coordination Policies (23%): configuring when and how agents invoke tools, including disabling parallel tool use or sequencing it to avoid conflicts.
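A minimal sketch of what such a policy could look like: tools in a disabled set are blocked outright, tools flagged as conflicting share one lock so they run in sequence, and everything else runs in parallel. The `ToolPolicy` shape and the tool names are hypothetical; frameworks and model APIs express this differently (some expose a switch for turning off parallel tool calls entirely).

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical policy: which tools are disabled and which must never run concurrently."""
    disabled: set[str] = field(default_factory=set)
    serialized: set[str] = field(default_factory=set)  # these share one lock, so they take turns


async def run_tool(name: str, arg: str) -> str:
    # Stand-in for a real tool call (API request, file write, CRM update, ...).
    await asyncio.sleep(0.01)
    return f"{name}({arg})"


async def execute_calls(calls: list[tuple[str, str]], policy: ToolPolicy) -> list[str]:
    lock = asyncio.Lock()

    async def guarded(name: str, arg: str) -> str:
        if name in policy.disabled:
            return f"{name}: blocked by policy"
        if name in policy.serialized:
            async with lock:  # conflicting tools run one at a time
                return await run_tool(name, arg)
        return await run_tool(name, arg)  # safe tools run in parallel

    # gather returns results in the order the calls were requested.
    return await asyncio.gather(*(guarded(n, a) for n, a in calls))


policy = ToolPolicy(disabled={"delete_file"}, serialized={"write_file", "update_crm"})
calls = [("search_web", "agents"), ("write_file", "notes.md"),
         ("update_crm", "lead-42"), ("delete_file", "notes.md")]
results = asyncio.run(execute_calls(calls, policy))
print(results)
# ['search_web(agents)', 'write_file(notes.md)', 'update_crm(lead-42)', 'delete_file: blocked by policy']
```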

Suffice it to say, the details matter, and they trip up developers…

Just a word on Stack Overflow…

Before 2021, Stack Overflow peaked at 500k+ monthly questions; the reality is that from 202 onwards, the figure is apparently closer to 14k to 20k per month.

The shift is because of AI.

There have been mentions of toxicity on Stack Overflow hurting new users; AI removes that impediment, with novice developers now asking AI instead.

But I think SO has the same niche value as Reddit: human-made content that relates to what is happening in the real world.

In other words, it is not content created for the sake of content, if that makes sense.

So, based on the study analysing 3,191 Stack Overflow posts (from 2021–2025), developers encounter a diverse set of issues when building, deploying and maintaining AI Agents.

The research identified seven major challenge areas…

Operations (Runtime & Integration)

Document Embeddings & Vector Stores

Robustness, Reliability & Evaluation

Orchestration

Installation & Dependency Conflicts

RAG Engineering

Prompt & Output Engineering

These reflect real-world pain points such as integration hurdles, framework instability and evaluation gaps.

The most prevalent challenges highlight where developers spend the most time asking questions.

Installation & Dependency Conflicts tops the list at 21% — a frequent but often resolvable issue tied to rapid ecosystem churn.

Rapid ecosystem churn stunts development

In contrast, harder-to-solve problems like RAG Engineering (10%) and Orchestration (13%) persist despite lower volume, pointing to complexity that remains under-supported.

Orchestration in AI Agent systems refers to the control plane that coordinates an AI Agent’s capabilities into reliable, end-to-end workflows:

Perception,

Memory,

Planning and

Action.

In essence, it is the set of processes that:

Decompose tasks,

Route them,

Synchronise execution,

Manage state across channels and

Invoke tools.

This applies to both single-agent pipelines and multi-agent setups.
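These processes can be sketched as a tiny orchestrator: a planner decomposes a task into sub-tasks, a router picks a handler for each, handlers are invoked in order, and results accumulate in shared state. Everything here is hypothetical: the " then " splitter and keyword router stand in for LLM-driven planning, and the lambdas stand in for real tools. A handler could just as well be another agent, which is roughly how the same loop stretches to multi-agent setups.

```python
from typing import Callable

Handler = Callable[[str, dict], str]


def decompose(task: str) -> list[str]:
    # Hypothetical planner: split on " then ". A real agent would ask an LLM to plan.
    return [step.strip() for step in task.split(" then ")]


def route(subtask: str, handlers: dict[str, Handler]) -> str:
    # Pick the first handler whose keyword appears in the sub-task; fall back to "general".
    for keyword in handlers:
        if keyword in subtask:
            return keyword
    return "general"


def orchestrate(task: str, handlers: dict[str, Handler]) -> dict:
    state: dict = {"task": task, "results": []}  # shared state carried across steps
    for subtask in decompose(task):
        name = route(subtask, handlers)
        output = handlers[name](subtask, state)  # invoke the tool or sub-agent
        state["results"].append((name, output))  # each later step can see earlier results
    return state


handlers: dict[str, Handler] = {
    "search": lambda s, st: f"found 3 papers for '{s}'",
    "summarise": lambda s, st: f"summary of {len(st['results'])} earlier result(s)",
    "general": lambda s, st: f"handled '{s}' directly",
}

final = orchestrate("search agent papers then summarise findings then email team", handlers)
for name, output in final["results"]:
    print(f"{name}: {output}")
```

Running the steps strictly in sequence is the simplest way to synchronise them; the cost is that independent steps never overlap.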

I can imagine orchestration is tricky… AI Agents aren’t linear scripts; they’re dynamic graphs, often with parallel tool calls and multi-agent interactions (in an Agentic Workflow).
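Treating a workflow as a graph suggests one common execution strategy: topologically sort the steps and run every step whose dependencies are satisfied in parallel. A sketch using the standard library's `graphlib.TopologicalSorter` (Python 3.9+) with asyncio; the step names and the fixed graph are hypothetical, whereas in a real agent the graph is often decided by the model at runtime.

```python
import asyncio
from graphlib import TopologicalSorter

# Hypothetical workflow: each step maps to the set of steps it depends on.
GRAPH = {
    "plan": set(),
    "search_docs": {"plan"},
    "query_db": {"plan"},  # independent of search_docs, so the two can run in parallel
    "draft_answer": {"search_docs", "query_db"},
    "review": {"draft_answer"},
}


async def run_step(name: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for an LLM or tool call
    return name


async def run_graph(graph: dict[str, set[str]]) -> list[list[str]]:
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the steps form a cycle
    waves: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all steps whose dependencies have finished
        finished = await asyncio.gather(*(run_step(name) for name in ready))
        waves.append(list(finished))
        sorter.done(*finished)
    return waves


print(asyncio.run(run_graph(GRAPH)))
# [['plan'], ['query_db', 'search_docs'], ['draft_answer'], ['review']]
```

This runs the graph in "waves", waiting for a whole wave before starting the next; a finer-grained scheduler would start each dependent step as soon as its own inputs are done.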

Lastly, the study also notes that developers face significant challenges in RAG engineering for AI agents.

These include ingesting and processing diverse documents such as PDFs and images, and scaling for concurrency and throughput.
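Two of those concerns, splitting documents into retrievable chunks and processing many documents concurrently without overwhelming downstream services, can be sketched briefly. Assumptions: documents are already extracted to plain text (PDF parsing and OCR are out of scope), `embed_chunk` is a hypothetical stand-in for an embedding API call, and the chunk size, overlap and concurrency limit are illustrative values only.

```python
import asyncio


def chunk_text(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` characters."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    if not text:
        return []
    step = size - overlap
    # Stop once the remaining text is already covered by the previous window's overlap.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


async def embed_chunk(chunk: str) -> list[float]:
    # Hypothetical embedding call; returns a fake 2-d vector instead of a real embedding.
    await asyncio.sleep(0.01)
    return [float(len(chunk)), float(chunk.count(" "))]


async def ingest(docs: dict[str, str], max_concurrency: int = 4) -> dict[str, list[tuple[str, list[float]]]]:
    semaphore = asyncio.Semaphore(max_concurrency)  # cap in-flight calls (rate limits, memory)

    async def embed_limited(chunk: str) -> list[float]:
        async with semaphore:
            return await embed_chunk(chunk)

    async def ingest_doc(doc_id: str, text: str) -> tuple[str, list[tuple[str, list[float]]]]:
        chunks = chunk_text(text)
        vectors = await asyncio.gather(*(embed_limited(c) for c in chunks))
        return doc_id, list(zip(chunks, vectors))

    # All documents are ingested concurrently; the semaphore bounds total parallelism.
    pairs = await asyncio.gather(*(ingest_doc(d, t) for d, t in docs.items()))
    return dict(pairs)


docs = {"handbook": "word " * 120, "faq": "short answer"}
index = asyncio.run(ingest(docs))
print({doc_id: len(chunks) for doc_id, chunks in index.items()})  # {'handbook': 4, 'faq': 1}
```

The overlap keeps a sentence that straddles a chunk boundary retrievable from either side; the semaphore is what turns "scale for throughput" into "scale without tripping the embedding provider's rate limits".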

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.

What Challenges Do Developers Face in AI Agent Systems? An Empirical Study on Stack Overflow (arxiv.org)