Developer Practices When Developing AI Agents

There is a gap between what is being sold and what developers are experiencing and implementing on the ground…

If there is something that we have learned over the last couple of months, it is that there is a disconnect between AI Agent / Agentic hype and what is being experienced on the ground.

The MIT report taught us all a new term, the AI Shadow Economy…which can be best described as the true AI economy in terms of use, preference and pervasiveness.

But an economy which is not reported on, and often does not align with the official narrative and official AI tools supplied by organisations.

This is something a16z recently alluded to: attribution. How do you attribute AI success, cost savings and more, accurately?

Workers are using AI, they know what good AI looks like. Just their own AI, not the tools supplied via official avenues.

Canaries in the Coal Mine is also an interesting study, where the impact of AI on the ground was gauged. Early and weak signals, but indicators nonetheless.

Another example of research trying to get close to the coalface, in an attempt to glean what is really happening, looked at thousands of developer queries and questions on Stack Overflow…

Yes, I know, you are thinking…are devs still using SO? The usage has dropped, but they still do use it.

And what was interesting is what the study mapped out in terms of developer struggles. The top developer pain points included dealing with platform churn and changes, and also dealing with dependencies and the conflicts that arise when installing them.

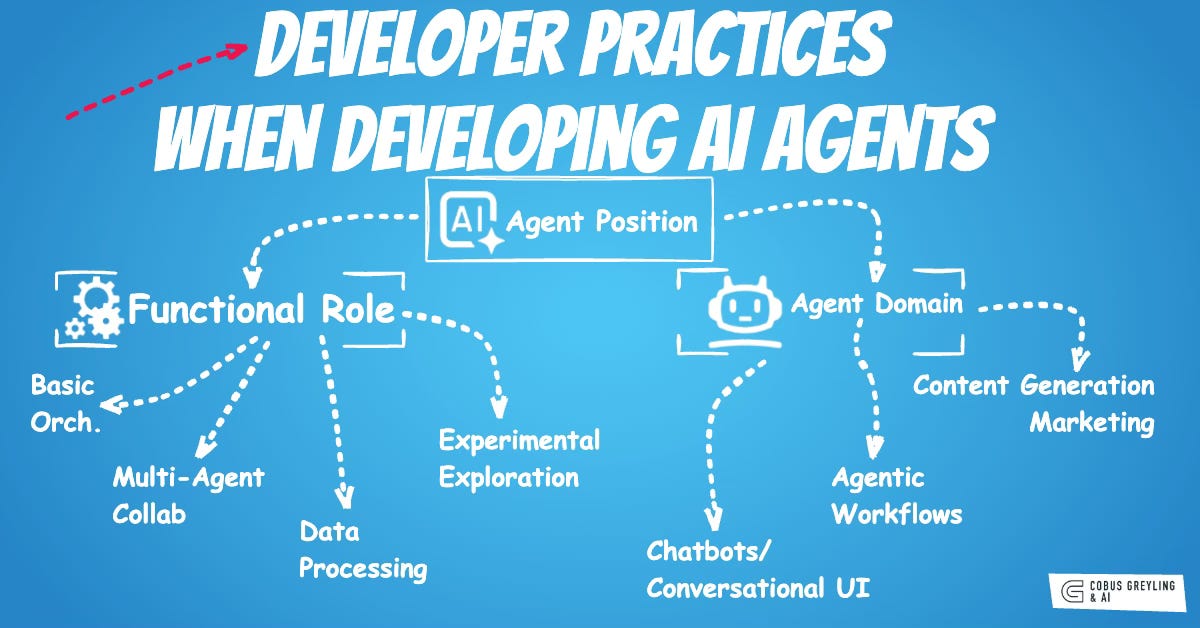

So, this latest study looked at 13 key agent developer practices.

The practices are derived from the analysis of 1,575 GitHub repositories and 8,710+ developer discussions, focusing on adoption and utilisation trends.

The practices encompass functional roles, usage patterns and lessons from challenges, highlighting how developers effectively adopt, combine, and optimise LLM-based agent frameworks like LangChain, AutoGen, and others.

Prioritising Ecosystem Robustness

Developers prioritise agent frameworks with robust ecosystems, characterised by comprehensive documentation, active community forums and a wide array of integrations and extensions.

The study shows that frameworks like LangChain, with over 6,000 discussions, benefit from this robustness, enabling easier discovery of solutions to common issues.

The points below are also good indicators of what framework suppliers need to focus on in order to improve developer adoption…

Emphasising Long-Term Maintenance Activity

Rather than chasing short-term hype measured by GitHub stars, developers evaluate frameworks based on consistent update frequency, rapid issue resolution and sustained contributor engagement.

Analysis of the 10 top frameworks shows that actively maintained ones like AutoGen and LangGraph exhibit lower abandonment rates, with regular patches addressing challenges like version conflicts (affecting 23% of projects).

Assessing Practical Adoption in Production Projects

Developers scrutinise a framework’s real-world deployment by examining dependency graphs, case studies, and “used by” counts across diverse domains like finance and software development.

The study’s co-occurrence analysis indicates that frameworks proven in production, such as LlamaIndex for RAG applications, outperform hype-driven ones, helping avoid pitfalls like untested scalability. This practice aligns framework selection with specific project needs, fostering reliable agent deployment.

Combining Multiple Complementary Frameworks

The dominant usage pattern is integrating multiple frameworks to leverage their strengths, such as pairing LangChain’s orchestration with AutoGen’s multi-agent dialogues and LlamaIndex’s data retrieval.

From 8,710 discussions, over 60% of projects employ hybrid setups, mitigating single-framework limitations like poor state management.

Developers orchestrate these via dependency injection, enabling modular agent architectures that enhance flexibility across SDLC stages.
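To make the dependency injection idea concrete, here is a minimal sketch in plain Python. It does not use the real LangChain, AutoGen or LlamaIndex APIs; `Agent`, `fake_retriever` and `fake_llm` are hypothetical stand-ins I made up to show how an agent can receive its retrieval and generation components from outside, so either one can be swapped for a different framework.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical interfaces; real frameworks would sit behind these callables.
Retriever = Callable[[str], List[str]]  # e.g. a retrieval layer
Generator = Callable[[str], str]        # e.g. an LLM call


@dataclass
class Agent:
    """An agent that receives its collaborators instead of constructing them."""
    retrieve: Retriever
    generate: Generator

    def answer(self, question: str) -> str:
        context = " | ".join(self.retrieve(question))
        prompt = f"Context: {context}\nQuestion: {question}"
        return self.generate(prompt)


def fake_retriever(query: str) -> List[str]:
    # Stub: a real project might delegate to a retrieval framework here.
    return ["Agents keep state in a shared store."]


def fake_llm(prompt: str) -> str:
    # Stub: echoes the first line of the prompt instead of calling a model.
    return "ANSWER based on -> " + prompt.splitlines()[0]


# The components are injected at construction time.
agent = Agent(retrieve=fake_retriever, generate=fake_llm)
result = agent.answer("How is state managed?")
print(result)
```

Because the agent only depends on two callables, replacing the retriever or the model is a one-line change at construction time, which is roughly what a hybrid setup needs.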

Orchestrating Components with Sequential Chains

Developers use LangChain’s modular chains to simplify LLM integration, sequencing prompts, models and tools for basic agent workflows like chatbots.

This practice accelerates prototyping by abstracting repetitive boilerplate, as seen in 40% of discussions, but requires careful loop handling to avoid logic failures (25.6% of challenges).

It lowers the entry barrier for beginners while supporting rapid iteration in implementation phases.
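The idea of a sequential chain, and the loop handling it requires, can be sketched in a few lines of plain Python. This is not LangChain's API; `run_chain`, `run_until_done` and the stub steps are hypothetical names, and the model call is faked. The point is the shape: each step feeds the next, and any repeated step gets a hard iteration cap.

```python
from typing import Callable, List

Step = Callable[[str], str]


def run_chain(steps: List[Step], text: str) -> str:
    """Run each step in order, feeding each output into the next step."""
    for step in steps:
        text = step(text)
    return text


def run_until_done(step: Step, text: str, is_done: Callable[[str], bool],
                   max_iterations: int = 5) -> str:
    """Repeat a step until is_done is true, with a hard cap on iterations."""
    for _ in range(max_iterations):
        if is_done(text):
            return text
        text = step(text)
    raise RuntimeError(f"No result after {max_iterations} iterations")


def build_prompt(question: str) -> str:
    return f"Answer briefly: {question}"


def fake_model(prompt: str) -> str:
    # Stub standing in for an LLM call.
    return f"  [model output for '{prompt}']  "


def tidy(output: str) -> str:
    return output.strip()


reply = run_chain([build_prompt, fake_model, tidy], "What is an agent?")
print(reply)
```

The cap in `run_until_done` is the design choice worth copying: a loop that depends on model output to terminate should fail loudly rather than spin forever.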

Enabling Multi-Agent Collaboration via Conversational Paradigms

Developers implement AutoGen’s conversation-as-computation to simulate collaborative problem-solving among agents through dynamic dialogues.

This practice excels in task decomposition and delegation for rapid prototyping, and is used in 20% of multi-agent projects.

It fosters emergent behaviours but demands monitoring for performance bottlenecks like context loss (25% of challenges).
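A rough sketch of the conversational pattern, in plain Python rather than AutoGen's actual API, might look like this. `ChatAgent`, `converse` and the two reply functions are hypothetical, and the replies are hard-coded stubs instead of LLM calls. Two agents take turns over a shared history until a stop word appears or a turn limit is hit.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker name, content)


@dataclass
class ChatAgent:
    name: str
    respond: Callable[[List[Message]], str]  # stub in place of an LLM call


def converse(a: ChatAgent, b: ChatAgent, opening: str,
             max_turns: int = 6, stop_word: str = "DONE") -> List[Message]:
    """Alternate replies between two agents until stop_word or max_turns."""
    history: List[Message] = [(a.name, opening)]
    speaker = b
    for _ in range(max_turns):
        reply = speaker.respond(history)
        history.append((speaker.name, reply))
        if stop_word in reply:
            break
        speaker = a if speaker is b else b
    return history


def planner_reply(history: List[Message]) -> str:
    # Delegates subtasks until the worker has reported back twice.
    reports = sum(1 for name, _ in history if name == "worker")
    if reports >= 2:
        return "DONE: all subtasks complete"
    return f"Please do subtask {reports + 1}"


def worker_reply(history: List[Message]) -> str:
    # Acknowledges whatever the last message asked for.
    return f"Finished: {history[-1][1]}"


planner = ChatAgent("planner", planner_reply)
worker = ChatAgent("worker", worker_reply)
history = converse(planner, worker, "Please do subtask 1")
for name, content in history:
    print(f"{name}: {content}")
```

The `max_turns` limit also bounds how long the shared history can grow, which is one simple way to keep an eye on the context problems mentioned above.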

Augmenting LLMs with Retrieval-Augmented Generation

Developers integrate LlamaIndex to connect agents with external knowledge bases via RAG, grounding responses in retrieved data for accuracy in data-heavy tasks like recruitment.

This practice mitigates hallucination risks and supports memory management, addressing performance challenges (25% of challenges).

High discussion volume (1,691 threads) underscores its role in data processing, with emphasis on efficient indexing for optimisation.
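To show what grounding a response in retrieved data means, here is a toy sketch. It is not LlamaIndex's API: the documents are invented, and retrieval is a simple word-overlap score standing in for a real vector index. `retrieve` and `build_grounded_prompt` are hypothetical helper names.

```python
import re
from typing import List, Set

# Invented example documents for a recruitment-style query.
DOCUMENTS = [
    "Candidate A has five years of Python experience.",
    "Candidate B specialises in frontend design.",
    "The role requires strong Python skills.",
]


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Rank documents by word overlap with the query (a stand-in for embeddings)."""
    query_tokens = tokenize(query)
    ranked = sorted(documents,
                    key=lambda doc: len(query_tokens & tokenize(doc)),
                    reverse=True)
    return ranked[:top_k]


def build_grounded_prompt(query: str, passages: List[str]) -> str:
    """Place the retrieved passages in the prompt so the model answers from them."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


query = "Who has Python experience?"
top_passages = retrieve(query, DOCUMENTS)
prompt = build_grounded_prompt(query, top_passages)
print(prompt)
```

In a real setup the overlap score would be replaced by an index lookup, which is where the emphasis on efficient indexing comes in.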

Implementing Self-Improving Task Loops (BabyAGI)

For single-agent autonomy, developers deploy BabyAGI’s iterative loop for task generation, prioritisation, and execution, enabling adaptive planning.

This experimental practice is ideal for exploratory domains like game development, fostering self-improvement without multi-agent overhead.

Findings highlight its simplicity but warn of performance inefficiencies, recommending hybrid use with optimisation-focused frameworks.
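The loop described above (execute a task, generate new tasks, reprioritise, repeat) can be sketched as follows. This is my own simplified illustration, not BabyAGI's code; `run_task_loop` and the stub functions are hypothetical, and where the real thing would call an LLM, the stubs return fixed values.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple


def run_task_loop(objective: str, first_task: str,
                  execute: Callable[[str, str], str],
                  create_tasks: Callable[[str, str, str], List[str]],
                  prioritise: Callable[[List[str]], List[str]],
                  max_iterations: int = 10) -> List[Tuple[str, str]]:
    """Execute tasks one by one, adding and reordering follow-up tasks."""
    tasks: Deque[str] = deque([first_task])
    completed: List[Tuple[str, str]] = []
    for _ in range(max_iterations):  # hard cap so the loop always ends
        if not tasks:
            break
        task = tasks.popleft()
        result = execute(objective, task)
        completed.append((task, result))
        new_tasks = create_tasks(objective, task, result)
        tasks = deque(prioritise(list(tasks) + new_tasks))
    return completed


def execute(objective: str, task: str) -> str:
    return f"result of {task}"  # stub for an LLM execution call


def create_tasks(objective: str, task: str, result: str) -> List[str]:
    # Stub: only the planning task spawns follow-up tasks.
    follow_ups = {"plan level": ["design enemies", "build map"]}
    return follow_ups.get(task, [])


def prioritise(tasks: List[str]) -> List[str]:
    return sorted(tasks)  # alphabetical order as a stand-in for LLM ranking


completed = run_task_loop("make a small game", "plan level",
                          execute, create_tasks, prioritise)
for task, result in completed:
    print(task, "->", result)
```

Every pass through the loop would normally cost several model calls, which gives a feel for where the performance inefficiencies come from.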

Leveraging Modular Plugins for Enterprise Integration

Developers adopt Semantic Kernel’s plugin-based architecture to embed agents into enterprise ecosystems, prioritising extensibility and interoperability.

This practice enhances maintainability for production systems, reducing update costs amid version challenges. The study shows its strength in software engineering domains, with recommendations for semantic memory plugins to handle dialogue history loss.
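A plugin-based design can be illustrated with a tiny registry. To be clear, this is not Semantic Kernel's API; `PluginRegistry` and the `remember` / `recall` plugins are hypothetical, and the "memory" is just a Python list. It only shows the shape: capabilities are registered by name and the agent core calls them without knowing how they are implemented.

```python
from typing import Callable, Dict, List


class PluginRegistry:
    """Maps plugin names to callables so capabilities can be added or swapped."""

    def __init__(self) -> None:
        self._plugins: Dict[str, Callable[..., str]] = {}

    def register(self, name: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
        def decorator(func: Callable[..., str]) -> Callable[..., str]:
            self._plugins[name] = func
            return func
        return decorator

    def call(self, name: str, **kwargs: str) -> str:
        if name not in self._plugins:
            raise KeyError(f"Unknown plugin: {name}")
        return self._plugins[name](**kwargs)

    def names(self) -> List[str]:
        return sorted(self._plugins)


registry = PluginRegistry()
dialogue_history: List[str] = []  # toy memory store kept outside the agent core


@registry.register("remember")
def remember(text: str) -> str:
    dialogue_history.append(text)
    return f"stored {len(dialogue_history)} item(s)"


@registry.register("recall")
def recall() -> str:
    return " / ".join(dialogue_history)


registry.call("remember", text="User prefers email")
recalled = registry.call("recall")
print(registry.names(), recalled)
```

Keeping dialogue history behind a plugin like this means the storage can later be replaced by something more durable without touching the rest of the agent.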

These practices provide actionable guidance for agent developers, framework designers, and stakeholders, directly informed by the study’s taxonomy of challenges and five-dimensional evaluation.

They emphasise hybrid, ecosystem-driven approaches to overcome SDLC pain points like logic failures and performance issues.

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.

Developer Pain Points In Building AI Agents

A dataset of 3,191 unique questions & accepted answers on building AI Agents from Stack Overflow was used to understand… (cobusgreyling.medium.com)

The Shadow AI Economy

The biggest revelation from the recent MIT report on business adoption of AI is that there is a massive failure in… (cobusgreyling.medium.com)

Canaries In The AI Coal Mine

What are some early signs of AI’s impact on the workplace? (cobusgreyling.medium.com)

An Empirical Study of Agent Developer Practices in AI Agent Frameworks

The rise of large language models (LLMs) has sparked a surge of interest in agents, leading to the rapid growth of… (arxiv.org)

COBUS GREYLING

Where AI Meets Language | Language Models, AI Agents, Agentic Applications, Development Frameworks & Data-Centric… (www.cobusgreyling.com)