Five Levels Of AI Agents

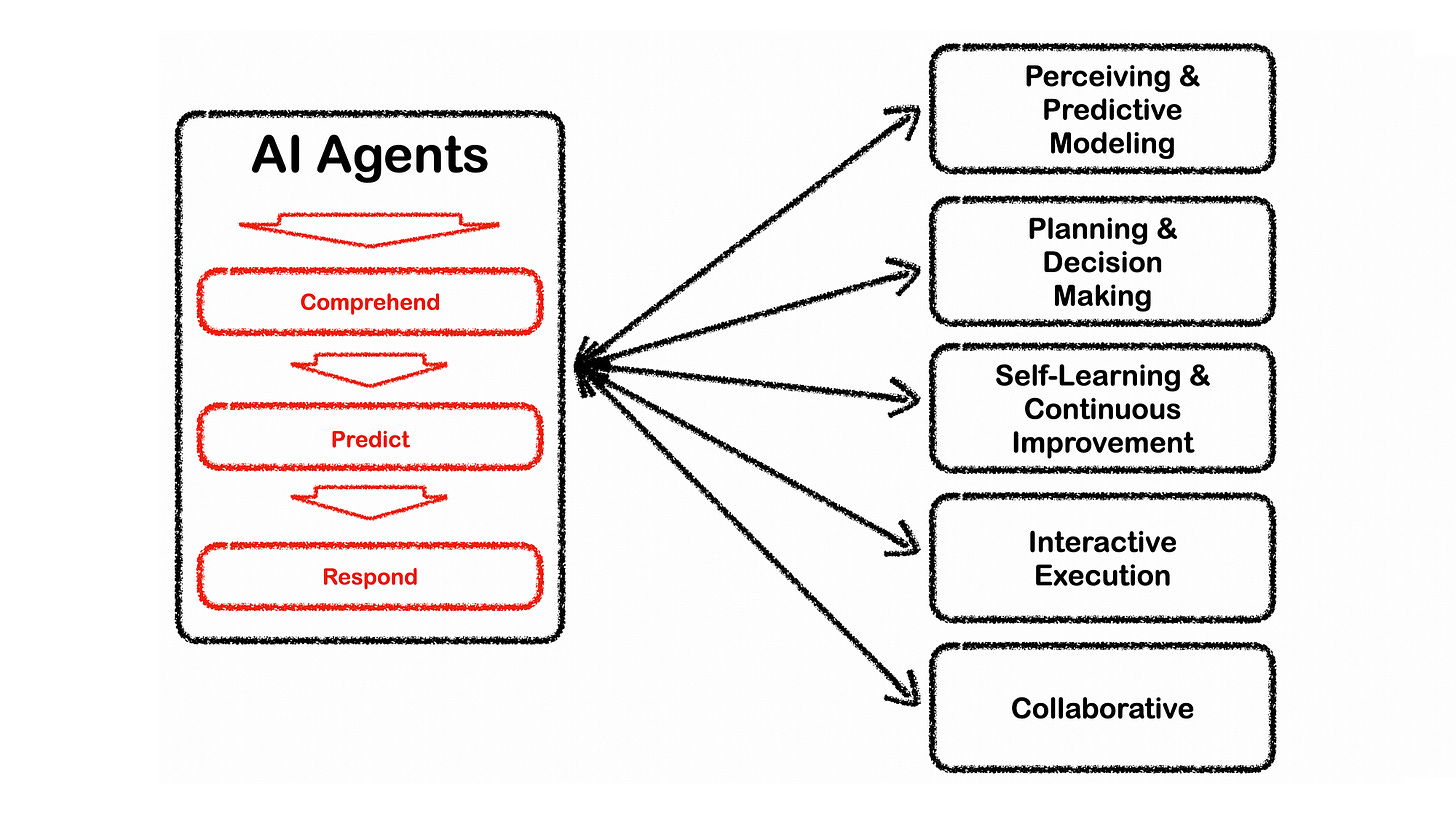

AI Agents are defined as artificial entities which can perceive their environment, make decisions and take actions based on tools available.

Introduction

This is a topic I really enjoyed researching and I was looking forward to writing this. Mostly because I wanted to demystify the idea of agents and what exactly constitutes an agent.

I also wanted to create a clear delineation between domain-specific implementations and wide, general implementations, which are referred to as AGI.

Domain-specific implementations are the area that interests me the most. This category demands consideration of the available build tools, cost, latency, and the practical and commercial implications, all of which I try to cover in this article.

Domain Considerations

There has been much hype, fear mongering and speculation on AGI or Artificial Super Intelligence (ASI) and what organisations are cooking up. That is not my prime interest; I am more interested in how to harness the power of LLMs and Autonomous AI Agents for domain-specific implementations in organisations.

Big commercial drivers of Conversational UIs are companies in banking, retail, financial services and more, which create AI-based UIs for users to interact with regarding products and services.

Any entity that is able to perceive its environment and execute actions can be regarded as an agent.

Where Are We Currently?

Considering narrow domain implementations, we currently find ourselves between levels two and three, most probably at level 2.5.

LangChain has led the charge in creating frameworks for the development of agents, alongside DSPy with its approach to programming LLMs and LlamaIndex with its agentic RAG approach.

These agents perform at a level comparable to between 50% and 90% of skilled adults, with strategic task automation capabilities. Based on user input, the agents can decompose the user's request, plan sub-tasks and execute those tasks in an ordered fashion to reach a conclusion.

These agents are capable of iterating over intermediate sub-tasks until a conclusive answer is reached.

Practical Example

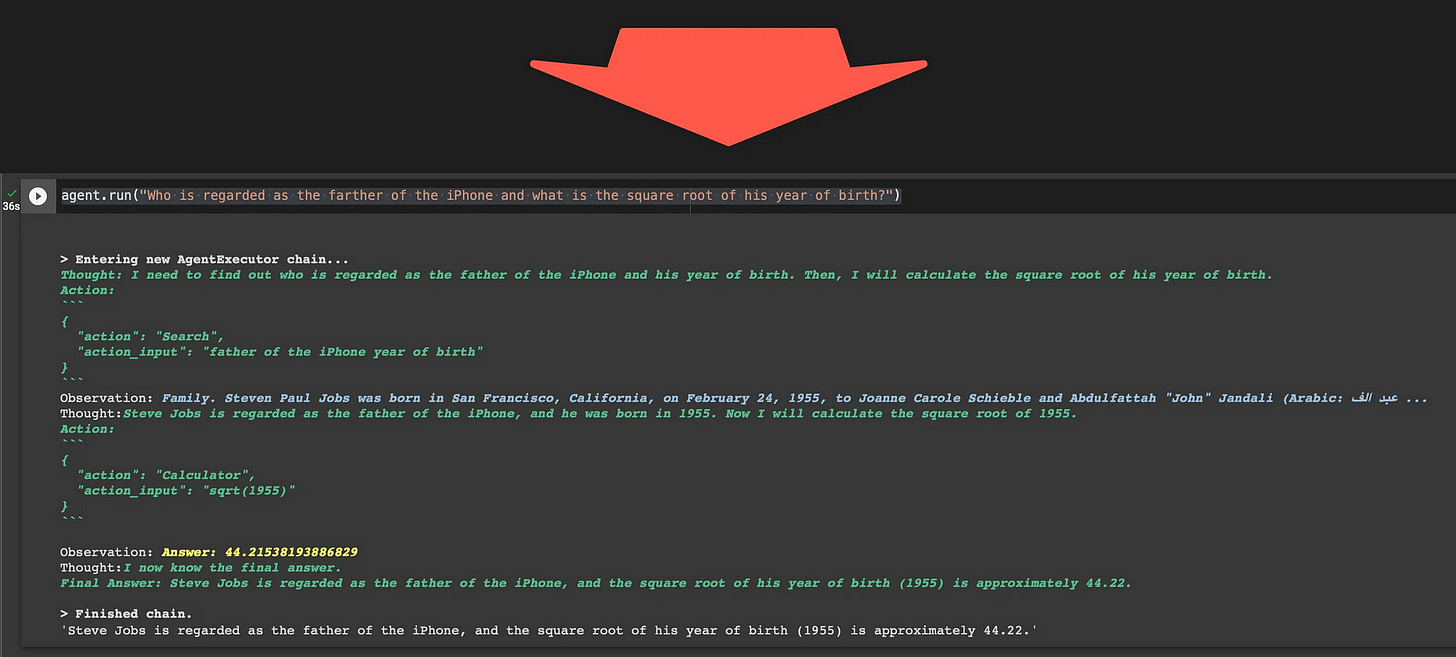

Consider the following question:

Who is regarded as the father of the iPhone and what is the square root of his year of birth?

This is quite an ambiguous and complex question, and answering it demands a few steps. There is a mathematics task at the end, but knowledge also needs to be retrieved first.

For this practical example, the agent has a few actions at its disposal:

SerpApi: below is a screenshot of the SerpApi website. SerpApi makes data extraction from search engine results actionable.

GPT-4 (gpt-4-0314).

Below, consider this LangChain-based agent’s output and notice how the agent goes from thought, to action, to observation in a sequential fashion until it reaches a final answer and the chain is finished.

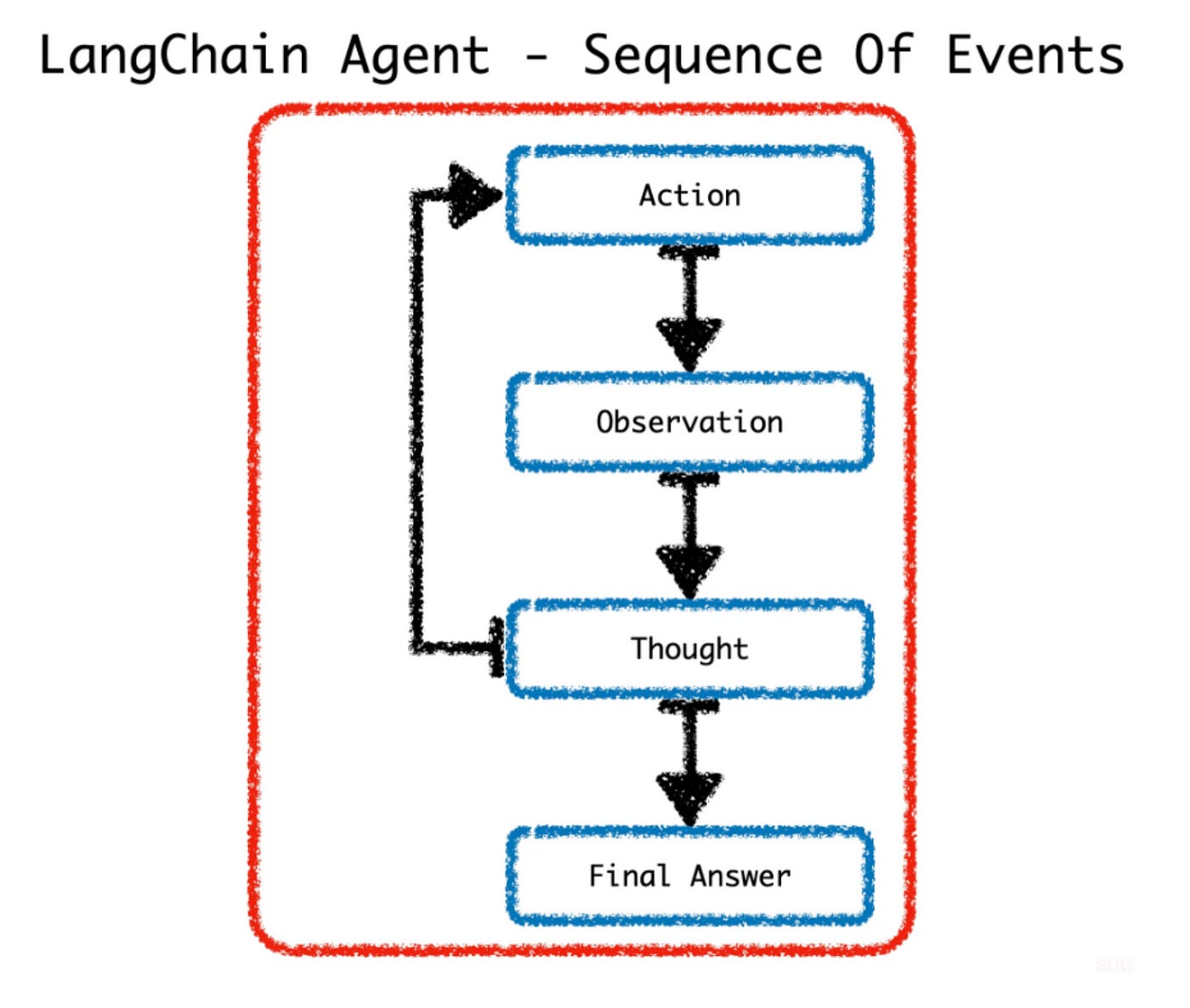

Below is a graphic depiction of the sequence of events of the agent.

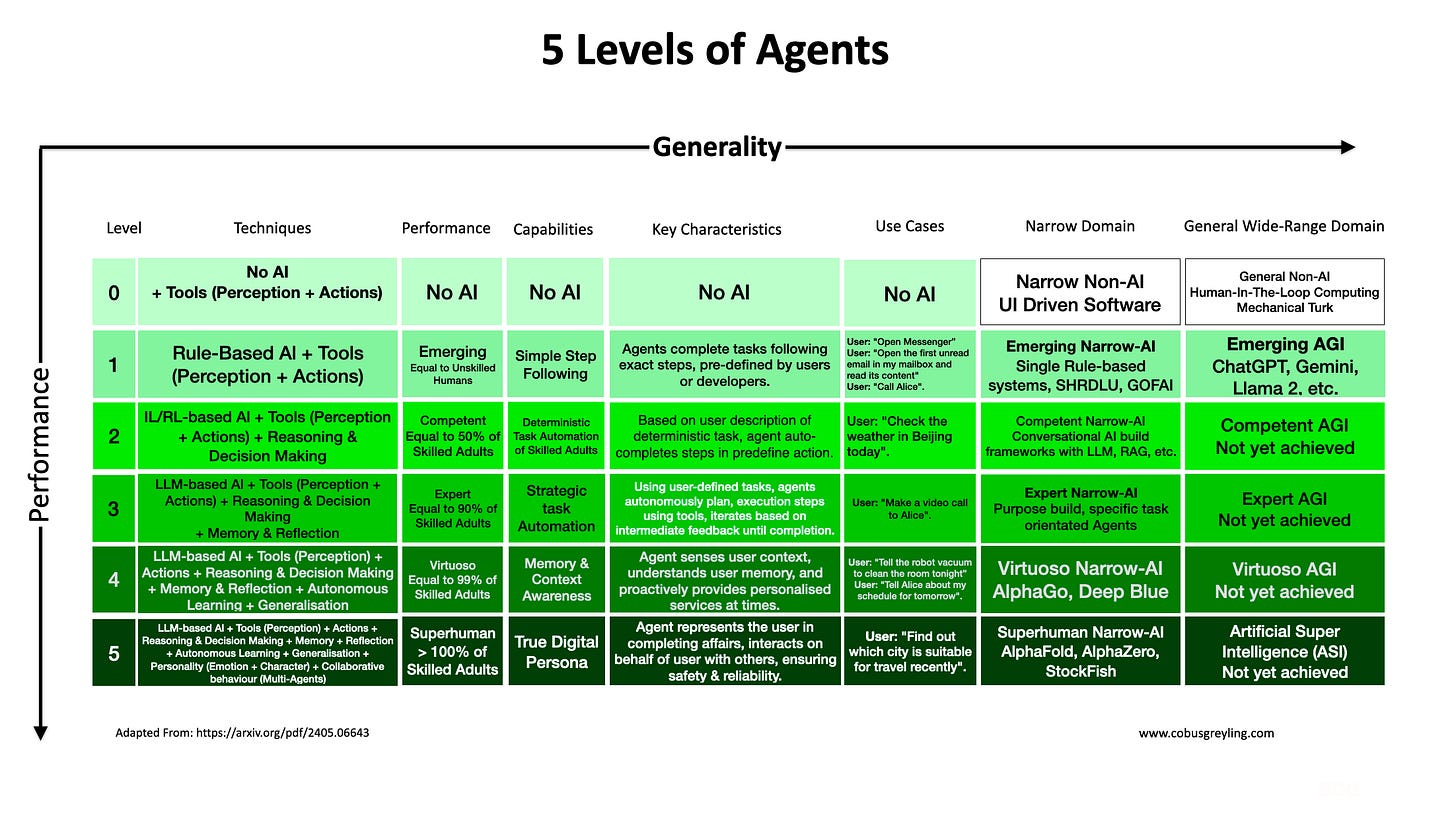

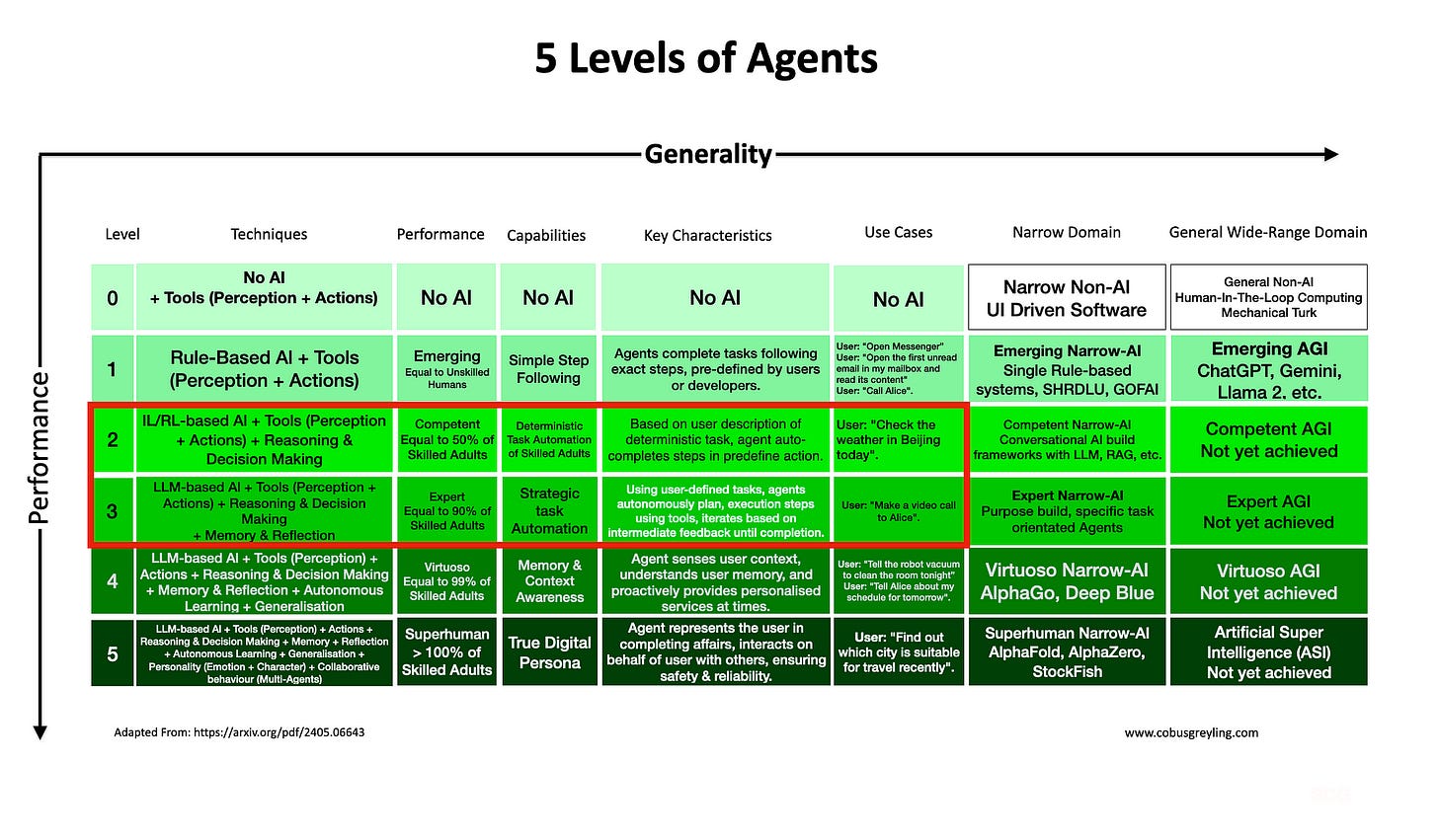
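The thought, action, observation sequence can be sketched in a few lines of Python. This is only an illustrative sketch and not LangChain's implementation: `scripted_llm` is a hypothetical stand-in for the LLM, `search` is a hypothetical stand-in for a search tool such as SerpApi and returns a hard-coded observation, and the tool names and the `max_steps` limit are my own assumptions.

```python
import math
import re

def search(query: str) -> str:
    # Hypothetical stand-in for a search tool: returns a hard-coded observation.
    return "Steve Jobs is regarded as the father of the iPhone. He was born in 1955."

def calculator(expression: str) -> str:
    # Deliberately tiny: only understands "sqrt(<number>)".
    match = re.fullmatch(r"sqrt\((\d+(?:\.\d+)?)\)", expression.strip())
    if match is None:
        raise ValueError(f"unsupported expression: {expression!r}")
    return f"{math.sqrt(float(match.group(1))):.2f}"

TOOLS = {"search": search, "calculator": calculator}

def scripted_llm(question: str, history: list) -> dict:
    # Hypothetical stand-in for the LLM: picks the next step from what was observed so far.
    if not history:
        return {"thought": "I need to find the person and his year of birth.",
                "action": "search", "input": question}
    if len(history) == 1:
        year = re.search(r"\b(\d{4})\b", history[0]["observation"]).group(1)
        return {"thought": "Now I need the square root of the year.",
                "action": "calculator", "input": f"sqrt({year})"}
    return {"thought": "I now know the final answer.",
            "final_answer": "Steve Jobs; the square root of his year of birth "
                            f"is about {history[1]['observation']}."}

def run_agent(question: str, max_steps: int = 5):
    history = []
    for _ in range(max_steps):
        step = scripted_llm(question, history)            # thought
        if "final_answer" in step:
            return step["final_answer"], history
        observation = TOOLS[step["action"]](step["input"])  # action
        history.append({**step, "observation": observation})  # observation
    return None, history  # no conclusion within the step limit

question = ("Who is regarded as the father of the iPhone and "
            "what is the square root of his year of birth?")
answer, history = run_agent(question)
for step in history:
    print("Thought:", step["thought"])
    print("Action:", step["action"], "| Input:", step["input"])
    print("Observation:", step["observation"])
print("Final answer:", answer)
```

In a real agent the scripted function would be replaced by an LLM call whose output is parsed into the same thought, action and input fields.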

In the table showing the five levels of agents, you will notice level one agents are rule-based. Rule-based agents can have some autonomy, but in practice they consist of predefined steps which are executed based on predefined rules.

The image below shows a more rule-based approach to building agents, with Generative AI nodes. Later in this article I will delve deeper into why rule-based automation with measured autonomy is an astute approach to enterprise implementations, as opposed to a completely autonomous agent approach.

Basic Structure of Narrow Domain Agents

An agent has at its backbone a Large Language Model (LLM). Agents also have access to a number of tools. Tools can have specific capabilities, like web search, specific APIs, RAG, mathematics and more.

Tools are described in natural language in order for the agent to know which tool to make use of at a specific stage in the process. The number of tools and capabilities of tools determine how powerful the agent is.
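To make the idea of natural-language tool descriptions concrete, here is a minimal sketch. The `Tool` class, the tool names, the descriptions and the prompt wording are all hypothetical and only illustrate the pattern; real frameworks have their own tool abstractions.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Tool:
    name: str
    description: str            # natural language, read by the LLM
    run: Callable[[str], str]   # the actual capability

def build_tool_prompt(tools: List[Tool]) -> str:
    # The descriptions are placed in the prompt so the LLM can pick a tool by name.
    lines = ["You have access to the following tools:"]
    for tool in tools:
        lines.append(f"- {tool.name}: {tool.description}")
    lines.append("Reply with the name of the tool to use next.")
    return "\n".join(lines)

# Hypothetical tools with placeholder implementations.
tools = [
    Tool("web_search",
         "Useful for looking up facts about people, places and events.",
         lambda query: f"(search results for {query!r})"),
    Tool("calculator",
         "Useful for answering questions about mathematics.",
         lambda expression: "(calculator result)"),
]

registry = {tool.name: tool for tool in tools}
prompt = build_tool_prompt(tools)
print(prompt)

# Once the LLM replies with a tool name, the agent dispatches on it.
chosen = "web_search"  # assumed LLM reply, hard-coded for illustration
print(registry[chosen].run("father of the iPhone"))
```

The quality of the descriptions matters as much as the tools themselves, since the description is the only thing the LLM sees when deciding which tool to use.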

Practical Considerations

Again considering narrow-domain implementations of Agents, there are a few practical considerations to keep in mind.

Sensory

Most agents currently are virtual, and are accessed via voice or text input. These agents can reason and reach conclusions and then in turn respond in voice or text. Multimodal elements can be added where agents can receive image or video as input, or generate images or video as output.

However, agents do not in general have other sensory capabilities like vision, touch, movement etc. With all the development in terms of robotics, combining agents with sensory / physical ability will usher in a new era.

LLM Backbone

As I mentioned earlier, the agent has as its backbone an LLM, or more specifically an LLM API which is called. Agents go through multiple iterations and API calls. This creates a single dependency which needs to be catered for; hence I would argue that any production agent implementation will need redundancy built into the agent backbone.

Self-hosted LLMs or local inference servers are the optimal way to ensure uptime.
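One simple way to build in redundancy is to try a list of LLM backends in order and fall back to the next one on failure. The sketch below assumes two hypothetical backends, `hosted_api` (which simulates an outage) and `local_model`; neither is a real client, and a production version would also need timeouts and retries.

```python
from typing import Callable, Sequence, Tuple

class AllBackendsFailed(RuntimeError):
    """Raised when every configured LLM backend has failed."""

def complete_with_fallback(
    prompt: str,
    backends: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    # Try each backend in order; return (backend name, completion) from the first that works.
    errors = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))

def hosted_api(prompt: str) -> str:
    # Hypothetical commercial LLM API, simulating an outage.
    raise ConnectionError("service unavailable")

def local_model(prompt: str) -> str:
    # Hypothetical self-hosted model or local inference server.
    return f"local answer to: {prompt}"

name, answer = complete_with_fallback(
    "ping", [("hosted_api", hosted_api), ("local_model", local_model)]
)
print(name, "->", answer)
```

Because an agent makes several LLM calls per question, the fallback should wrap every call, not only the first one.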

Cost

Making use of commercial LLM APIs will be very costly, considering that for each question posed to the agent the LLM is queried multiple times.

With thousands of users, the cost problem is compounded.
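A back-of-the-envelope calculation shows how the number of LLM calls per question multiplies the bill. Every figure below (user count, questions per user, tokens per call, price) is a made-up assumption for illustration and not real vendor pricing.

```python
def estimate_monthly_cost(users, questions_per_user, llm_calls_per_question,
                          tokens_per_call, price_per_1k_tokens):
    """Return the estimated monthly LLM spend, in the currency of the price."""
    total_calls = users * questions_per_user * llm_calls_per_question
    total_tokens = total_calls * tokens_per_call
    return total_tokens / 1000 * price_per_1k_tokens

# Assumed figures only; replace them with your own measurements and pricing.
ASSUMED = dict(users=5000, questions_per_user=20,
               tokens_per_call=1500, price_per_1k_tokens=0.01)

single_call = estimate_monthly_cost(llm_calls_per_question=1, **ASSUMED)
agent = estimate_monthly_cost(llm_calls_per_question=4, **ASSUMED)

print(f"one LLM call per question:   {single_call:,.2f}")
print(f"four LLM calls per question: {agent:,.2f}")
```

The cost scales linearly with the number of calls the agent makes per question, so an agent that needs four iterations costs roughly four times as much as a single prompt with the same token count.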

Latency

Conversational systems demand sub-second responses. In any complex system, like agents which need to perform multiple steps internally for each dialog turn, each step adds to the total latency experienced by the user.

This can become a challenge to overcome.
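As a rough illustration, the latency of one dialog turn is the sum of its sequential steps. The step names and timings below are assumptions chosen for the example, not measurements.

```python
def turn_latency(steps):
    """Total latency in seconds of sequential steps given as (name, seconds) pairs."""
    return sum(seconds for _, seconds in steps)

def within_budget(steps, budget_s=1.0):
    # A sub-second budget is assumed here, following the text above.
    return turn_latency(steps) <= budget_s

# Assumed timings in seconds, for illustration only.
single_llm_call = [("llm: answer", 0.8)]
agent_turn = [
    ("llm: plan", 0.8),
    ("tool: search", 0.5),
    ("llm: reason", 0.8),
    ("tool: calculator", 0.05),
    ("llm: final answer", 0.8),
]

for label, steps in [("single call", single_llm_call), ("agent", agent_turn)]:
    print(f"{label}: {turn_latency(steps):.2f}s, within budget: {within_budget(steps)}")
```

Even when each individual call is fast, a handful of sequential calls quickly exceeds a conversational budget, which is why streaming intermediate output or running independent steps in parallel is worth considering.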

Not Reaching Conclusion

It is important to note that currently there are instances where the agent does not reach a conclusion, or reaches a conclusion prematurely. If the user has a view into the reasoning steps of the agent, the user’s query might be satisfied by intermediate steps in the agent’s reasoning. The user can then stop the agent and inform it that enough information has been given.
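A minimal sketch of this idea: the agent exposes its steps one at a time, a callback decides whether the user has seen enough, and a step limit guards against an agent that never concludes. The step format, the status names and the scripted agents are hypothetical.

```python
import itertools

def run_with_oversight(step_source, should_stop, max_steps=5):
    """Consume agent steps one by one, allowing an early stop or a hard step limit."""
    seen = []
    for step in step_source:
        seen.append(step)
        if step.get("final"):
            return {"status": "finished", "steps": seen}
        if should_stop(step):
            return {"status": "stopped_by_user", "steps": seen}
        if len(seen) >= max_steps:
            return {"status": "max_steps_reached", "steps": seen}
    return {"status": "no_conclusion", "steps": seen}

def looping_agent():
    # Hypothetical agent that never reaches a conclusion.
    for attempt in itertools.count(1):
        yield {"thought": f"Attempt {attempt}: I should search again.",
               "observation": "no new information"}

def helpful_agent():
    # Hypothetical agent whose first observation already answers part of the question.
    yield {"thought": "Find the person.", "observation": "Steve Jobs, born 1955"}
    yield {"thought": "Compute the square root.", "observation": "44.22"}
    yield {"thought": "Done.", "observation": "", "final": True}

capped = run_with_oversight(looping_agent(), lambda step: False, max_steps=3)
stopped = run_with_oversight(helpful_agent(),
                             lambda step: "Steve Jobs" in step["observation"])
print(capped["status"], len(capped["steps"]))
print(stopped["status"], len(stopped["steps"]))
```

In a real interface the `should_stop` callback would be driven by the user, for example a stop button shown next to the streamed reasoning steps.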

Tools & Cost

Agents need to have access to tools in order to perform their tasks. There can be a whole marketplace where tools are created and shared, so that makers need not create tools from scratch but can select an existing tool.

These tools can be free or paid; tools may also access APIs which are charged for.

The Term Agents

As AI has progressed, the term agent is employed to describe entities that demonstrate intelligent behaviour and possess abilities such as:

autonomy,

reactivity,

pro-activeness, and

social interactions.

In the 1950s, Alan Turing introduced the iconic Turing Test, a pivotal concept in AI designed to investigate whether machines can exhibit intelligent behaviour akin to humans. These AI entities are commonly referred to as agents, and they form the foundational components of AI systems.

Transfer Learning

Transfer learning involves leveraging the knowledge acquired from one task and applying it to another task.

Foundation models typically adhere to this approach, where a model is initially trained on a related task and subsequently fine-tuned for the specific downstream task of interest.

Transfer learning is a powerful concept and adds to the versatility of models, where never-before-seen tasks can be performed based on past learning.

Conclusion

It feels to me that this is currently overlooked, but Autonomous AI Agents represent a pivotal advancement in technology.

Agents, equipped with artificial intelligence, have the capacity to:

Operate independently,

Make decisions &

Take actions without constant human intervention.

In the future, autonomous AI agents are set to revolutionise industries ranging from healthcare and finance to manufacturing and transportation.

There are, however, considerations regarding accountability, transparency, ethics, responsibility and bias in decision-making.

Despite these challenges, the future with autonomous AI agents holds tremendous promise. As technology continues to evolve, these agents will become increasingly integrated into our daily lives.

I’m currently the Chief Evangelist @ Kore AI. I explore & write about all things at the intersection of AI & language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.