Runtime Tool Development for AI Agents

Is it the future of AI Agent Integration?

In Short

In this article, the terms tools, functions and skills are used interchangeably.

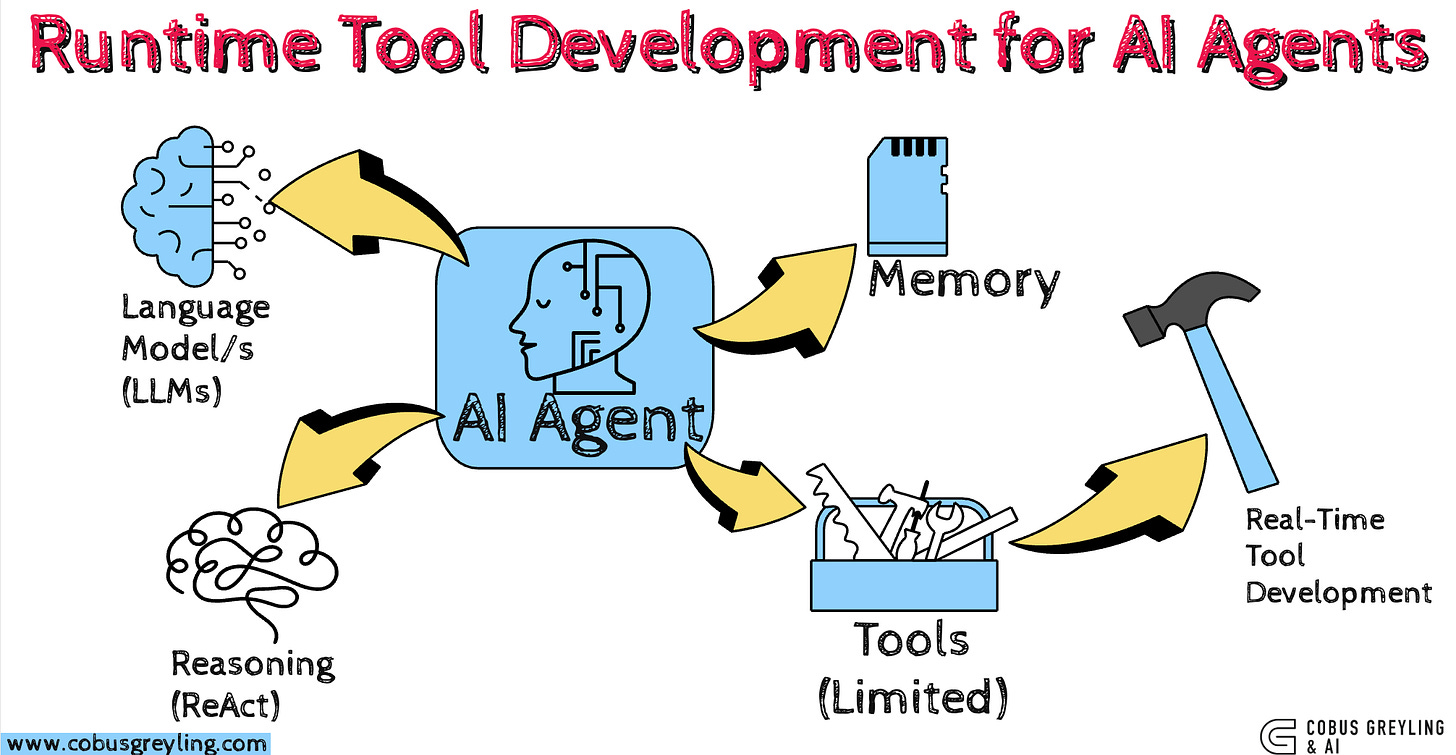

Tools serve as the hands and feet of AI Agents and act as the integration points.

MCP (Model Context Protocol) aims to make this integration work like a digital USB-C port.

Ultimately, the power and effectiveness of AI Agents rest on how well they can control and integrate with the outside world and other systems.

Tool search and ranking are growing in importance, especially when a single AI Agent has many tools at its disposal.
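Tool search and ranking can be pictured as scoring each tool's description against the incoming request and keeping the best matches. The sketch below is illustrative only: it assumes tools are described in plain text, swaps in word-count cosine similarity as a crude stand-in for a real embedding model, and uses a hypothetical tool catalogue.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lower-case word counts: a crude stand-in for an embedding model (assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_tools(query: str, tools: dict[str, str], top_k: int = 3) -> list[tuple[str, float]]:
    """Score every tool description against the query and return the top_k matches."""
    q = bag_of_words(query)
    scored = [(name, cosine(q, bag_of_words(desc))) for name, desc in tools.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)  # highest similarity first
    return scored[:top_k]


# Hypothetical tool catalogue, for illustration only.
TOOLS = {
    "molar_mass": "compute the molar mass of a chemical formula",
    "ideal_gas_pressure": "compute gas pressure from moles, volume and temperature",
    "send_email": "send an email message to a recipient",
}

for name, score in rank_tools("what is the molar mass of water?", TOOLS, top_k=2):
    print(f"{name}: {score:.2f}")
# molar_mass: 0.53
# ideal_gas_pressure: 0.00
```

A production system would replace `bag_of_words` with an embedding model and typically add a reranking pass over the top candidates.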

Anthropic advocates “don’t build agents, build skills”, an approach in which the LLM writes the skill or tool required for the task.

The ideal remains for the AI Agent to write tools (integrations) on demand, as each task requires.

A recent study delivers exactly this capability in practice.

Test-Time Tool Evolution enables AI Agents to synthesise, verify, and refine executable tools during inference, achieving 62% accuracy.

Recent research highlights that developers prioritise control and oversight when using AI.

Most treat AI primarily as an advanced autocompleter, where strong context awareness and planning remain essential.

AI Agents incorporate these elements, yet concerns exist around instability introduced by autonomous behaviour and risks associated with self-coding.

Docker containers and sandboxes remain popular mechanisms for containing AI-driven code development.
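As a rough illustration of containment, the sketch below runs candidate code in a separate Python process with a timeout and reports whether it exited cleanly. It is a simplified stand-in, not a Docker setup: process isolation via `subprocess` limits runaway code but is not a security boundary, and `run_isolated` is a hypothetical helper name.

```python
import subprocess
import sys
import tempfile
import textwrap
from pathlib import Path


def run_isolated(code: str, timeout_s: float = 5.0) -> tuple[bool, str]:
    """Run candidate code in a fresh interpreter process with a timeout.

    Returns (succeeded, combined stdout and stderr). This is process
    isolation only, NOT a security sandbox; real untrusted code belongs
    in a container or VM.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "candidate.py"
        script.write_text(textwrap.dedent(code))
        try:
            result = subprocess.run(
                # -I: isolated mode (ignores PYTHON* env vars and user site-packages)
                [sys.executable, "-I", str(script)],
                cwd=workdir,  # keep any files the code writes inside the temp dir
                capture_output=True,
                text=True,
                timeout=timeout_s,  # the child process is killed if it runs too long
            )
        except subprocess.TimeoutExpired:
            return False, "timed out"
        return result.returncode == 0, result.stdout + result.stderr


# A generated tool plus its own check; a non-zero exit means validation failed.
candidate = '''
def celsius_to_kelvin(c):
    return c + 273.15

assert abs(celsius_to_kelvin(0) - 273.15) < 1e-9
print("ok")
'''
print(run_isolated(candidate))  # (True, 'ok\n')
```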

Tools for AI Agents

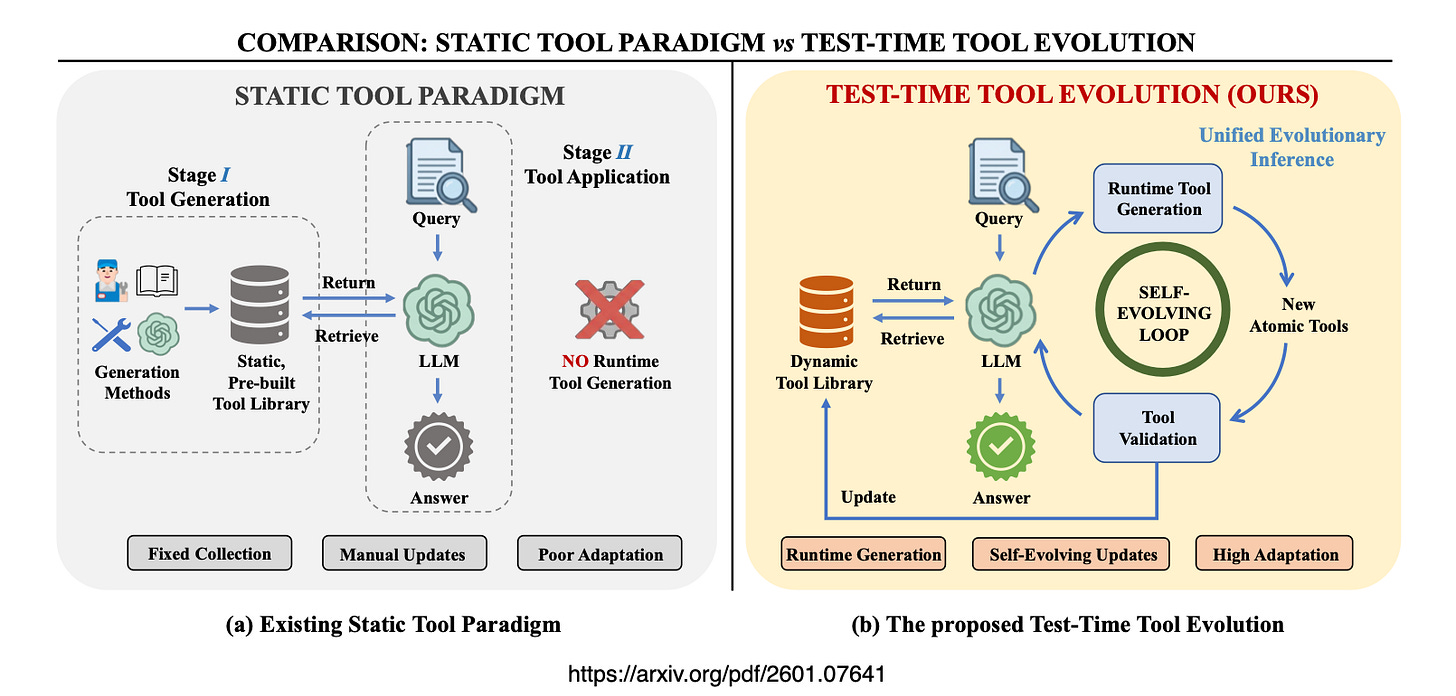

The study advocates a shift from rigid, pre-defined tool libraries to dynamic, on-demand tool creation.

AI Agents start with an empty library, generate problem-specific Python functions, validate them in isolated environments and decompose them into reusable atomic units.
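To make “atomic units” concrete, here is a hypothetical, hand-written example (not taken from the study): a problem-specific function that hard-codes water is split into two reusable pieces that work for any compound. The `ATOMIC_MASSES` table holds approximate standard atomic weights for a few elements only.

```python
# Before (hypothetical): a one-off function that only answers a question about water.
def moles_of_water_in_grams(grams: float) -> float:
    return grams / (2 * 1.008 + 15.999)


# After (hypothetical): the same logic as two reusable atomic tools.
ATOMIC_MASSES = {"H": 1.008, "O": 15.999, "C": 12.011, "N": 14.007}  # g/mol, approximate


def molar_mass(composition: dict[str, int]) -> float:
    """Molar mass in g/mol from an element -> atom-count mapping."""
    return sum(ATOMIC_MASSES[element] * count for element, count in composition.items())


def moles(mass_g: float, molar_mass_g_per_mol: float) -> float:
    """Amount of substance in mol for a given mass in grams."""
    return mass_g / molar_mass_g_per_mol


water = {"H": 2, "O": 1}
print(round(moles(18.0, molar_mass(water)), 4))  # 0.9992
```

The atomic versions can be recombined for carbon dioxide, ammonia and so on, whereas the original function would have to be rewritten for each compound.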

Static Tools Limit AI Agents

The study argues that current tool systems for AI Agents have two major problems that stop them from working well in scientific settings:

Tools are too few, scattered and different from each other

Scientific tools are rare, poorly organised and vary widely from one project to the next.

Because of that, nobody can collect and prepare a complete, ready-made list of tools by hand.

It is simply impossible to build a full library ahead of time.

Ready-made lists cannot handle completely new problems

When a question comes up that needs a brand-new calculation step, no pre-made tool exists for it.

The AI Agent can only pick from what is already in the box.

It stays passive, like someone choosing from an old toolbox instead of inventing the right tool for the job.

These two weaknesses create a hard limit.

Static tool libraries keep AI Agents from truly discovering solutions to new scientific problems.

The paper introduces Test-Time Tool Evolution (TTE) so the AI Agent can write, verify and improve its own tools while solving the problem.

This turns the AI Agent from a passive user of tools into an active creator of exactly what it needs.

TTE overcomes both limitations by generating tools at runtime aligned precisely with the current problem.

Runtime Tool Writing

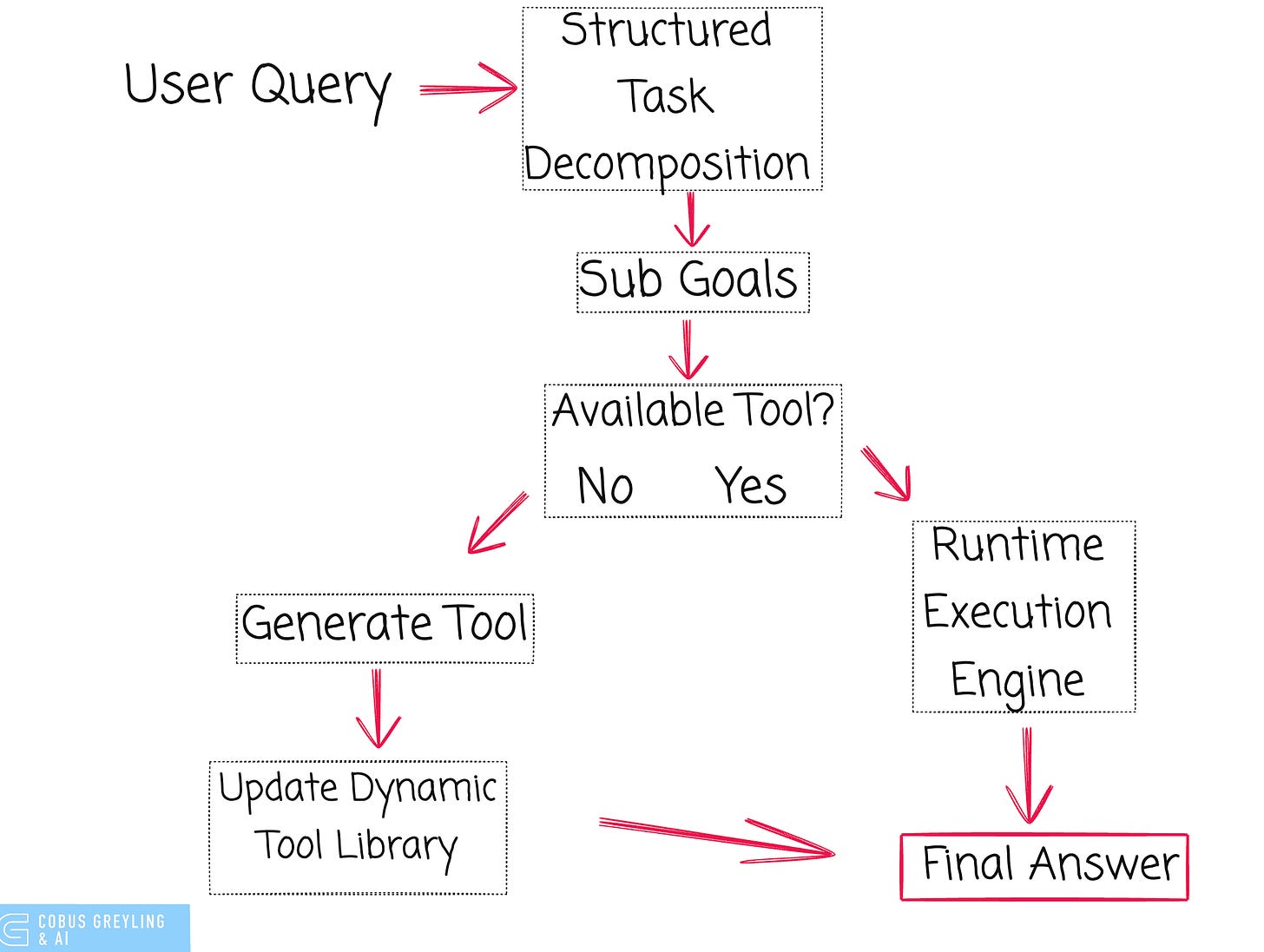

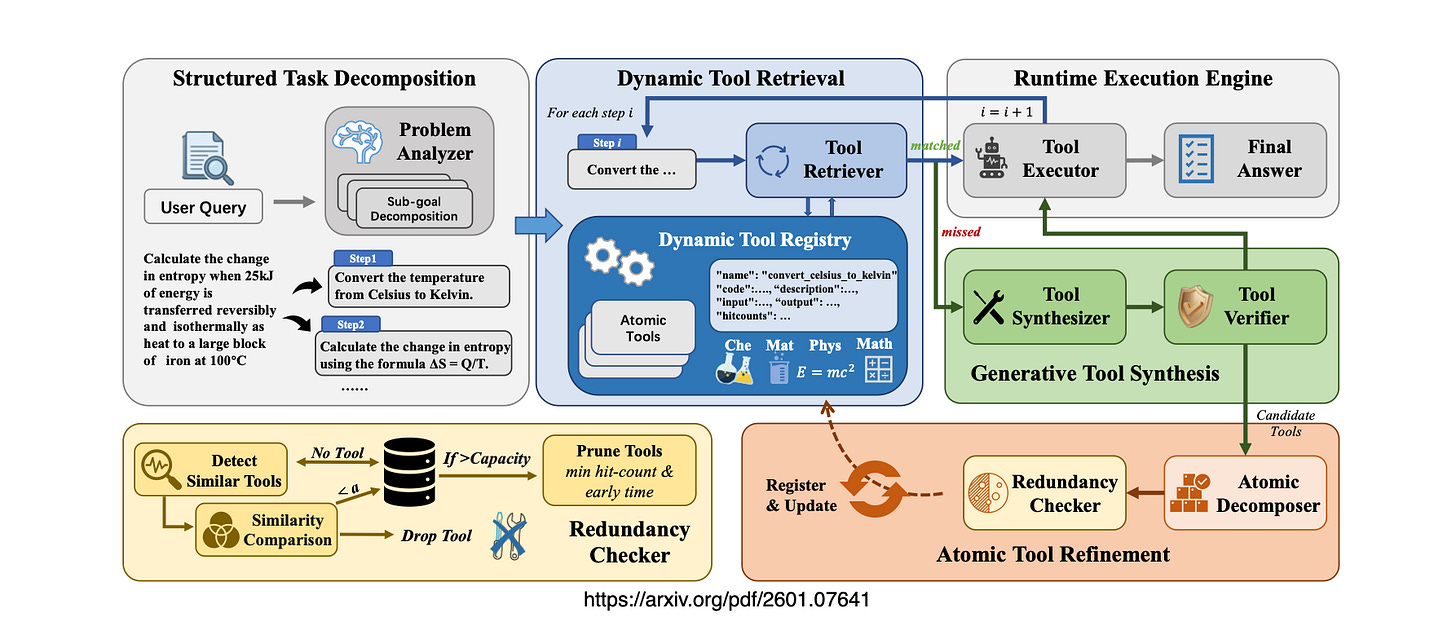

The study’s proposal operates as a closed-loop workflow with five stages:

Structured Task Decomposition breaks complex scientific queries into computational sub-goals.

Dynamic Tool Retrieval searches the evolving library using semantic similarity via embeddings and reranking.

Generative Tool Synthesis creates new Python code through chain-of-thought reasoning when no match exists.

Atomic Tool Refinement validates code in Docker environments, decomposes functions into reusable atomic units, deduplicates them via code embeddings and applies LRU (least recently used) eviction to maintain a 500-tool cap.

Runtime Execution Engine executes the tool sequence, produces the answer and updates the library for future reuse.
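The deduplication and eviction described in these stages can be sketched as a bounded tool library. The 500-tool cap and LRU eviction come from the study; the rest is assumption: the `ToolLibrary` class and its methods are hypothetical, Jaccard similarity over code tokens is swapped in for the code embeddings the study uses, and the 0.9 duplicate threshold is arbitrary.

```python
import re
from collections import OrderedDict


def token_set(source: str) -> set[str]:
    """Word tokens of a code snippet: a stand-in for a code embedding (assumption)."""
    return set(re.findall(r"\w+", source))


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two token sets, from 0.0 (disjoint) to 1.0 (identical)."""
    return len(a & b) / len(a | b) if a | b else 1.0


class ToolLibrary:
    """Hypothetical bounded tool store with near-duplicate rejection and LRU eviction."""

    def __init__(self, capacity: int = 500, dup_threshold: float = 0.9):
        self.capacity = capacity            # 500 mirrors the cap reported in the study
        self.dup_threshold = dup_threshold  # arbitrary illustrative value
        # Ordering doubles as recency: least recently used entry first.
        self._tools: OrderedDict[str, str] = OrderedDict()

    def add(self, name: str, source: str) -> str:
        """Store a tool, or reuse a near-duplicate; return the name it is kept under."""
        tokens = token_set(source)
        duplicate = next(
            (n for n, src in self._tools.items()
             if jaccard(tokens, token_set(src)) >= self.dup_threshold),
            None,
        )
        if duplicate is not None:
            self._tools.move_to_end(duplicate)  # a reuse counts as a recent use
            return duplicate
        self._tools[name] = source
        self._tools.move_to_end(name)
        if len(self._tools) > self.capacity:
            self._tools.popitem(last=False)  # evict the least recently used tool
        return name

    def get(self, name: str) -> str:
        """Fetch a tool's source and mark it as recently used."""
        self._tools.move_to_end(name)
        return self._tools[name]

    def __len__(self) -> int:
        return len(self._tools)

    def __contains__(self, name: str) -> bool:
        return name in self._tools


lib = ToolLibrary(capacity=2)  # tiny cap so eviction is visible
print(lib.add("kelvin", "def kelvin(c): return c + 273.15"))           # kelvin
print(lib.add("kelvin_v2", "def kelvin(c):\n    return c + 273.15"))   # kelvin (duplicate)
lib.add("pressure", "def pressure(n, r, t, v): return n * r * t / v")
lib.get("kelvin")                                   # kelvin is now the most recent
lib.add("moles", "def moles(m, mm): return m / mm")  # over capacity: evicts "pressure"
print("pressure" in lib, "kelvin" in lib)            # False True
```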

The system bootstraps from scratch or adapts existing tools across domains.
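A toy version of the bootstrap behaviour: the library starts empty, the first request for a tool triggers synthesis, and later requests reuse what was stored. `resolve_tools` and `fake_synthesize` are hypothetical names; exact-name lookup stands in for semantic retrieval, and a lookup table stands in for LLM code generation and validation.

```python
from typing import Callable

Tool = Callable[[float], float]


def resolve_tools(sub_goals: list[str], library: dict[str, Tool],
                  synthesize: Callable[[str], Tool]) -> list[str]:
    """Map each sub-goal to a tool: reuse from the library, else synthesise and store it.

    Returns a log of the decisions taken, for inspection.
    """
    log = []
    for goal in sub_goals:
        if goal in library:  # exact-name lookup stands in for semantic retrieval
            log.append(f"reused {goal}")
        else:
            library[goal] = synthesize(goal)  # stand-in for LLM generation + validation
            log.append(f"synthesised {goal}")
    return log


def fake_synthesize(goal: str) -> Tool:
    """Hypothetical stand-in for an LLM writing a tool; here just a lookup table."""
    templates: dict[str, Tool] = {
        "celsius_to_kelvin": lambda c: c + 273.15,
        "kelvin_to_celsius": lambda k: k - 273.15,
    }
    return templates[goal]


library: dict[str, Tool] = {}  # bootstrap: start from an empty library
print(resolve_tools(["celsius_to_kelvin"], library, fake_synthesize))
# ['synthesised celsius_to_kelvin']
print(resolve_tools(["celsius_to_kelvin", "kelvin_to_celsius"], library, fake_synthesize))
# ['reused celsius_to_kelvin', 'synthesised kelvin_to_celsius']
```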

Alignment with Anthropic’s Skills Philosophy

Anthropic’s “don’t build agents, build skills” approach emphasises reusable, composable capabilities that AI Agents load dynamically.

Skills package procedural knowledge, allowing a single agent to gain domain expertise without rebuilding entire agent flows.

The study implements this “make” strategy in scientific contexts: the AI Agent writes, verifies and accumulates tools from experience, mirroring the vision of agents authoring their own capabilities at runtime.

Evidence from the Study

Accuracy exceeds Basic Chain-of-Thought by +29% and domain-specific baselines such as CheMatAgent by +6% on the benchmarks.

Tool Reuse Rate demonstrates efficiency with near-zero disposable code and strong consolidation of reusable primitives.

SciEvo Benchmark comprises 1,590 tasks built around the evolved tools, ensuring perfect alignment between tools and problems.

Cross-Domain Transfer repurposes primitives from materials science to chemistry without negative transfer.

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.