The Singularity Is Dead, Intelligence Grows Like a City

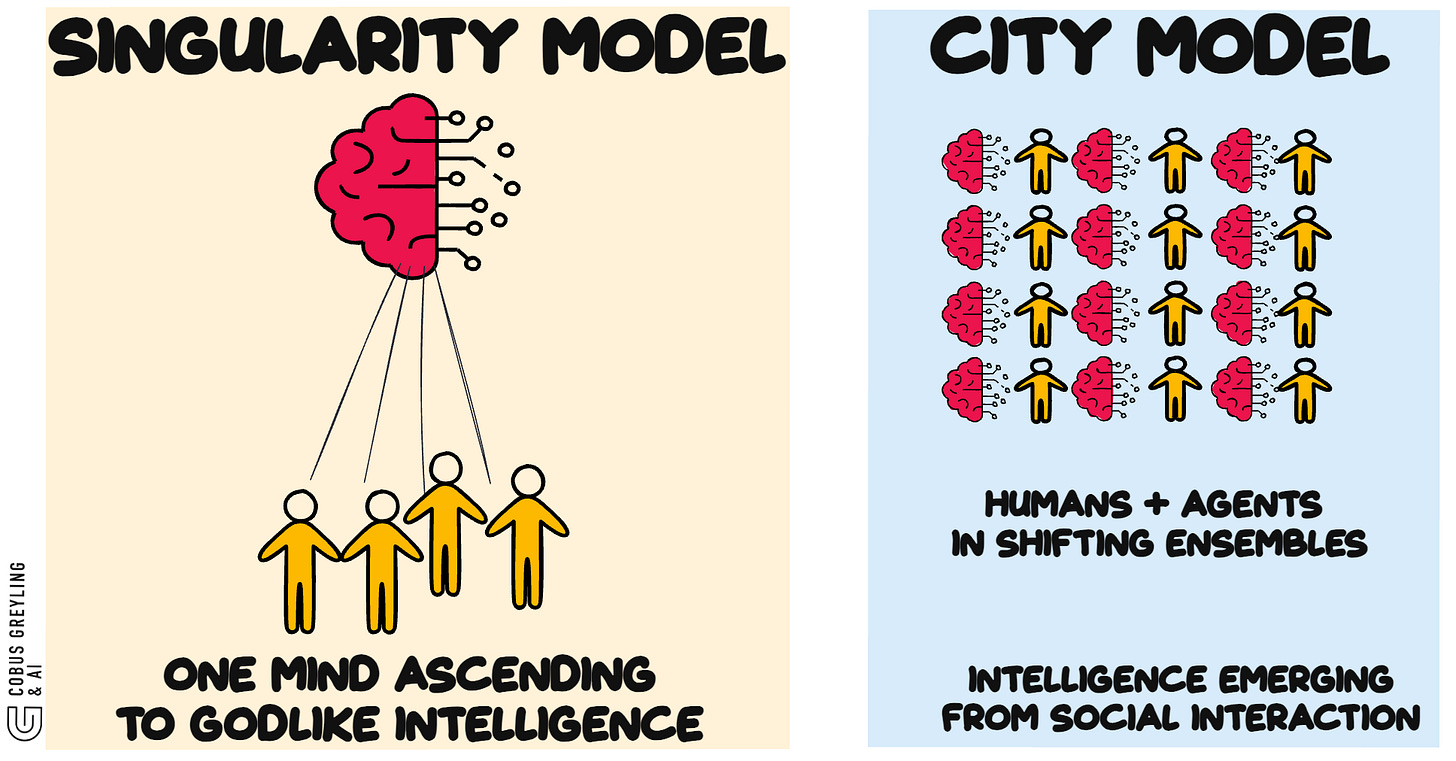

Researchers at Google note that the AI singularity has usually been pitched as a single, titanic mind bootstrapping itself to godlike intelligence.

As one machine. One point of no return.

A new paper argues this vision is almost certainly wrong…and wrong in its most fundamental assumption.

In Short

Every intelligence explosion in history has been social, not individual.

Primate brains scaled with social group size.

Language created cumulative culture.

Writing and institutions externalised intelligence into infrastructure.

AI is the next step in that same sequence…not a break from it.

The paper’s strongest claim…frontier reasoning models are already proving this.

They spontaneously develop internal multi-agent debates without being trained to do so. Intelligence defaults to social, even inside a single model.

If that’s true, the path to more powerful AI doesn’t run through building a bigger oracle. It runs through composing richer social systems.

More Detail

I keep seeing the same pattern repeat.

Individual capability hits a ceiling. Social organisation breaks through it.

Primate intelligence scaled with group size, not habitat difficulty

Language created what Tomasello calls the “cultural ratchet”…knowledge accumulating across generations without any individual reconstructing the whole

Writing and bureaucracy externalised social intelligence into infrastructure

Institutions coordinate across longer time horizons than any participant within them

The authors offer a striking example…

A Sumerian scribe running a grain accounting system didn’t comprehend its macroeconomic function.

The system was functionally more intelligent than he was.

That’s the pattern.

Every intelligence explosion is a new form of social aggregation…not an upgrade to individual hardware.

The society inside the model

This is where it gets interesting.

Recent research from the same team found that reasoning models like DeepSeek-R1 don’t improve merely by “thinking longer.”

They spontaneously simulate multi-agent debates within their own chain of thought…what the researchers call a “society of thought.”

Distinct cognitive perspectives argue, question, verify and reconcile with each other.

None of this was trained explicitly.

When reinforcement learning rewards accuracy alone, models independently discover that robust reasoning is a social process.

Models are rediscovering through optimisation pressure what centuries of epistemology already suggested.

Thinking well means thinking socially…even when it happens inside a single mind.

The centaur era

If intelligence is inherently social, the architecture that follows isn’t one giant model.

It’s human-AI ensembles.

One human directing many AI agents

One AI serving many humans

Many humans and many AIs collaborating in shifting configurations

The authors call these “centaur” configurations.

Composite actors that are neither purely human nor purely machine.

We move in and out of different ensembles many times a day.

Agents fork themselves into specialised versions, differentiate for subtasks, then recombine the results.

The structure starts looking less like a brain and more like an organisation.
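
To make the fork, differentiate, recombine pattern concrete, here is a minimal Python sketch. It is not from the paper. The `Agent` class, its `role` field and the `fork_and_recombine` helper are all hypothetical names, and `run` is a placeholder where a real model call would go.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """Hypothetical agent; `role` stands in for its specialisation."""
    role: str = "generalist"

    def fork(self, role: str) -> "Agent":
        # Forking yields a copy differentiated for one subtask.
        return Agent(role=role)

    def run(self, task: str) -> str:
        # Placeholder for real work (for example, a model call).
        return f"[{self.role}] {task}"


def fork_and_recombine(parent: Agent, subtasks: dict[str, str]) -> str:
    """Fork one specialist per subtask, run them in parallel, merge results."""
    specialists = [(parent.fork(role), task) for role, task in subtasks.items()]
    with ThreadPoolExecutor() as pool:
        # pool.map preserves input order, so the merged report is deterministic.
        results = list(pool.map(lambda pair: pair[0].run(pair[1]), specialists))
    return "\n".join(results)


report = fork_and_recombine(
    Agent(),
    {"researcher": "collect sources", "critic": "check claims"},
)
print(report)
```

The parent never does the subtasks itself. It only splits the work and merges what comes back, which is closer to a manager than to a brain.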

Governance needs a constitution, not a kill switch

This is the part that resonates most with what I’ve been writing about bounded autonomy.

If the next intelligence explosion is social rather than singular, you don’t govern it with a kill switch.

You govern it with checks and balances.

The authors propose AI systems with distinct, explicitly invested values…transparency, equity, due process…whose function is to check and balance other AI systems.

A labor department AI auditing a corporation’s hiring algorithm for disparate impact.

A judicial branch AI evaluating whether an executive branch AI’s risk assessments meet constitutional standards.

Power must check power.

In a world of artificial agents, that means building conflict and oversight into the architecture itself.

Governance as infrastructure, not friction.
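
As a rough illustration of oversight built into the architecture, the sketch below shows a separate auditor reviewing a hiring system's outcomes for disparate impact. The paper does not specify a method. I am assuming the four-fifths rule of thumb from the US EEOC guidelines as the test, and the function names, the `threshold` parameter and the numbers are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class AuditFinding:
    group: str
    selection_rate: float
    impact_ratio: float
    flagged: bool


def audit_disparate_impact(outcomes, threshold=0.8):
    """outcomes: {group: (hired, applicants)} -> list of AuditFinding.

    Assumed test: flag any group whose selection rate falls below
    `threshold` times the highest group's rate (four-fifths rule of thumb).
    """
    rates = {g: hired / applicants for g, (hired, applicants) in outcomes.items()}
    best = max(rates.values())
    findings = []
    for group, rate in rates.items():
        # If nobody was hired at all, there is no rate to compare against.
        ratio = rate / best if best else 1.0
        findings.append(AuditFinding(group, rate, ratio, ratio < threshold))
    return findings


def overseer_review(outcomes):
    """The overseer holds the veto; the audited system does not grade itself."""
    findings = audit_disparate_impact(outcomes)
    return "blocked" if any(f.flagged for f in findings) else "approved"


# Made-up numbers for illustration only.
decisions = {"group_a": (50, 100), "group_b": (30, 100)}
print(overseer_review(decisions))  # blocked
```

The point of the design is the separation. The hiring system produces decisions, and a different component with its own mandate decides whether they stand.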

The pattern is consistent

The monolithic singularity framework leads to policies aimed at preventing a technology that may never exist.

The plurality model focuses attention where it belongs…on the design of mixed human-AI social systems, the norms that govern them and the institutions through which they operate.

Intelligence grows like a city, not like a single meta-mind.

A city doesn’t have a central intelligence.

It has neighbourhoods, institutions, infrastructure, conflict and coordination.

Messy, plural, emergent. And far more capable than any individual within it.

The layers keep aggregating.

The next intelligence explosion won’t come from a single breakthrough model.

It will come from eight billion humans interacting with hundreds of billions of AI agents.

Not a god. A civilisation.

Chief AI Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. From Language Models, AI Agents to Agentic Applications, Development Frameworks & Data-Centric Productivity Tools, I share insights and ideas on how these technologies are shaping the future.

Agentic AI and the next intelligence explosion

The “AI singularity” is often miscast as a monolithic, godlike mind. Evolution suggests a different path: intelligence… (arxiv.org)

COBUS GREYLING

Where AI Meets Language | Language Models, AI Agents, Agentic Applications, Development Frameworks & Data-Centric… (www.cobusgreyling.com)