Three AI Agent Architectures Have Emerged

AI agents have evolved from simple chatbots into autonomous systems capable of complex, goal-oriented tasks, and several distinct architectures have emerged along the way.

There is an ongoing debate in the field about scalability, specialisation, reliability and flexibility.

In this post I outline the three approaches I have seen emerging.

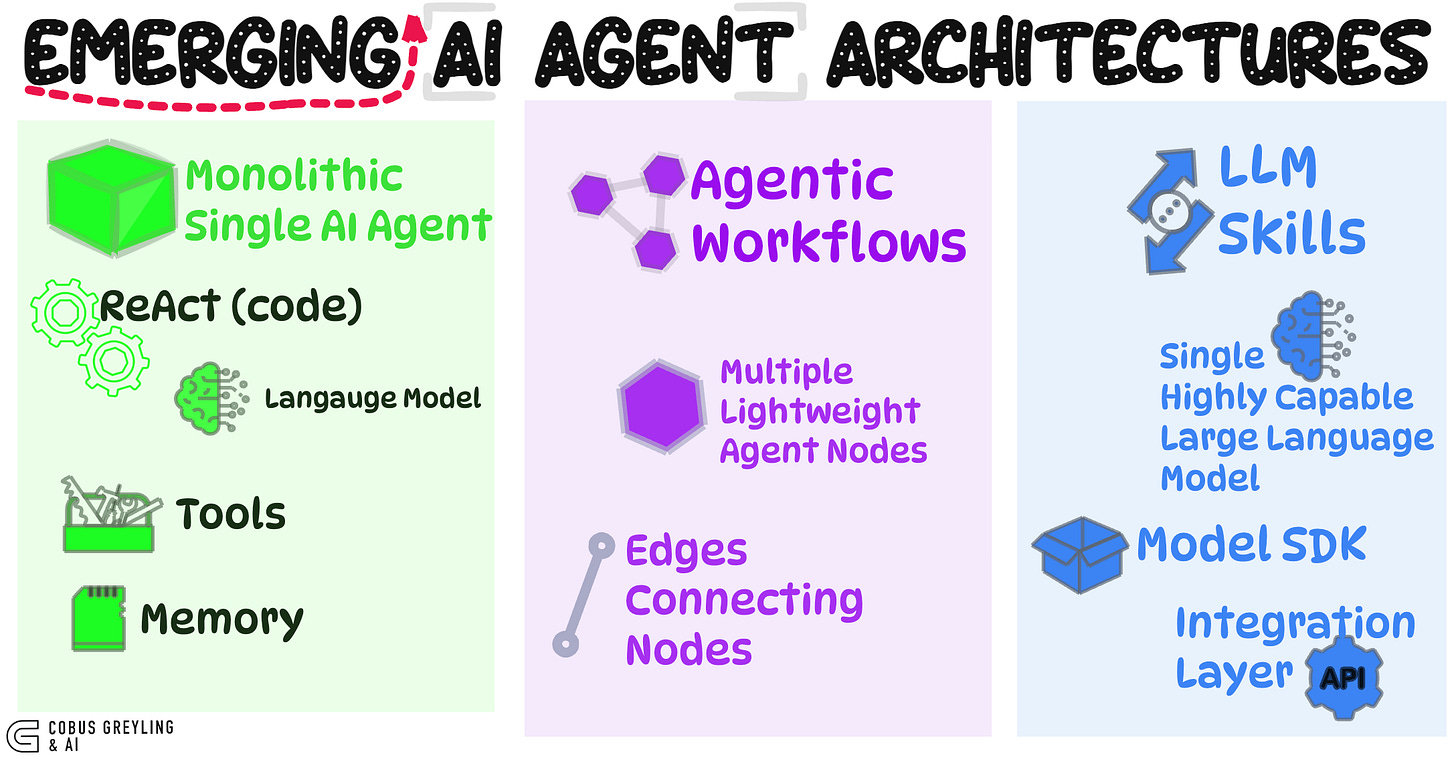

Monolithic Single AI Agent

This was the earliest dominant approach, popularised by frameworks like Auto-GPT and early ReAct (Reason + Act) implementations.

Core Idea

A single powerful LLM serves as the “brain,” equipped with a set of tools (also called functions or plugins).

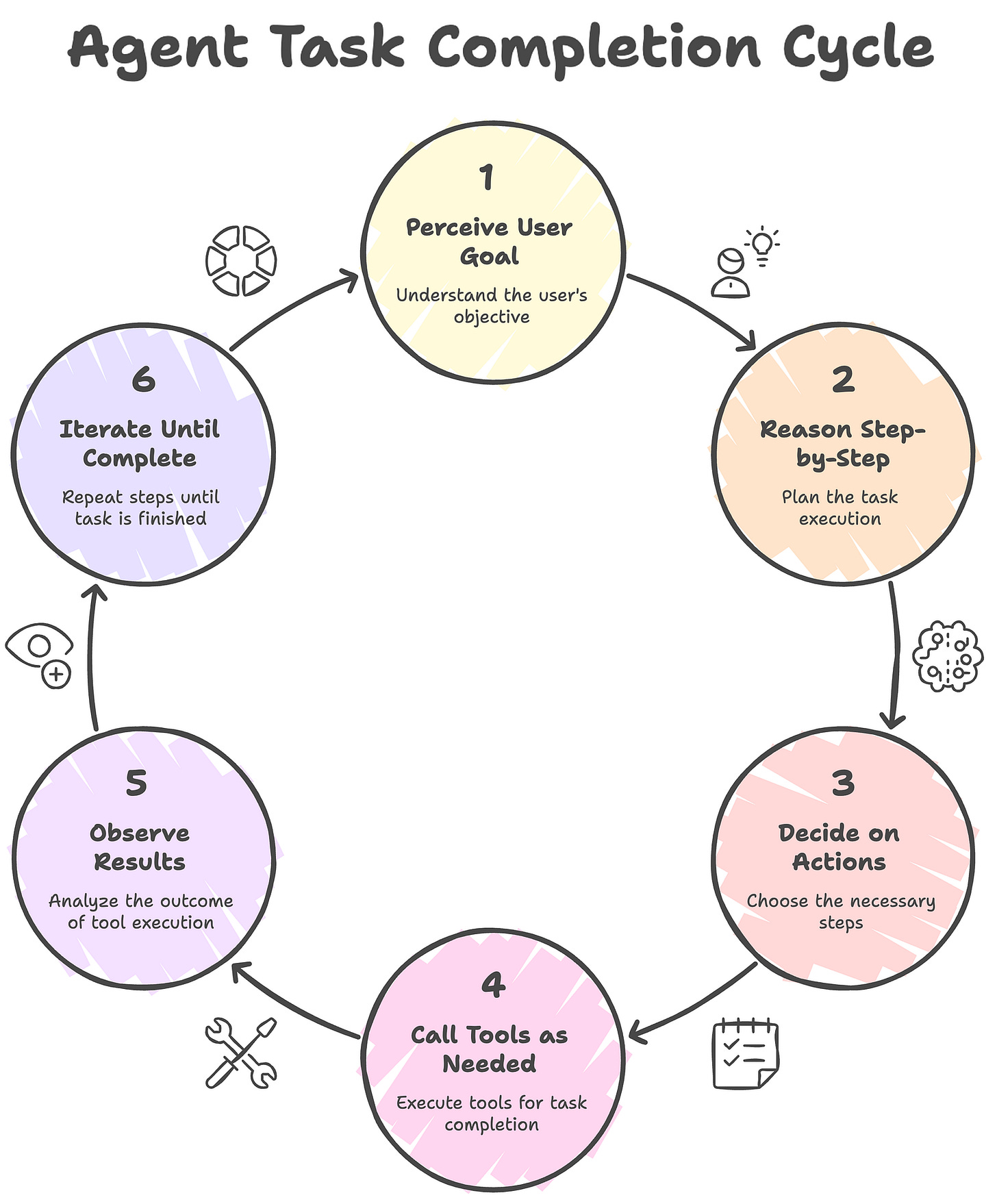

The agent perceives a user goal, reasons step-by-step, decides on actions, calls tools as needed, observes results, and iterates until the task is complete.
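The perceive, reason, act, observe loop can be sketched in a few lines of Python. This is a minimal illustration, not how Auto-GPT or any particular ReAct library is implemented: `scripted_llm` is a hypothetical stand-in for a real model call, and `lookup_capital` is a made-up tool.

```python
from typing import Callable

# Hypothetical tool; a real agent would wrap search, code execution, APIs, etc.
TOOLS: dict[str, Callable[..., str]] = {
    "lookup_capital": lambda country: {"France": "Paris", "Japan": "Tokyo"}.get(country, "unknown"),
}

def scripted_llm(history: list[dict]) -> dict:
    """Stand-in for an LLM (assumption): requests one tool call, then gives a final answer."""
    observations = [m for m in history if m["role"] == "tool"]
    if not observations:
        return {"type": "tool_call", "name": "lookup_capital", "args": {"country": "France"}}
    return {"type": "final", "content": f"The capital is {observations[-1]['content']}."}

def run_agent(goal: str, llm=scripted_llm, max_steps: int = 5) -> str:
    """Iterate reason -> act -> observe until the model answers or the step budget runs out."""
    history = [{"role": "user", "content": goal}]          # perceive the goal
    for _ in range(max_steps):
        decision = llm(history)                             # reason and decide
        if decision["type"] == "final":
            return decision["content"]                      # task complete
        result = TOOLS[decision["name"]](**decision["args"])  # act
        history.append({"role": "tool", "content": result})   # observe
    return "Stopped: step limit reached."

print(run_agent("What is the capital of France?"))  # The capital is Paris.
```

The `max_steps` cap is one simple guard against an agent that never decides it is finished.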

What are tools?

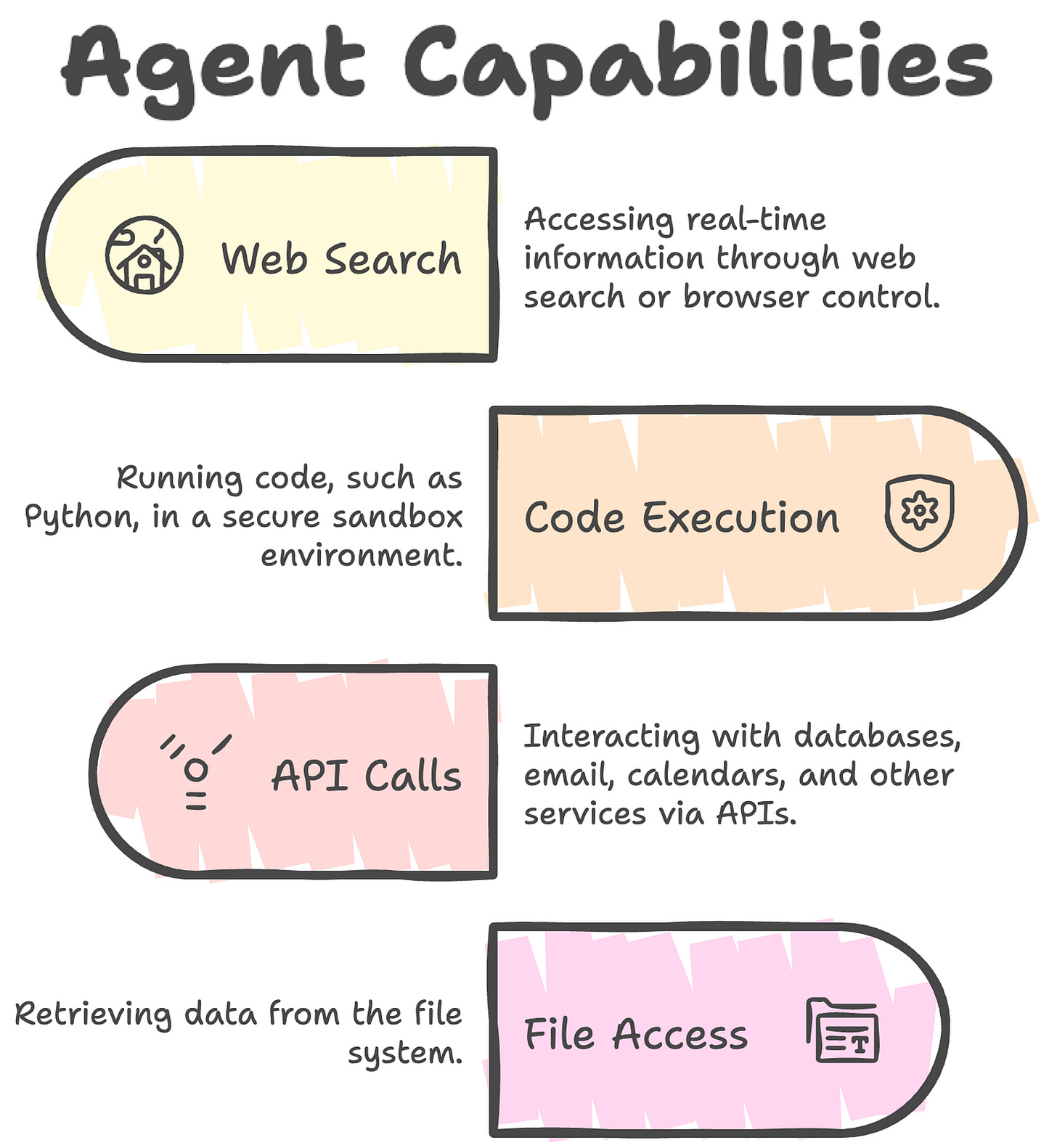

Tools are external functions that the LLM can invoke to extend its capabilities beyond pure text generation. Examples include:

Web search or browser control for real-time information.

Code execution (e.g., running Python in a sandbox).

API calls (e.g., to databases, email, or calendars).

File system access or data retrieval.

The LLM doesn’t execute code itself — it outputs a structured request for a specific tool with parameters, the system runs it, and feeds the result back.
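A minimal sketch of the system side of a tool call, assuming the model emits a JSON object with hypothetical `tool` and `arguments` keys (real providers each define their own schema). The registry, `get_weather` and its stubbed forecast are illustrative only.

```python
import json
from typing import Any, Callable

def get_weather(city: str) -> dict[str, Any]:
    """Hypothetical tool with stubbed data; a real one would call a weather API."""
    return {"city": city, "forecast": "sunny"}

# Registry mapping tool names to the Python functions the *system* executes.
REGISTRY: dict[str, Callable[..., Any]] = {"get_weather": get_weather}

def execute_tool_request(raw_model_output: str) -> str:
    """Parse the model's structured request, run the tool, and return a result to feed back."""
    try:
        request = json.loads(raw_model_output)
        fn = REGISTRY[request["tool"]]
        result = fn(**request.get("arguments", {}))
        return json.dumps({"tool": request["tool"], "result": result})
    except (json.JSONDecodeError, KeyError, TypeError) as err:
        # Errors go back to the model as text so it can correct itself on the next turn.
        return json.dumps({"error": f"{type(err).__name__}: {err}"})
```

Returning errors as data rather than raising keeps the loop alive and gives the model a chance to fix a malformed request.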

Considerations

As tasks grew more complex, agents needed more tools.

This led to the tool explosion problem: too many tools overwhelm the LLM’s context window, reduce reliability and make tool selection error-prone.

Research has suggested that performance degrades significantly once an agent has more than roughly 10–20 tools, which raises the question: when should you add more agents instead of more tools?
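One common response to tool explosion is to show the model only the most relevant tools for each request. The sketch below assumes a simple word-overlap ranking and a hypothetical budget `MAX_TOOLS_IN_PROMPT`; production systems often use embedding search instead, and the tool names are made up.

```python
import re

MAX_TOOLS_IN_PROMPT = 10  # hypothetical budget, within the ~10-20 range mentioned above

def tokens(text: str) -> set[str]:
    """Lower-case word set used for a naive relevance score."""
    return set(re.findall(r"[a-z]+", text.lower()))

def select_tools(query: str, catalogue: dict[str, str], k: int = MAX_TOOLS_IN_PROMPT) -> list[str]:
    """Rank tools by word overlap between query and description; keep at most k matching tools."""
    query_words = tokens(query)
    scored = [(len(query_words & tokens(desc)), name) for name, desc in catalogue.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best overlap first, ties broken by name
    return [name for score, name in scored[:k] if score > 0]

# Hypothetical catalogue; only the selected subset would be sent to the model.
CATALOGUE = {
    "web_search": "search the web for current news and information",
    "send_email": "send an email message to a recipient",
    "run_python": "execute python code in a sandbox",
    "read_file": "read a file from the file system",
}

print(select_tools("python news search", CATALOGUE))  # ['web_search', 'run_python']
```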

This architecture is simple and effective for prototyping but struggles with highly specialised or long-horizon tasks.

Agentic Workflows (Multi-Agent Orchestration)

This approach shifts from one generalist agent to orchestrated teams of lighter, specialised agents.

Core Idea

Multiple purpose-built agents act as nodes in a directed graph or workflow.

Each node handles a specific sub-task (research, planning, execution, critique).

Data flows along “edges” (conditional transitions), creating structured pipelines.
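The graph idea can be shown in plain Python without any framework: nodes transform a shared state, and edges are functions that choose the next node. This is a hypothetical sketch of the pattern, not the LangGraph, AutoGen or Agent Builder API; the three node functions and the approval rule are placeholders for real model calls.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]

# Hypothetical specialised "agents"; in practice each could call its own (possibly smaller) model.
def research(state: State) -> State:
    return {**state, "notes": f"facts about {state['topic']}"}

def draft(state: State) -> State:
    revision = state.get("revision", 0) + 1
    return {**state, "revision": revision, "draft": f"v{revision}: {state['notes']}"}

def critique(state: State) -> State:
    # Toy rule (assumption): approve from the second revision onward.
    return {**state, "approved": state["revision"] >= 2}

NODES: dict[str, Node] = {"research": research, "draft": draft, "critique": critique}

# Each edge maps the current state to the next node name, or None to stop.
EDGES: dict[str, Callable[[State], Optional[str]]] = {
    "research": lambda s: "draft",
    "draft": lambda s: "critique",
    "critique": lambda s: None if s["approved"] else "draft",  # conditional transition
}

def run_workflow(start: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph from `start`, applying each node, until an edge returns None."""
    node: Optional[str] = start
    steps = 0
    while node is not None:
        if steps >= max_steps:
            raise RuntimeError("workflow did not terminate")
        state = NODES[node](state)
        node = EDGES[node](state)
        steps += 1
    return state
```

Calling `run_workflow("research", {"topic": "agents"})` visits research, draft, critique, draft and critique again, because the toy critic only approves the second revision. The `max_steps` guard keeps a cyclic graph from looping forever.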

Origins and Examples

OpenAI launched AgentKit, which includes Agent Builder, a visual, no-code canvas for composing multi-agent workflows with versioning, guardrails and easy deployment.

Other frameworks like LangGraph, AutoGen, and Semantic Kernel support graph-based orchestration.

Considerations

This approach introduces some rigidity (predefined paths) compared with fully autonomous single agents, which can feel like a step back to traditional workflow automation.

However, it enables reliability, parallelism (running nodes concurrently), cost efficiency (using smaller models per node), and easier debugging/monitoring.
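Parallelism in a workflow usually means fanning out to independent nodes and joining their results. A minimal `asyncio` sketch, assuming each node is an I/O-bound call simulated here with `asyncio.sleep`; the node names are hypothetical.

```python
import asyncio

async def specialist(name: str, task: str, delay: float) -> str:
    """Stand-in for a node that calls a model or API (simulated with a sleep)."""
    await asyncio.sleep(delay)
    return f"{name} finished {task}"

async def fan_out_fan_in(task: str) -> list[str]:
    # Independent nodes run concurrently; gather returns results in argument order.
    results = await asyncio.gather(
        specialist("web_researcher", task, 0.3),
        specialist("docs_researcher", task, 0.2),
        specialist("data_analyst", task, 0.25),
    )
    return list(results)

if __name__ == "__main__":
    print(asyncio.run(fan_out_fan_in("market scan")))
```

Total latency is roughly that of the slowest node rather than the sum of all three, which is where the parallelism benefit comes from.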

It’s ideal for enterprise scenarios needing predictability and multi-model orchestration.

By 2025 this has become a dominant pattern, especially for production systems.

LLM Skills (Modular Capabilities via Pro-Code Extensions)

Here, the focus is on extending a core LLM with reusable, composable “skills” rather than proliferating agents or rigid tools.

Core Idea

Skills are packaged knowledge + code (instructions, scripts, templates) that the LLM loads dynamically when relevant.

They provide domain expertise, procedural workflows, or code execution without needing full multi-agent setups.

Key Developments

Anthropic pioneered Agent Skills: modular folders (each with a SKILL.md file) containing instructions and optional scripts.

Claude loads them progressively (token-efficient) and uses them for tasks like document manipulation or custom organisational playbooks.
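Progressive loading can be approximated in two stages: keep only each skill's name and description in context, then read the full `SKILL.md` when a task looks relevant. This sketch assumes a folder-per-skill layout with `name` and `description` in the frontmatter. It is not Anthropic's implementation, where the model itself decides which skill to load; the keyword matching here is a deliberately naive stand-in for that decision.

```python
import tempfile
from pathlib import Path

def read_frontmatter(skill_md: Path) -> dict[str, str]:
    """Parse simple 'key: value' lines between leading '---' markers (not a full YAML parser)."""
    lines = skill_md.read_text(encoding="utf-8").splitlines()
    meta: dict[str, str] = {}
    if lines and lines[0].strip() == "---":
        for line in lines[1:]:
            if line.strip() == "---":
                break
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return meta

def index_skills(root: Path) -> dict[str, dict[str, str]]:
    """Stage 1: record only name, description and path for each skill folder (cheap to keep in context)."""
    index: dict[str, dict[str, str]] = {}
    for skill_md in sorted(root.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_md)
        name = meta.get("name", skill_md.parent.name)
        index[name] = {"description": meta.get("description", ""), "path": str(skill_md)}
    return index

def load_relevant(task: str, index: dict[str, dict[str, str]]) -> list[str]:
    """Stage 2: read full SKILL.md files only for skills whose description shares a word with the task."""
    task_words = set(task.lower().split())
    return [
        Path(entry["path"]).read_text(encoding="utf-8")
        for entry in index.values()
        if task_words & set(entry["description"].lower().split())
    ]

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skill_dir = Path(tmp, "pdf-forms")  # hypothetical example skill
        skill_dir.mkdir()
        (skill_dir / "SKILL.md").write_text(
            "---\nname: pdf-forms\ndescription: Fill and edit PDF forms\n---\nStep-by-step instructions...\n",
            encoding="utf-8",
        )
        idx = index_skills(Path(tmp))
        print(list(idx))                                 # ['pdf-forms']
        print(len(load_relevant("edit this pdf", idx)))  # 1
```

Only the short index costs tokens on every request; full instructions are paid for only when a skill is actually used.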

Researchers argue this is superior to building an ever-growing number of specialised agents: focus on a universal agent plus a skill library instead.

OpenAI supports parallel and chained function calling natively (multiple tools in one response, or sequential over turns).

Their Agents SDK allows code-first orchestration, including running functions concurrently.
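Parallel and chained calls can be illustrated with a thread pool: every call in one response runs concurrently, and a later turn consumes the results. The call format (`name` plus `arguments`) and both tools are hypothetical stubs, not the OpenAI SDK's actual objects.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable

# Hypothetical tools (stubs); real ones would call external APIs.
def get_price(symbol: str) -> float:
    return {"AAA": 10.0, "BBB": 25.0}[symbol]

def convert(amount: float, rate: float) -> float:
    return round(amount * rate, 2)

TOOLS: dict[str, Callable[..., Any]] = {"get_price": get_price, "convert": convert}

def run_parallel(calls: list[dict[str, Any]]) -> list[Any]:
    """Execute every tool call from one model response concurrently; results keep call order."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(TOOLS[c["name"]], **c["arguments"]) for c in calls]
        return [f.result() for f in futures]

# Turn 1 (parallel): the model requests two independent prices in a single response.
prices = run_parallel([
    {"name": "get_price", "arguments": {"symbol": "AAA"}},
    {"name": "get_price", "arguments": {"symbol": "BBB"}},
])
# Turn 2 (chained): a later call depends on the earlier results.
total_converted = run_parallel([{"name": "convert", "arguments": {"amount": sum(prices), "rate": 0.9}}])[0]
```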

xAI (Grok): the xAI SDK and Agent Tools API provide lightweight extensions for tools such as web/X search, code execution and file interaction, often run server-side for simplicity.

Considerations

Skills blur the line between tools and agents (and the model to some degree) by making extensions more contextual and reusable.

They reduce cognitive load (fewer overlapping tools) and enable local, file-based actions (such as writing code to accomplish a goal). Support for parallel and chained calls makes them dynamic, but advanced use relies on pro-code SDKs.

This architecture emphasises composability and expertise injection, aligning with trends toward modular, skill-based systems over pure agent proliferation.

Overall Trends

In 2025, the field has largely moved beyond pure monoliths toward hybrids: workflows for orchestration + skills for specialisation.

Leading surveys and industry reports highlight a clear shift away from pure monolithic agents toward modular, multi-agent, and hybrid designs.

The field recognises that no single architecture dominates all use cases — instead, the optimal choice depends on requirements like reliability, scalability, cost, and task complexity.

Monolithic agents remain useful for rapid prototyping and simple tasks, but multi-agent workflows and skill libraries address their limitations in specialisation and long-horizon reliability.

By late 2025, hybrids prevail: orchestrated workflows with injected skills for domain expertise, powered by efficient models (such as the NVIDIA Nemotron series for multi-agent systems).

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.
