Universal Agents

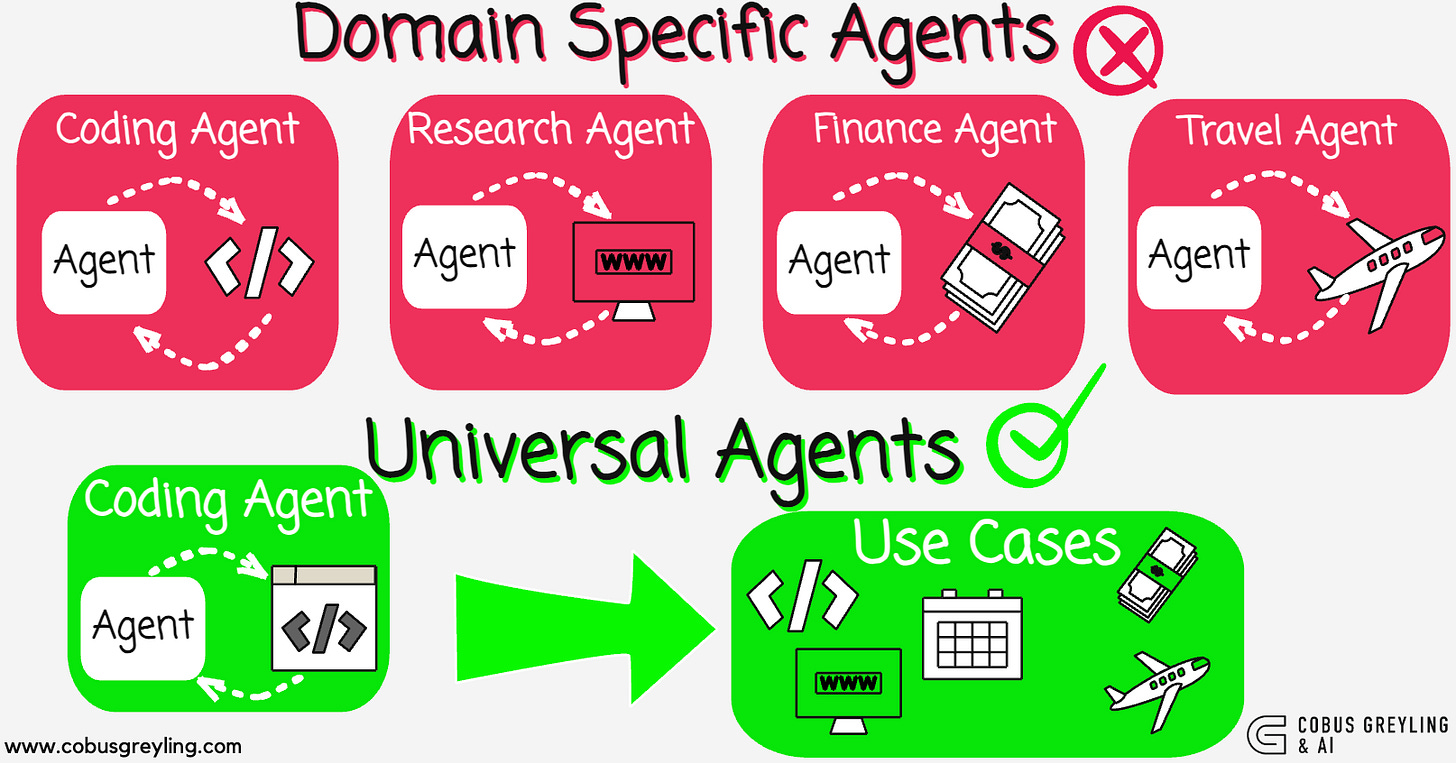

I wanted to write a definitive piece on Universal Agents. I cannot help but think that universal agents are the future of AI Agents, to a large extent at least.

I also think that sandboxing (via Docker or other methods) will become increasingly important.

Why Universal Agents?

Tools form an integral part of AI Agents.

Tools can be described as the hands and feet of AI Agents. They are the integration points to things like web browsing, MCP servers, CRM, ERM systems, and so on.

So it is clear that tools are the bottleneck: they confine the scope and ability of the AI Agent to a specific domain or pre-defined scope.

The other elements are already taken care of: decomposition of complex and compound tasks, reasoning, knowing when a task is completed, and so on.

But tools are the bottleneck. If tools can be dynamic, and integration code can be written on the fly, the power of AI Agents is truly leveraged.
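To make "integration code written on the fly" a little more concrete, here is a minimal sketch, not a definitive implementation. It assumes the model has returned Python source for a new tool as a string; the hard-coded `generated_source` stands in for that model output. `ToolRegistry`, `register_from_source` and `celsius_to_fahrenheit` are hypothetical names chosen for illustration.

```python
from typing import Callable, Dict


class ToolRegistry:
    """Holds tools by name and lets new ones be added at runtime."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}

    def register_from_source(self, name: str, source: str) -> None:
        """Compile generated source and register the function called `name`.

        WARNING: exec() runs arbitrary code. Only do this inside a sandbox.
        """
        namespace: dict = {}
        exec(source, namespace)
        func = namespace.get(name)
        if not callable(func):
            raise ValueError(f"source did not define a callable named {name!r}")
        self._tools[name] = func

    def call(self, name: str, **kwargs: object) -> object:
        """Invoke a registered tool by name with keyword arguments."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)


# Stand-in for code an LLM would write on the fly (hard-coded assumption).
generated_source = '''
def celsius_to_fahrenheit(celsius):
    return celsius * 9 / 5 + 32
'''

registry = ToolRegistry()
registry.register_from_source("celsius_to_fahrenheit", generated_source)
print(registry.call("celsius_to_fahrenheit", celsius=100))  # 212.0
```

The point of the sketch is that the tool set is no longer fixed at design time. The price is that generated code runs with the agent's own privileges unless it is isolated.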

Universal Agents: Definition, Examples & Advantages

Core Definition

A universal agent is an AI system that can:

1. Perceive its environment (read files, observe system state, browse web)

2. Reason about tasks and break them into steps

3. Act on the environment (write code, execute code, use APIs)

4. Learn from feedback (see errors, iterate, improve)

5. Operate autonomously across extended time horizons
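The act, observe and iterate steps in this list can be read as one loop. The toy sketch below is model-free and only illustrates the shape of that loop: `propose_fix` is a hypothetical stand-in for the reasoning step (a real agent would call an LLM there) and handles exactly one hard-coded failure, and the "environment" is just a child Python process.

```python
import subprocess
import sys
from typing import List, Tuple


def act(code: str) -> Tuple[bool, str]:
    """Act: run the code in a child interpreter; return (ok, feedback)."""
    proc = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True, text=True, timeout=10,
    )
    return proc.returncode == 0, (proc.stdout + proc.stderr).strip()


def propose_fix(attempt: str, feedback: str) -> str:
    """Reason/learn: hypothetical stand-in for an LLM call.

    Handles one hard-coded failure: a NameError caused by a missing
    `import math`. Anything else is returned unchanged.
    """
    if "NameError" in feedback and "math" in feedback:
        return "import math\n" + attempt
    return attempt


def run_loop(first_attempt: str, max_steps: int = 3) -> List[str]:
    """Repeat act -> observe -> revise until success or max_steps."""
    history: List[str] = []
    attempt = first_attempt
    for _ in range(max_steps):
        ok, feedback = act(attempt)
        history.append(feedback)  # perceive: keep what the environment said
        if ok:
            break
        attempt = propose_fix(attempt, feedback)
    return history


history = run_loop("print(math.floor(2.7))")
print(len(history), history[-1])  # 2 2
```

The first attempt fails with a `NameError`, the feedback is used to revise the code, and the second attempt succeeds.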

Some Concrete Examples

Software Development Agent

User: “Add authentication to my web app”

Universal Agent:

├─ Reads existing codebase structure

├─ Researches current auth patterns used

├─ Writes auth middleware (server/auth.js)

├─ Updates routes to use middleware

├─ Generates JWT secret, updates .env

├─ Writes unit tests

├─ Runs tests → sees 2 failures

├─ Debugs and fixes the failures

├─ Runs tests again → all pass

├─ Commits changes with descriptive message

└─ Result: Working authentication system

Data Analysis Agent

User: “Analyze Q4 sales and create a report”

Universal Agent:

├─ Connects to database

├─ Queries sales data

├─ Discovers data quality issues

├─ Writes cleaning script

├─ Executes statistical analysis

├─ Generates visualizations (saves as PNG)

├─ Writes Markdown report

├─ Exports to PDF

├─ Emails report to stakeholders

└─ Result: Complete report delivered

System Administration Agent

User: “Deploy the app to production”

Universal Agent:

├─ Checks current branch and tests

├─ Runs test suite

├─ Builds Docker image

├─ Pushes to registry

├─ Updates Kubernetes manifests

├─ Applies manifests to cluster

├─ Monitors rollout status

├─ Runs smoke tests on production

├─ Detects issue → rolls back

├─ Analyzes logs, fixes bug

├─ Retries deployment successfully

└─ Result: App deployed and healthy

Tools Are Limiting

Universal AI Agents transcend the constraints of pre-defined tools, enabling seamless adaptation to diverse tasks without rigid boundaries.

This advantage emerges from the ongoing debate on tool limitations in traditional AI Agents.

Abstraction mismatches further highlight the problem.

An AI Agent with 50 specialised tools might excel in narrow domains but falter on simple requirements, such as executing an arbitrary bash command.

Confined to predefined APIs, it cannot access broader system operations, restricting its versatility.
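One way to read this: a single general-purpose tool can cover ground that dozens of narrow ones cannot. As a sketch only, here is what a bash tool might look like in the same name/description/input_schema format the script later in this article uses. `run_bash` and `handle_run_bash` are hypothetical names, and the sketch assumes `bash` is on the PATH.

```python
import subprocess

# Tool definition in the name/description/input_schema format.
# "run_bash" is a hypothetical tool name used for illustration.
RUN_BASH_TOOL = {
    "name": "run_bash",
    "description": "Run a bash command and return its combined output",
    "input_schema": {
        "type": "object",
        "properties": {
            "command": {"type": "string", "description": "Command to run"}
        },
        "required": ["command"],
    },
}


def handle_run_bash(tool_input: dict, timeout: int = 10) -> str:
    """Run the command with a timeout; return stdout, stderr and exit code."""
    try:
        proc = subprocess.run(
            ["bash", "-c", tool_input["command"]],
            capture_output=True, text=True, timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return "Error: command timed out"
    return f"{proc.stdout}{proc.stderr}[exit code {proc.returncode}]"


print(handle_run_bash({"command": "echo hello"}))
```

Returning the exit code alongside the output gives the model a clear signal to iterate on. Running arbitrary shell commands like this is only reasonable inside an isolated environment.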

In contrast, universal AI Agents address these flaws by embracing flexible architectures — such as dynamic tool generation or unrestricted code execution — ensuring they adapt fluidly to any user demand, fostering true autonomy and efficiency.
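Unrestricted code execution is where the sandboxing point from the introduction comes in. As a sketch, assuming Docker is installed and the `python:3.12-slim` image can be pulled, generated code could be run in a throwaway container with no network access. `docker_command` and `run_sandboxed` are hypothetical helpers, and the resource limits are arbitrary illustrative values.

```python
import subprocess
from typing import List


def docker_command(code: str, image: str = "python:3.12-slim") -> List[str]:
    """Build a `docker run` invocation for untrusted Python code."""
    return [
        "docker", "run",
        "--rm",               # delete the container afterwards
        "--network", "none",  # no network access
        "--memory", "256m",   # cap memory (illustrative value)
        "--cpus", "1",        # cap CPU (illustrative value)
        "--read-only",        # read-only root filesystem
        image, "python", "-c", code,
    ]


def run_sandboxed(code: str, timeout: int = 30) -> str:
    """Run the code in the container; return stdout, or stderr on failure."""
    proc = subprocess.run(
        docker_command(code), capture_output=True, text=True, timeout=timeout
    )
    return proc.stdout if proc.returncode == 0 else proc.stderr


# Printing the command needs no Docker; call run_sandboxed() to execute it.
print(" ".join(docker_command("print(1 + 1)")))
```

Note that the `timeout` here stops the `docker` client process; the container itself may keep running, so a production setup would likely also need to stop the container explicitly.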

Anthropic Skills vs Universal Agents

The most effective AI systems will likely combine a universal agent with composable skills rather than forcing a choice between the two philosophies.

Anthropic’s “build skills, not agents” principle emphasises creating small, focused, reusable capabilities instead of narrow, single-purpose agents.

The advantage lies in modularity: once a skill is improved or added, every workflow that depends on it benefits immediately.

Users learn each capability once and then apply it across many contexts.

Overhead drops because there is no constant spawning of specialised agents. Claude Code illustrates this clearly — discrete commands such as /commit, /review-pr, or /test become building blocks that can be invoked precisely when needed.

At the same time, the vision of a true universal agent remains compelling.

A single general-purpose system can adapt to completely novel situations, preserve coherent context across long task sequences, discover unexpected solution paths through open-ended reasoning, and relieve users of the burden of deciding which specialised tool to call.

These two ideas are not in opposition; they reinforce each other. For now.

The strongest architecture appears to be a universal agent that owns the high-level reasoning, planning and adaptation, while being equipped with a library of well-engineered skills.

The agent evaluates each situation and chooses intelligently, sometimes invoking a specialised skill for efficiency, reliability and safety, and sometimes falling back to pure reasoning when the task falls outside existing patterns.

Skills in this setup function as optimised sub-routines and guardrails, especially for high-stakes operations, while the agent retains the flexibility to improvise.

It is a deliberate hybrid where the universal agent orchestrates and the skills accelerate and safeguard.
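A rough sketch of this hybrid follows. It is deliberately simplified: routing is done by matching a slash-command prefix, whereas in the design described above the agent itself would decide when to invoke a skill. The `SKILLS` table, its handlers and `general_reasoning` are hypothetical stand-ins.

```python
from typing import Callable, Dict

Skill = Callable[[str], str]

# Hypothetical skill library: slash command -> handler.
SKILLS: Dict[str, Skill] = {
    "/commit": lambda args: f"[skill] committed with message: {args}",
    "/test": lambda args: f"[skill] ran tests in: {args or '.'}",
}


def general_reasoning(request: str) -> str:
    """Stand-in for the open-ended agent loop (an LLM call in practice)."""
    return f"[agent] planning from scratch for: {request}"


def handle(request: str) -> str:
    """Prefer a skill when one matches; otherwise fall back to the agent."""
    parts = request.split(maxsplit=1)
    command = parts[0] if parts else ""
    args = parts[1] if len(parts) > 1 else ""
    if command in SKILLS:
        return SKILLS[command](args)
    return general_reasoning(request)


print(handle("/commit fix login bug"))
print(handle("migrate the database to Postgres"))
```

The first call is served by a skill, the second falls through to the general path. Adding or improving an entry in `SKILLS` changes behaviour for every request that uses it, which is the modularity argument made earlier.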

Claude Code Generated Universal Agent

I asked Claude Code to create a minimal universal agent for me. Below you can see the basic description and the output from Claude.

This is the easiest and fastest way to prototype, by getting Claude Code to generate it for you.

Notice also I define what the output should be and I give detail on how the application should be written. Creating a contextual reference is very important.

"""
Minimal Universal Agent Example

Demonstrates core universal agent capabilities:
- Perceive (read files, check environment)
- Reason (plan steps with Claude)
- Act (write files, execute code)
- Learn (iterate based on feedback)
"""

import os
import json
import subprocess

# Lazy import to avoid initialization errors
client = None


def get_client():
    """Lazy initialization of Anthropic client."""
    global client
    if client is None:
        from anthropic import Anthropic
        client = Anthropic(api_key=os.environ.get("ANTHROPIC_API_KEY"))
    return client


# Define tools the agent can use
TOOLS = [
    {
        "name": "read_file",
        "description": "Read contents of a file from the filesystem",
        "input_schema": {
            "type": "object",
            "properties": {
                "path": {
                    "type": "string",
                    "description": "Path to the file to read"
                }
            },
            "required": ["path"]
        }
    },
    {
        "name": "write_file",
        "description": "Write content to a file on the filesystem",
        "input_schema": {
            "type": "object",
            "properties": {
                "path": {
                    "type": "string",
                    "description": "Path where the file should be written"
                },
                "content": {
                    "type": "string",
                    "description": "Content to write to the file"
                }
            },
            "required": ["path", "content"]
        }
    },
    {
        "name": "execute_code",
        "description": "Execute Python code and return the output",
        "input_schema": {
            "type": "object",
            "properties": {
                "code": {
                    "type": "string",
                    "description": "Python code to execute"
                }
            },
            "required": ["code"]
        }
    },
    {
        "name": "list_files",
        "description": "List files in a directory",
        "input_schema": {
            "type": "object",
            "properties": {
                "path": {
                    "type": "string",
                    "description": "Directory path to list"
                }
            },
            "required": ["path"]
        }
    }
]


def execute_tool(tool_name: str, tool_input: dict) -> str:
    """Execute a tool and return the result."""
    if tool_name == "read_file":
        try:
            with open(tool_input["path"], "r") as f:
                return f.read()
        except Exception as e:
            return f"Error reading file: {str(e)}"

    elif tool_name == "write_file":
        try:
            # Note: for a bare filename such as "hello.py", dirname() returns ""
            # and os.makedirs("") raises FileNotFoundError. That is the
            # "[Errno 2]" error visible in the output further down.
            os.makedirs(os.path.dirname(tool_input["path"]), exist_ok=True)
            with open(tool_input["path"], "w") as f:
                f.write(tool_input["content"])
            return f"Successfully wrote to {tool_input['path']}"
        except Exception as e:
            return f"Error writing file: {str(e)}"

    elif tool_name == "execute_code":
        try:
            # Run the code in a separate process with a timeout.
            # This is process isolation only, not a sandbox.
            result = subprocess.run(
                ["python3", "-c", tool_input["code"]],
                capture_output=True,
                text=True,
                timeout=10
            )
            # stderr is only returned when stdout is empty
            output = result.stdout if result.stdout else result.stderr
            return output if output else "Code executed successfully (no output)"
        except subprocess.TimeoutExpired:
            return "Error: Code execution timed out"
        except Exception as e:
            return f"Error executing code: {str(e)}"

    elif tool_name == "list_files":
        try:
            files = os.listdir(tool_input["path"])
            return "\n".join(files)
        except Exception as e:
            return f"Error listing files: {str(e)}"

    return f"Unknown tool: {tool_name}"


def run_agent(task: str, max_iterations: int = 10) -> None:
    """
    Run the universal agent on a task.

    The agent loop:
    1. Send task + conversation history to Claude
    2. Claude decides to use tools or respond
    3. Execute tools and feed results back
    4. Repeat until task complete or max iterations
    """
    print(f"\n{'='*60}")
    print(f"TASK: {task}")
    print(f"{'='*60}\n")

    messages = [
        {
            "role": "user",
            "content": task
        }
    ]

    for iteration in range(1, max_iterations + 1):
        print(f"\n--- Iteration {iteration} ---")

        # Call Claude with tools
        response = get_client().messages.create(
            model="claude-sonnet-4-20250514",
            max_tokens=4096,
            tools=TOOLS,
            messages=messages
        )

        print(f"Stop reason: {response.stop_reason}")

        # Process response
        tool_uses = []
        text_response = ""

        for block in response.content:
            if block.type == "text":
                text_response += block.text
                print(f"\nAgent: {block.text}")
            elif block.type == "tool_use":
                tool_uses.append(block)
                print(f"\nTool: {block.name}")
                print(f"Input: {json.dumps(block.input, indent=2)}")

        # If the model ended its turn without requesting tools, the agent is done
        if response.stop_reason == "end_turn":
            print(f"\n{'='*60}")
            print("TASK COMPLETE")
            print(f"{'='*60}")
            break

        # Execute tools and collect results
        if tool_uses:
            # Add assistant message to conversation
            messages.append({
                "role": "assistant",
                "content": response.content
            })

            # Execute each tool
            tool_results = []
            for tool_use in tool_uses:
                result = execute_tool(tool_use.name, tool_use.input)
                print(f"Result: {result[:200]}...")  # Show first 200 chars
                tool_results.append({
                    "type": "tool_result",
                    "tool_use_id": tool_use.id,
                    "content": result
                })

            # Add tool results to conversation
            messages.append({
                "role": "user",
                "content": tool_results
            })
    else:
        # Only reached when the loop ends without a break (no end_turn seen)
        print(f"\n⚠️ Reached max iterations ({max_iterations})")


# Example usage
if __name__ == "__main__":
    # Example 1: Simple task
    run_agent(
        "Create a file called 'hello.py' that prints 'Hello, Universal Agent!' "
        "and then execute it to verify it works."
    )

    print("\n\n" + "="*60 + "\n")

    # Example 2: More complex task with iteration
    run_agent(
        "Create a Python script called 'calculator.py' that has an add function. "
        "Then create a test file 'test_calculator.py' that tests the add function. "
        "Run the test to make sure it passes."
    )

And the output…

============================================================

TASK: Create a file called ‘hello.py’ that prints ‘Hello, Universal Agent!’ and then execute it to verify it works.

============================================================

--- Iteration 1 ---

Stop reason: tool_use

Agent: I’ll create the Python file and then execute it to verify it works.

Tool: write_file

Input: {

“path”: “hello.py”,

“content”: “print(’Hello, Universal Agent!’)”

}

Result: Error writing file: [Errno 2] No such file or directory: ‘’...

--- Iteration 2 ---

Stop reason: tool_use

Agent: Let me try a different approach and execute the code directly to verify it works:

Tool: execute_code

Input: {

“code”: “# First, let’s create the file content and write it\ncontent = \”print(’Hello, Universal Agent!’)\”\n\n# Write the file\nwith open(’hello.py’, ‘w’) as f:\n f.write(content)\n\nprint(\”File ‘hello.py’ created successfully!\”)\n\n# Now execute the file to verify it works\nexec(open(’hello.py’).read())”

}

Result: File ‘hello.py’ created successfully!

Hello, Universal Agent!

...

--- Iteration 3 ---

Stop reason: end_turn

Agent: Perfect! I’ve successfully:

1. Created the file `hello.py` with the content `print(’Hello, Universal Agent!’)`

2. Executed the file to verify it works correctly

The output shows that the file was created successfully and when executed, it prints “Hello, Universal Agent!” as expected.

============================================================

TASK COMPLETE

============================================================

============================================================
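The `[Errno 2]` failure in iteration 1 of the run above deserves a short note. It appears to come from the `write_file` tool itself rather than from the model: for a bare filename such as `hello.py`, `os.path.dirname` returns an empty string, and `os.makedirs("")` raises `FileNotFoundError`. The agent worked around it, which is a nice illustration of iterating on feedback. One possible fix, sketched here with a hypothetical helper named `safe_write`, is to create the parent directory only when there is one.

```python
import os
import tempfile


def safe_write(path: str, content: str) -> str:
    """Write content to path, creating parent directories only if needed."""
    parent = os.path.dirname(path)
    if parent:  # empty for bare filenames like "hello.py"
        os.makedirs(parent, exist_ok=True)
    with open(path, "w") as f:
        f.write(content)
    return f"Successfully wrote to {path}"


# Demo in a temporary working directory so nothing is left behind.
original_cwd = os.getcwd()
with tempfile.TemporaryDirectory() as tmp:
    os.chdir(tmp)
    try:
        print(os.path.dirname("hello.py") == "")  # True
        print(safe_write("hello.py", "print('hi')"))
        print(safe_write("pkg/mod.py", "x = 1"))
    finally:
        os.chdir(original_cwd)
```

With this change the first `write_file` call would likely have succeeded, and the run would have needed one iteration fewer.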

============================================================

TASK: Create a Python script called ‘calculator.py’ that has an add function. Then create a test file ‘test_calculator.py’ that tests the add function. Run the test to make sure it passes.

============================================================

--- Iteration 1 ---

Stop reason: tool_use

Agent: I’ll help you create a calculator script with an add function and a corresponding test file. Let me start by creating the calculator.py file.

Tool: write_file

Input: {

“path”: “calculator.py”,

“content”: “def add(a, b):\n \”\”\”\n Add two numbers and return the result.\n \n Args:\n a: First number\n b: Second number\n \n Returns:\n The sum of a and b\n \”\”\”\n return a + b”

}

Result: Error writing file: [Errno 2] No such file or directory: ‘’...

--- Iteration 2 ---

Stop reason: tool_use

Agent: Let me try creating the file in the current directory:

Tool: write_file

Input: {

“path”: “./calculator.py”,

“content”: “def add(a, b):\n \”\”\”\n Add two numbers and return the result.\n \n Args:\n a: First number\n b: Second number\n \n Returns:\n The sum of a and b\n \”\”\”\n return a + b”

}

Result: Successfully wrote to ./calculator.py...

--- Iteration 3 ---

Stop reason: tool_use

Agent: Now let me create the test file:

Tool: write_file

Input: {

“path”: “./test_calculator.py”,

“content”: “import unittest\nfrom calculator import add\n\nclass TestCalculator(unittest.TestCase):\n \n def test_add_positive_numbers(self):\n \”\”\”Test adding two positive numbers\”\”\”\n result = add(3, 5)\n self.assertEqual(result, 8)\n \n def test_add_negative_numbers(self):\n \”\”\”Test adding two negative numbers\”\”\”\n result = add(-2, -3)\n self.assertEqual(result, -5)\n \n def test_add_mixed_numbers(self):\n \”\”\”Test adding positive and negative numbers\”\”\”\n result = add(10, -3)\n self.assertEqual(result, 7)\n \n def test_add_zero(self):\n \”\”\”Test adding zero\”\”\”\n result = add(5, 0)\n self.assertEqual(result, 5)\n \n def test_add_floats(self):\n \”\”\”Test adding floating point numbers\”\”\”\n result = add(2.5, 3.7)\n self.assertAlmostEqual(result, 6.2, places=1)\n\nif __name__ == ‘__main__’:\n unittest.main()”

}

Result: Successfully wrote to ./test_calculator.py...

--- Iteration 4 ---

Stop reason: tool_use

Agent: Now let’s run the test to make sure it passes:

Tool: execute_code

Input: {

“code”: “import subprocess\nimport sys\n\n# Run the test file\nresult = subprocess.run([sys.executable, ‘test_calculator.py’], \n capture_output=True, text=True)\n\nprint(\”Test output:\”)\nprint(result.stdout)\nif result.stderr:\n print(\”Errors:\”)\n print(result.stderr)\nprint(f\”Return code: {result.returncode}\”)”

}

Result: Test output:

Errors:

.....

----------------------------------------------------------------------

Ran 5 tests in 0.000s

OK

Return code: 0

...

--- Iteration 5 ---

Stop reason: tool_use

Agent: Perfect! Let me also verify the files were created correctly by listing them and showing their contents:

Tool: list_files

Input: {

“path”: “.”

}

Result: hello.py

agent_workspace

main_orchestration.py

universal_agent_simple.py

README_ORCHESTRATION.md

UNIVERSAL_AGENT_SUMMARY.md

skills_agent2.py

universal_agent.py

.claude

requirements_orchestration.txt

_...

--- Iteration 6 ---

Stop reason: tool_use

Tool: read_file

Input: {

“path”: “./calculator.py”

}

Result: def add(a, b):

“”“

Add two numbers and return the result.

Args:

a: First number

b: Second number

Returns:

The sum of a and b

“”“

return a + b...

--- Iteration 7 ---

Stop reason: tool_use

Tool: read_file

Input: {

“path”: “./test_calculator.py”

}

Result: import unittest

from calculator import add

class TestCalculator(unittest.TestCase):

def test_add_positive_numbers(self):

“”“Test adding two positive numbers”“”

result = add(3...

--- Iteration 8 ---

Stop reason: end_turn

Agent: Excellent! I’ve successfully created:

1. **calculator.py** - A Python script with an `add` function that takes two parameters and returns their sum, with proper documentation.

2. **test_calculator.py** - A comprehensive test file using Python’s unittest framework that tests the add function with various scenarios:

- Positive numbers

- Negative numbers

- Mixed positive/negative numbers

- Adding zero

- Floating point numbers

3. **Ran the tests** - All 5 tests passed successfully, confirming that the add function works correctly.

The test output shows “OK” with all 5 tests passing, which means your calculator’s add function is working properly and handles various edge cases correctly.

Chief Evangelist @ Kore.ai | I’m passionate about exploring the intersection of AI and language. Language Models, AI Agents, Agentic Apps, Dev Frameworks & Data-Driven Tools shaping tomorrow.