Updated: Emerging RAG & Prompt Engineering Architectures for LLMs

Large Language Models (LLMs) take unstructured data as input, and their output is also unstructured and conversational.

Because LLMs are so unstructured in nature, both thinking and the market keep shifting on how best to implement them.

Because human conversational language is unstructured, the input to LLMs is conversational and unstructured, and is shaped through Prompt Engineering.

And the output of LLMs is also conversational and unstructured; a highly succinct form of natural language generation (NLG).

LLMs introduced functionality to fine-tune and create custom models, and fine-tuning was initially the primary approach to customising LLMs.

This approach has fallen into disfavour for three reasons:

LLMs have both a generative and a predictive side, and the generative power is easier to leverage than the predictive power. If the generative side of an LLM is presented with contextual, concise and relevant data at inference-time, hallucination is negated.

Fine-tuning LLMs involves training data curation, data transformation and LLM-related cost. Fine-tuned models are frozen at a definite time-stamp, and they still demand innovation around prompt creation and data presentation to the LLM.

When classifying text based on pre-defined classes or intents, NLU still has an advantage with built-in efficiencies.

I hasten to add that there have been significant advances in no-code to low-code UIs and in reducing fine-tuning costs. A prudent approach is to make use of a hybrid solution, drawing on the benefits of both fine-tuning and RAG.

The aim of fine-tuning LLMs is to engender more accurate and succinct reasoning and answers.

Hallucination, where the LLM returns highly plausible but incorrect answers, is one of the big problems with LLMs. The proven solution is to use highly relevant and contextual prompts at inference-time, and to ask the LLM to follow chain-of-thought reasoning.

Considering RAG

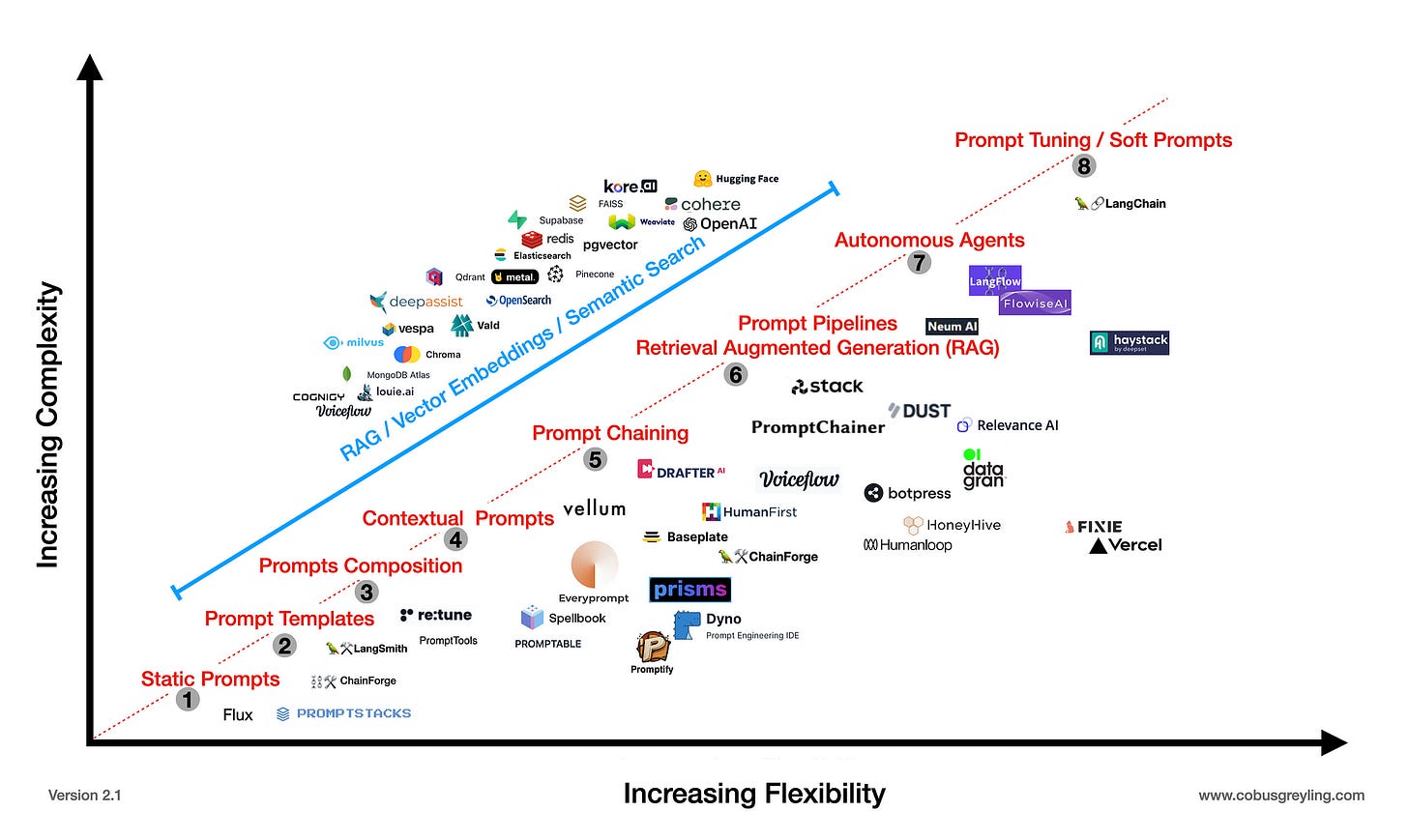

As seen in the image above, vector stores / databases with semantic search have emerged to provide the LLM with a contextual and relevant data snippet to reference.

Vector Stores, Prompt Pipelines and/or Embeddings are used to constitute a few-shot prompt. The prompt is few-shot because context and examples are included in the prompt.
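To make the retrieval step concrete, here is a minimal sketch of how a few-shot prompt might be constituted from retrieved context. It is not a real vector store: `embed` is a toy bag-of-words stand-in for an embedding model, and `retrieve`, `build_prompt`, `CHUNKS` and `EXAMPLES` are hypothetical names and data invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (the semantic search step)."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str],
                 examples: list[tuple[str, str]], k: int = 2) -> str:
    """Assemble a few-shot prompt: retrieved context, worked examples, then the question."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    shots = "\n".join(f"Q: {q}\nA: {a}" for q, a in examples)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Examples:\n{shots}\n\n"
        f"Q: {query}\nA:"
    )


# Hypothetical knowledge snippets and one worked example.
CHUNKS = [
    "Refunds are processed within 5 business days.",
    "Our support desk is open on weekdays from 9 to 5.",
    "Shipping to Europe takes 7 to 10 days.",
]
EXAMPLES = [("Do you ship to Europe?", "Yes, delivery takes 7 to 10 days.")]

prompt = build_prompt("How long do refunds take?", CHUNKS, EXAMPLES, k=1)
print(prompt)
```

In a real implementation the bag-of-words vectors would be replaced by embeddings from a model, and the sorted list by a vector database query; the shape of the assembled prompt stays the same.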

In the case of Autonomous Agents, other tools can also be included like Python Math Libraries, Search and more. The generated response is presented to the user, and also used as context for follow-up or next-step queries or dialog turns.
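The agent loop described above can be sketched roughly as follows. This is an illustration under loud assumptions: `choose_tool` is a rule-based stand-in for the decision an LLM would make, the `search` tool is a stub that performs no real search, and all names here are hypothetical rather than taken from any agent framework.

```python
import math
import re

# Hypothetical tool registry: each tool takes a string argument and returns a string.
TOOLS = {
    "sqrt": lambda arg: str(math.sqrt(float(arg))),        # Python math library
    "search": lambda arg: f"(stub) no results for {arg!r}",  # placeholder, no real search
}


def choose_tool(query: str, history: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM's tool choice; a real agent would prompt the model
    with the query and the history instead of using a regular expression."""
    match = re.search(r"square root of (\d+(?:\.\d+)?)", query.lower())
    if match:
        return "sqrt", match.group(1)
    return "search", query


def run_turn(query: str, history: list[str]) -> str:
    """Run one dialog turn: pick a tool, call it, and record the exchange."""
    tool, arg = choose_tool(query, history)
    observation = TOOLS[tool](arg)
    response = f"[{tool}] {observation}"
    # The response is stored so it can serve as context for follow-up turns.
    history.append(f"User: {query}")
    history.append(f"Agent: {response}")
    return response


history: list[str] = []
print(run_turn("What is the square root of 16?", history))
print(run_turn("Who maintains ChainForge?", history))
```

The point of the sketch is the loop shape: the response is both returned to the user and appended to `history`, so the next turn can draw on it.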

The process of creating contextually relevant prompts is further aided by Autonomous Agents and prompt pipelines, where a prompt is engineered in real-time based on relevant available data, conversation context and more.

Prompt chaining is a more manual process of creating a flow within a visual designer UI; the flow is fixed and sequential and lacks the autonomy of Agents. There are advantages and disadvantages to both approaches, and both can be used in concert.
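A fixed, sequential chain can be sketched in a few lines. This is a minimal illustration, assuming each step is a prompt template with an `{input}` placeholder; `run_chain`, `STEPS` and the `echo_llm` stub are hypothetical, and a real chain would call an actual model.

```python
from typing import Callable


def run_chain(steps: list[str], user_input: str,
              llm: Callable[[str], str]) -> list[str]:
    """Run prompt templates in a fixed order, feeding each output into the next step."""
    outputs = []
    previous = user_input
    for template in steps:
        prompt = template.format(input=previous)
        previous = llm(prompt)
        outputs.append(previous)
    return outputs


def echo_llm(prompt: str) -> str:
    """Stub model that wraps the prompt, so the data flow is visible."""
    return f"<{prompt}>"


# Hypothetical two-step flow, as might be laid out in a visual designer.
STEPS = [
    "Summarise: {input}",
    "Translate to French: {input}",
]

for step_output in run_chain(STEPS, "hello", echo_llm):
    print(step_output)
```

Unlike an agent, nothing here decides what to do next: the order of `STEPS` is set at design time, which is what makes a chain predictable but less flexible.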

Lastly, an emerging field is testing different LLMs against a prompt, as opposed to the past, where the focus was on testing various prompts against one single LLM. Tools for this include LangSmith, ChainForge and others.

Determining the best-suited model for a specific prompt matters because, within enterprise implementations, multiple LLMs will be used.
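As a rough sketch of the idea, the same prompt can be run against several models and the responses checked against an expectation. This does not use the LangSmith or ChainForge APIs; `compare_models` is a hypothetical helper, the two "models" are stubs returning canned text, and the substring check is a deliberately simple stand-in for a real evaluation metric.

```python
from typing import Callable


def compare_models(prompt: str, models: dict[str, Callable[[str], str]],
                   expected: str) -> dict[str, dict]:
    """Send one prompt to every model and record whether the expected text appears."""
    results = {}
    for name, model in models.items():
        response = model(prompt)
        results[name] = {
            "response": response,
            "passed": expected.lower() in response.lower(),
        }
    return results


# Stub models with canned answers; real ones would call different LLM endpoints.
MODELS = {
    "model_a": lambda p: "The capital of France is Paris.",
    "model_b": lambda p: "I am not sure.",
}

report = compare_models("What is the capital of France?", MODELS, expected="Paris")
best = [name for name, result in report.items() if result["passed"]]
print(best)
```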

I’m currently the Chief Evangelist @ Kore AI. I explore & write about all things at the intersection of AI & language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.